Scientists Closer to Reading Words From Your Brain

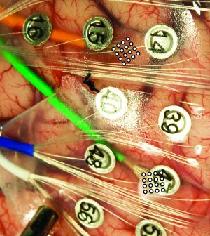

Those unable to speak may one day be able to have their words read directly out of their brains. Researchers at the University of Utah have used electrodes on the brain's surface to determine which words a patient was saying. As described in a recent publication in the Journal of Neural Engineering, scientists attached two grids, each with 16 microelectrodes, to two different parts of a patient's brain - a section that deals with facial muscles and movements, and a speech center known as Wernicke's area. Scientists then had the patient repeatedly speak ten words that they believed would be useful to people who are paralyzed and unable to talk: yes, no, more, less, hot, cold, thirsty, hungry, hello, and goodbye. They had up to a 90% success rate in distinguishing between the brain activity associated with any two words, and up to 48% success in picking out a single word from the field of ten. While these results are very preliminary, they demonstrate that we may be able to tell what someone is trying to say just by monitoring the surface of their brain.

Neuroscience is slowly making progress with translating brain signals into useful information. We've seen how the firing of motor neurons can be used to control a computer cursor and mechanical devices with Braingate, neurons in Broca's area of the brain (another speech center like Wernicke's) have been used to delve into how we process language, and scientists have had some luck translating brain activity into vowel sounds as well. Each of these techniques, however, has required researchers to place electrodes within the brain itself. Pushing wires into your grey matter has a host of associated risks and limitations, including brain damage. The University of Utah experiment used microelectrodes on the surface of the brain. While still under the skull, these electrodes do not penetrate the brain and only use surface signals to guess at activity deeper in the brain.

Of course, that distance does impose some limits on accuracy. Using all of the electrodes in both 16-microelectrode arrays, University of Utah scientists were only able to get 74% success in distinguishing between two words and only 28% in detecting which word was spoken among the ten options. The ten-word result is better than chance (10%) but far from great. After selecting the 5 electrodes with the best results from each array, the success rates jumped considerably - up to 90% and 48% respectively (as mentioned above). Guessing the correct word half the time isn't good enough to actually implement such a system in real scenarios, but it's a step in the right direction.
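To put those percentages in context, it helps to compare each one with what blind guessing would achieve. The short Python sketch below does that arithmetic for the figures quoted above. It is only an illustration, not the researchers' analysis: the function names are made up for this example, and it assumes every word is equally likely to be the right answer, so chance is 1 in 2 for a word pair and 1 in 10 for the full vocabulary.

```python
# Illustrative sketch only, not the study's method. Assumes each candidate
# word is equally likely, so chance accuracy is simply 1 / number of choices.

def chance_level(n_choices: int) -> float:
    """Accuracy expected from blind guessing among n equally likely choices."""
    if n_choices < 1:
        raise ValueError("n_choices must be at least 1")
    return 1.0 / n_choices


def times_better_than_chance(accuracy: float, n_choices: int) -> float:
    """How many times higher the reported accuracy is than blind guessing."""
    return accuracy / chance_level(n_choices)


# (description, number of choices, accuracy reported in the article)
reported = [
    ("two words, all electrodes", 2, 0.74),
    ("ten words, all electrodes", 10, 0.28),
    ("two words, best 5 electrodes per array", 2, 0.90),
    ("ten words, best 5 electrodes per array", 10, 0.48),
]

for label, n_choices, accuracy in reported:
    print(
        f"{label}: {accuracy:.0%} reported vs {chance_level(n_choices):.0%} chance "
        f"({times_better_than_chance(accuracy, n_choices):.1f}x)"
    )
```

Under that equal-likelihood assumption, 48% on ten words is about 4.8 times better than guessing, while 90% on a word pair is 1.8 times better, which is why the two numbers are not directly comparable.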

University of Utah scientists also learned some interesting general information about the brain's speech centers. While the part of the brain controlling facial movements consistently showed activity when the patient spoke, activity in Wernicke's area fluctuated as words were repeated and increased when the patient was congratulated by the researchers. This suggests that Wernicke's area is a higher-processing center and may not be particularly useful in developing a device that could let paralyzed patients speak. This sort of revelation could be very useful, however, in helping understand the brain in general.

As always, we should worry when an experiment has a testing sample of just one person. The patient, a man with epilepsy, had part of his skull already removed so that doctors could use penetrating electrodes to treat his condition. His open brain was a convenient testing ground for the surface-based microelectrode arrays. This sort of piggybacking onto other brain treatments is actually fairly common for experimental neuroscience - it's hard to justify cutting someone's brain open to test something you don't know will work, so it's much better to find people who already have to take that risk and ask to poke around. Still, there's no telling how the lessons learned in this speech research will apply to other patients.

If surface signals are the key to reading words from the brain, we might be able to improve the signal quality by improving the microelectrode array doing the reading. Bradley Greger, head of the research team, told University of Utah News that they were hoping to build an 11x11 microelectrode array next (with 121 electrodes). Future experiments may be able to pinpoint the exact areas on the brain's surface best used to monitor speech. In the recent research, the patient only repeated each word 31 to 96 times (he got tired during the hour-long sessions over four days). Future research may also improve on these success rates with greater repetition among multiple patients. It may be years, however, before such clinical trials begin.

It's unclear how close we'll need to get to the brain in order to provide a means of translating thoughts into words. Signals recorded from the scalp (EEG) are precise enough to allow those with locked-in syndrome (complete paralysis) a means of typing, but such systems are very slow. Greger's research suggests that microelectrode arrays on the brain's surface could be precise enough to allow us to pick out words from a simple vocabulary. Eventually we should be able to develop the means to translate neural activity into words as easily as our brains do every day, but it may mean sending wires deep into our skulls. Either way, being able to communicate with the outside world just by linking your thoughts to a computer may be worth a little poking into the brain.

[image credits: University of Utah News]

[source: University of Utah News, Greger et al 2010 JNE]
