Art and technology make beautiful music together. Bruno Zamborlin is a young joint PhD student in both computational technologies and art at IRCAM in Paris and Goldsmiths, University of London. He’s created an exciting interface that detects user inputs by picking up on acoustic vibrations in a surface. Almost any surface. With a simple microphone, the Mosaicing Gestural Surface system, or Mogees, can pick up taps, scratches, and other gestures on glass, wood, even a balloon. Zamborlin has developed Mogees as part of an ongoing series of unique pattern recognition technologies for different gesture interfaces. With the release of a video showcasing his Mogees project (see below), he’s received interest from many musicians looking to take advantage of its intuitive and creative means of mixing sounds for musical performance. The demonstration video hints at the variety of acoustic blending (mosaicing) that Mogees can handle and the versatility of the system across environments. As the 21st century continues to unfold, technology allows innovators like Zamborlin to challenge the classical assumptions of what an interface is, or even what a musical instrument is.

According to Zamborlin, Mogees users can define their own patterns to be recognized. Thus, while in the video there are only three ‘gestures’ shown, Zamborlin has experimented with up to about twenty at one time. A user can define a tap, or a slow tap, or a scratch, or really any acoustic input by recording an example. Then, when that input is repeated, Mogees will perform the response a user desires – typically playing a sound file as seen in the video. Users could record as many different input types, and link them to as many different audio files (or other controls) as they want. The only real limit is processing power.
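
The record-an-example workflow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Zamborlin's implementation: the `GestureRegistry` class, the `describe` function, and the two descriptors it computes (RMS energy and zero-crossing rate) are hypothetical stand-ins for whatever pattern recognition Mogees actually uses. Each recorded example is stored as a template, and a new input is matched to the nearest one.

```python
import math


def describe(samples):
    """Summarise a clip as (RMS energy, zero-crossing rate).

    Assumed descriptors for illustration only; needs at least two samples.
    """
    n = len(samples)
    rms = math.sqrt(sum(s * s for s in samples) / n)
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0)
    )
    return (rms, crossings / (n - 1))


class GestureRegistry:
    """Hypothetical store of user-recorded gestures and their responses."""

    def __init__(self):
        self.templates = []  # list of (name, descriptor, action)

    def record(self, name, samples, action):
        """Register one recorded example and the response linked to it."""
        self.templates.append((name, describe(samples), action))

    def recognise(self, samples):
        """Return (name, action) of the template nearest to the input."""
        if not self.templates:
            raise ValueError("no gestures have been recorded yet")
        d = describe(samples)
        # Plain Euclidean distance; the two descriptors are not rescaled.
        name, _, action = min(
            self.templates, key=lambda t: math.dist(d, t[1])
        )
        return name, action
```

A user would call `record("tap", tap_samples, "kick.wav")` once per gesture and then pass each new microphone clip to `recognise`. Every added template adds one more distance computation per input, which is one way to read the remark that processing power is the only real limit.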

Part of Mogees’ innovation is in the ways inputs are linked to different musical sounds for playback. Each input sound is analyzed frame by frame so that an audio file can be cued up before the input gesture is even finished. Zamborlin also uses physical modeling so that gestures can be connected to appropriate physical laws – in other words, the gestures inspire sounds that echo, pop, or reverberate according to how the gesture itself was made. Additionally, Mogees uses concatenative synthesis, or “audio mosaicing,” to link the sounds from the microphone to the closest matching audio sample from a database. The result, as seen in the demo video, is a set of sounds that seem to emerge natively from the very surfaces that Zamborlin touches. A balloon really doesn’t sound like a rattle when scratched, but the link between gesture and audio is so smooth that it seems like it should.
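
To make the frame-by-frame matching concrete, here is a minimal Python sketch of the general idea behind concatenative synthesis: cut the input into short frames, describe each frame, and pick the closest unit from a database of sample frames. Everything specific here is an assumption for illustration, including the `FRAME` size, the function names, and the two simple descriptors. Real systems typically rely on richer spectral features, and the article does not say which ones Mogees uses.

```python
import math

FRAME = 64  # samples per analysis frame (hypothetical value)


def frame_features(frame):
    """Describe one frame as (RMS energy, zero-crossing rate)."""
    rms = math.sqrt(sum(s * s for s in frame) / len(frame))
    crossings = sum(
        1 for a, b in zip(frame, frame[1:]) if (a < 0) != (b < 0)
    )
    return (rms, crossings / (len(frame) - 1))


def frames(samples, size=FRAME):
    """Yield consecutive non-overlapping frames, dropping a short tail."""
    for start in range(0, len(samples) - size + 1, size):
        yield samples[start:start + size]


def build_corpus(named_clips):
    """Index every frame of every database clip by its features."""
    corpus = []
    for name, samples in named_clips.items():
        for index, frame in enumerate(frames(samples)):
            corpus.append((frame_features(frame), name, index))
    return corpus


def mosaic(input_samples, corpus):
    """Map each input frame to the (clip name, frame index) nearest to it."""
    selected = []
    for frame in frames(input_samples):
        features = frame_features(frame)
        _, name, index = min(
            corpus, key=lambda unit: math.dist(features, unit[0])
        )
        selected.append((name, index))
    return selected
```

Because `mosaic` handles one frame at a time, a live version could choose and start playing a matching unit as soon as the first frame arrives, rather than waiting for the whole gesture to finish.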

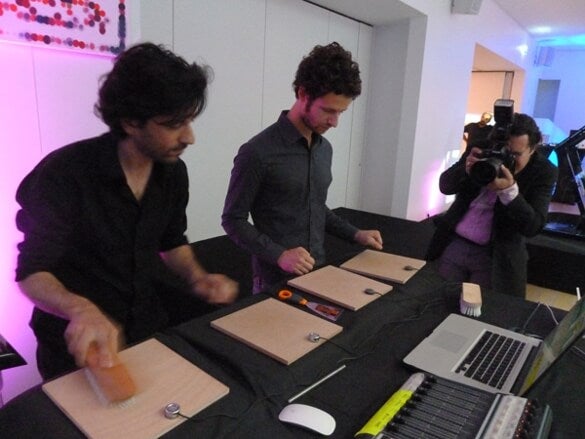

Mogees is one of several nontraditional interface projects Zamborlin has worked on. In fact, Mogees owes much of its development to an earlier project with Zamborlin’s adviser Frédéric Bevilacqua called The Gesture Follower. Zamborlin has also collaborated with Lorenzo Pagliei, a composer at IRCAM, to create AirPlay – a nontraditional gesture-controlled musical setup that blends audio clips in real time for performance. AirPlay uses wireless sensors, Kinect 3D tracking sensors, and acoustic microphones to combine a variety of inputs into one composition. Shake a fist and the performers can bang a virtual gong, slide a hand in the air and they can control a turntable-like sound, or touch a wooden surface and an acoustic microphone will translate that input into audio controls. While that acoustic input isn’t Mogees (it’s much simpler), it does suggest what Mogees is capable of doing. The following video shows one of Zamborlin and Pagliei’s AirPlay performances, entitled I materiali. It has been cued to show a section where the acoustic inputs (seen as the microphones attached to the wooden boards on the table) feature more prominently.

As inspirational as Zamborlin’s acoustic pattern recognition may be, the basic concept behind Mogees is old news. In fact, scratch-based acoustic control seems to be reinvented around the world every two years or so. Singularity Hub discussed how Chris Harrison from Carnegie Mellon University created a delightful scratch-based control system for simple electronics in 2008. Harrison’s Scratch Input was more focused on recognizing a few key gestures than Mogees’ more open, user-defined scheme, but the core idea is remarkably similar. The same could almost be said of TAI-CHI (Tangible Acoustic Interfaces for Computer Human Interaction), a project funded by the EU and produced in 2006. Again, TAI-CHI had a different focus – it was aimed at determining absolute position and movement using an array of microphones – but at a conceptual level it wasn’t very different from Mogees either. TAI-CHI seems to be defunct and Harrison seems to have moved on to other projects. Zamborlin, however, continues to work on Mogees as part of his PhD. Currently he’s working on the UI for the project, trying to find ways to make programming the system simple for users who aren’t computer savvy. He also plans on possibly integrating Mogees (or part of it) into a “Seeing Touching” project at IRCAM that will blur the lines of when input is detected in a gesture – either during the motion in the air or during the contact with a control. In other words, Zamborlin is actively improving Mogees and finding new applications for the technology. While others have explored the acoustic input space before, Zamborlin is pushing the envelope. Mogees recognizes gestures as they happen, can be told to respond to any acoustic input a user desires, and can be integrated into other projects.

Detecting human movement on hard surfaces has considerable merit as a gestural interface. Place a microphone on an electronically controlled door, and it could conceivably replace the need for keys. Or embed a microphone in a laptop and the entire structure could serve as the touchpad. Yet TAI-CHI and Scratch Input show that the accuracy of such an interface would be limited. Not impractical per se, just limited. Mogees may be a better approach. Instead of trying for an absolute control scheme to replace a mouse and keyboard, acoustic input may serve best when it is crafted to each user’s desires. Record the sound you want to use as an input, then use it as you like. I’m particularly interested in Mogees as an artistic tool – a method for harnessing the human inclination for kinetic motion during creative expression. Even if Zamborlin doesn’t bring a Mogees-based project to market in the next few years, I hope that the next student to “invent” audio gesture interfaces on hard surfaces with a simple microphone decides to use it as a child’s toy or musician’s turntable. Watching AirPlay, I see great potential for this technology to inspire our minds towards joyful expression.

[screen capture credit: Bruno Zamborlin]

[source: BrunoZamborlin.com, conversation with Bruno Zamborlin]