Didn’t Get The Job? A Computer May Be To Blame


Robots continue to invade the workplace. But these bots aren’t going to sift through mountains of paperwork or fetch a pair of scissors for you. On the contrary, it is you who will have to answer to them.

There’s a fast-growing trend in the corporate world to replace human bias with algorithmic precision during the hiring process. In addition to an interview – or even during pre-interview screening – hopeful applicants are completing questionnaires. But unlike similar questionnaires of the past, it's the computer that looks at the answers and decides whether or not the applicant has got what the company is looking for.

Take Xerox. In staffing its call centers, Xerox used to favor applicants with call center experience. But then it analyzed the data. It turned out that the characteristic that best predicted a quality hire was not experience but creativity.

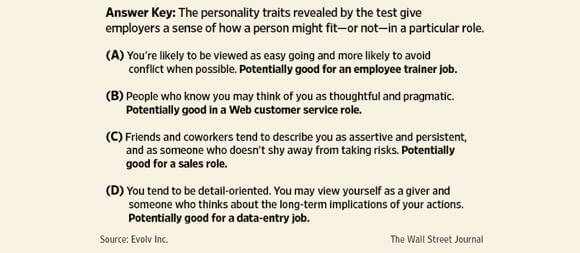

A large part of the testing is personality-based. For instance, the Xerox software asked applicants to choose between statements such as “I ask more questions than most people do” and “People tend to trust what I say.” With enough data, statistical analysis reveals which answers best predict whether an applicant will become a good employee.
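To make that idea concrete, here is a minimal Python sketch of one simple way to rank questions by predictive value. It is not Evolv’s method, which the article does not describe. It assumes each past hire’s answers are recorded as a choice between two statements, labeled "A" and "B", plus a yes/no judgment of whether the hire worked out; the question IDs, the toy data and the `rank_predictors` function are all hypothetical.

```python
from collections import defaultdict


def rank_predictors(records):
    """Rank questions by how strongly the chosen statement separates good hires from bad.

    records: iterable of (answers, good_hire) pairs, where answers maps a
    question ID to "A" or "B" (the statement picked) and good_hire is a bool.
    Returns (question, gap) pairs, where gap is the good-hire rate among "A"
    answers minus the rate among "B" answers, largest absolute gap first.
    """
    # For each question and choice, tally [number of good hires, number of answers]
    counts = defaultdict(lambda: {"A": [0, 0], "B": [0, 0]})
    for answers, good_hire in records:
        for question, choice in answers.items():
            tally = counts[question][choice]
            tally[0] += int(good_hire)
            tally[1] += 1

    gaps = {}
    for question, by_choice in counts.items():
        (good_a, total_a), (good_b, total_b) = by_choice["A"], by_choice["B"]
        if total_a and total_b:  # need answers on both sides to compare
            gaps[question] = good_a / total_a - good_b / total_b
    return sorted(gaps.items(), key=lambda item: abs(item[1]), reverse=True)


# Toy data: hypothetical question IDs, four past hires
records = [
    ({"asks_more_questions": "A", "people_trust_me": "A"}, False),
    ({"asks_more_questions": "B", "people_trust_me": "A"}, True),
    ({"asks_more_questions": "B", "people_trust_me": "B"}, True),
    ({"asks_more_questions": "A", "people_trust_me": "B"}, False),
]
print(rank_predictors(records))
# → [('asks_more_questions', -1.0), ('people_trust_me', 0.0)]
```

In this toy data, picking "A" on the first question goes with worse outcomes, while the second question tells the two groups apart not at all. With only four hires such a gap is easily noise, which is why this kind of analysis needs large numbers of past applicants.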

Some of the questions attempt to gauge the applicant’s attitude toward alcohol or how well they would tolerate a long commute. According to Evolv Inc, the San Francisco start-up that runs the so-called talent management software for Xerox, the ideal applicant is creative but not very inquisitive or empathetic, lives close to the job, has reliable transportation to it, and belongs to one or more social networks. But the software also sets a limit on how many social networks is too many: five. How many HR offices would have been that specific about an applicant’s social networking? Only by rigorously crunching data on hundreds of thousands of past good and bad hires can such particulars surface.

But do the algorithms really work, or are they simply a way for companies to automate bad hiring practices? According to research, they do work. Companies using the software have seen performance rise and both attrition and disability claims fall.

As an applicant, you can try to game the system, but that can be difficult. Companies know that applicants will try to tell them what they want to hear, so they craft their questions in ways that make the “obvious” answer hard to spot. The approach is based not on what some HR person thinks makes a tough question, but on data about how past applicants have answered.

If company spending is any indication, the software’s predictive power is paying off. Global spending on talent-management software rose from $3.3 billion in 2010 to $3.8 billion in 2011, an increase of 15 percent. Some big tech companies have noticed the trend and are making sure they get a piece of the automated hiring pie: Oracle acquired Taleo in February, and IBM acquired Kenexa in August. Both Taleo and Kenexa specialize in employee assessment technology.
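The 15 percent figure follows directly from the two spending totals, in billions of dollars:

$$\frac{3.8 - 3.3}{3.3} = \frac{0.5}{3.3} \approx 0.152 \approx 15\%$$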


Related to these technologies is industrial-organizational (I/O) psychology, a branch of psychology that applies psychological principles to whole organizations. The connection makes sense: the same things that make a person healthy, like physical and mental well-being, make for a healthy workplace. But because I/O psychology ultimately focuses on the bottom line, it tends to be practical-minded. Instead of resolving sibling rivalries, an I/O psychologist will match an employee with the job that best fits their communication skills, attention to detail, leadership skills and so on. Talent management software works on the same principle: pairing the best individuals with the job. The difference between the software and I/O psychologists is that the software is fine-tuned with data, pairing, in the Xerox case, “dependable call center employee” with “creativity” without any a priori notions about what defines a dependable call center employee.

With the unemployment rate where it is, the software’s efficiency is no doubt galling to the vast majority of applicants who don’t pass the test. But employers, being human (at least for now), are hostage to human biases. Confidence, quick thinking, an easy smile and a firm handshake are all admirable qualities on the surface, but hiring based on a gut feeling about someone you have just met doesn’t always work. In fact, experts argue that intuition has proven a poor hiring tool. Compared with the software, conventional hiring methods produce a markedly uneven group of hires and aren’t very good at predicting which applicants will become quality, long-term employees. For evidence, we need only look at the many studies showing that an applicant’s perceived qualifications are strongly affected by whether their name is John or Jane.

Even as companies tout the hard logic of talent management software, disgruntled non-hires are calling a very human foul: bias. In one case, a woman with speech and hearing impairments faced this question: “Describe the hardest time you’ve had understanding what someone was talking about.” After she scored 40 percent overall, the software concluded she was less likely than other applicants to “listen carefully, understand and remember.” It also suggested that her interviewer pay attention to “correct language” and “clear enunciation.” The woman has filed a complaint with the Equal Employment Opportunity Commission alleging she was discriminated against because of her disability.

There’s an inherent risk in using large data sets to select for the ‘ideal applicant.’ At some point the selection can begin, inadvertently, to work against protected groups: people with characteristics that employers cannot, by law, consider when hiring. Some of the most visible of these characteristics are age, gender, pregnancy, race and color. In the example above, the software unknowingly took the applicant’s disability into account and counted it against her. And while she took her complaint to the EEOC, many people do not, largely because they never see their assessment scores. In 2011 the EEOC received almost 100,000 complaints in total; only 164 of them were related to talent management software.
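One simple way an employer might watch for this is to compare how often applicants from different groups pass the screen. The Python sketch below is a hypothetical illustration, not a practice the article attributes to any vendor: the group labels and counts are made up, the `flag_adverse_impact` function is invented for this example, and the 0.8 cutoff borrows the “four-fifths” rule of thumb from U.S. federal guidelines on employee selection, used here only as a screening heuristic rather than a legal test.

```python
def flag_adverse_impact(results, threshold=0.8):
    """Flag groups whose pass rate is well below the best group's pass rate.

    results maps a (hypothetical) group label to a (passed, applied) pair.
    Returns {group: impact_ratio} for every group whose ratio to the
    highest pass rate falls below `threshold`.
    """
    # Pass rate per group, skipping groups with no applicants
    rates = {g: passed / applied
             for g, (passed, applied) in results.items() if applied > 0}
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:  # nobody passed at all, so there is no ratio to compare
        return {}
    return {g: rate / best for g, rate in rates.items() if rate / best < threshold}


# Made-up screening results for two hypothetical groups
results = {"group_x": (50, 100), "group_y": (30, 100)}
print(flag_adverse_impact(results))
# → {'group_y': 0.6}
```

A flag like this does not show why a group fares worse, only that its pass rate deserves a closer look, which is exactly the kind of check that could catch a case like the one above before it reaches the EEOC.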

From farm to factory to food-making, robots are increasingly working alongside us or replacing us altogether. Automating the evaluation of job applicants seems an obvious next step, especially since companies will do whatever they can to trim the fat and increase productivity and profits. But as with any new technology, there are going to be some growing pains as talent management software spreads. Employers will have to watch for unintended consequences of applying an algorithm to the future health of their company, and for the repercussions of passing human bias on to unwitting software. Maybe they’ll come up with another program for that too.

Peter Murray was born in Boston in 1973. He earned a PhD in neuroscience at the University of Maryland, Baltimore studying gene expression in the neocortex. Following his dissertation work he spent three years as a post-doctoral fellow at the same university studying brain mechanisms of pain and motor control. He completed a collection of short stories in 2010 and has been writing for Singularity Hub since March 2011.
