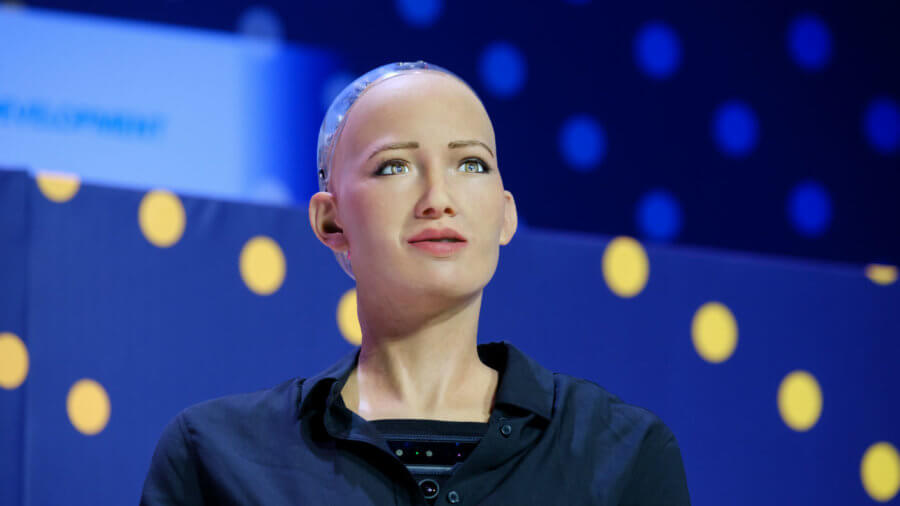

You might not have heard of Hanson Robotics, but if you’re reading this, you’ve probably seen their work. They are the company behind Sophia, the lifelike humanoid robot that’s made dozens of high-profile media appearances. Before that, they were behind that strange-looking robot that seemed a bit like Asimo with Albert Einstein’s head. Or maybe you saw BINA48, who was interviewed for the *New York Times* in 2010 and featured in Jon Ronson’s books. For the sci-fi aficionados amongst you, they even made a replica of legendary author Philip K. Dick, best remembered for having books with titles like *Do Androids Dream of Electric Sheep?* turned into films with titles like *Blade Runner*.

Hanson Robotics, in other words, with their proprietary brand of lifelike humanoid robots, have been playing the same game for a while. Sometimes it can be a frustrating game to watch. Anyone who gives one of these robots the slightest bit of thought will realize that it is essentially a chatbot, with all the limitations this implies. Indeed, even in that *New York Times* interview with BINA48, author Amy Harmon describes it as a frustrating experience, with “rare (but invariably thrilling) moments of coherence.” This sensation will be familiar to anyone who’s conversed with a chatbot that has a few clever responses.

The glossy surface belies the lack of real intelligence underneath; it seems, at first glance, like a much more advanced machine than it is. Peeling back that surface layer—at least for a Hanson robot—means you’re peeling back Frubber. This proprietary substance—short for “Flesh Rubber,” which is slightly nightmarish—is surprisingly complicated. Up to thirty motors are required just to control the face; they manipulate liquid cells in order to make the skin soft, malleable, and capable of a range of different emotional expressions.

A quick combinatorial glance at the 30 or so motors suggests that there are millions of possible combinations; researchers identify 62 that they consider “human-like” in Sophia, although not everyone agrees with this assessment. Arguably, the technical expertise that went into reconstructing the range of human facial expressions far exceeds what went into the more simplistic chat engine the robots use, although it’s the chat engine that allows a robot to inflate the punters’ expectations by answering a few pre-programmed questions in an interview.
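To put a rough number on that combinatorial glance, here is a minimal sketch. It assumes, purely for illustration, that each motor can be set independently to one of a small number of discrete positions; real motors move continuously, so this is a simplification rather than a description of how Frubber faces are driven. The function name `count_face_configurations` and the choice of two or three positions per motor are hypothetical.

```python
def count_face_configurations(num_motors: int, positions_per_motor: int) -> int:
    """Count distinct settings when each motor independently takes one of
    `positions_per_motor` discrete positions (an illustrative assumption)."""
    if num_motors < 0 or positions_per_motor < 1:
        raise ValueError("need num_motors >= 0 and positions_per_motor >= 1")
    # Independent choices multiply: k options for each of n motors gives k**n.
    return positions_per_motor ** num_motors


# Assumed figures: 30 motors, each with only 2 or 3 possible positions.
two_position_face = count_face_configurations(30, 2)
three_position_face = count_face_configurations(30, 3)

print(f"{two_position_face:,}")    # 1,073,741,824
print(f"{three_position_face:,}")  # 205,891,132,094,649

# Share of the two-position space taken up by 62 "human-like" expressions.
human_like_share = 62 / two_position_face
print(f"{human_like_share:.2e}")
```

Even under the crudest assumption of two positions per motor, the count passes a billion, so “millions” is, if anything, conservative. The 62 expressions judged human-like are a vanishingly small slice of that space.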

Hanson Robotics’ belief is that, ultimately, a lot of how humans will eventually relate to robots is going to depend on their faces and voices, as well as on what they’re saying. “The perception of identity is so intimately bound up with the perception of the human form,” says David Hanson, company founder.

Yet anyone attempting to design a robot that won’t terrify people has to contend with the uncanny valley—that strange blend of concern and revulsion people react with when things appear to be creepily human. Between cartoonish humanoids and genuine humans lies what has often been a no-go zone in robotic aesthetics.

The uncanny valley concept originated with roboticist Masahiro Mori, who argued that roboticists should avoid trying to replicate humans exactly. Since anything that wasn’t perfect, but merely very good, would elicit an eerie feeling in humans, shirking the challenge entirely was the only way to avoid the uncanny valley. Designing a robot that reassures people is probably made more difficult by the endless stream of articles about AI taking over the world that inexplicably conflate AI with killer humanoid Terminators, which aren’t particularly likely to exist (although maybe it’s best not to push robots around too much).

The idea behind this realm of psychological horror is fairly simple, cognitively speaking.

We know how to categorize things that are unambiguously human or non-human. This is true even if they’re designed to interact with us. Consider the popularity of Aibo, Jibo, and other robots that make no attempt to resemble humans. Something that resembles a human, but isn’t quite right, is bound to evoke a fear response in the same way slightly distorted music or slightly rearranged furniture in your home will. The creature simply doesn’t fit.

You may well reject the idea of the uncanny valley entirely. David Hanson, naturally, is not a fan. In the paper Upending the Uncanny Valley, he argues that great art forms have often resembled humans, but the ultimate goal for humanoid roboticists is probably to create robots we can relate to as something closer to humans than works of art.

Meanwhile, Hanson and other scientists produce competing experiments to either demonstrate that the uncanny valley is overhyped, or to confirm it exists and probe its edges.

The classic experiment involves gradually morphing a cartoon face into a human face, via some robotic-seeming intermediaries—yet it’s in movement that the real horror of the almost-human often lies. Hanson has argued that incorporating cartoonish features may help—and, sometimes, that the uncanny valley is a generational thing which will melt away when new generations grow used to the quirks of robots. Although Hanson might dispute the severity of this effect, it’s clearly what he’s trying to avoid with each new iteration.

Hiroshi Ishiguro is the latest of the roboticists to have dived headlong into the valley.

Building on the work of pioneers like Hanson, those who study human-robot interaction are pushing at the boundaries of robotics—but also of social science. It’s usually difficult to simulate what you don’t understand, and there’s still an awful lot we don’t understand about how we interpret the constant streams of non-verbal information that flow when you interact with people in the flesh.

Ishiguro took this imitation of human forms to extreme levels. Not only did he record and log people’s physical movements on video, but some of his robots are based on replicas of real people; the Repliee series began with a ‘replicant’ of his daughter. This involved making a silicone cast of her entire body. Later experiments focused on creating Geminoid, a replica of Ishiguro himself.

As Ishiguro aged, he realized that it would be more effective to keep himself looking like his replica through cosmetic surgery than to continually create new casts of his face, each with more lines than the last. “I decided not to get old anymore,” Ishiguro said.

We love to throw around abstract concepts and ideas: humans being replaced by machines, cared for by machines, getting intimate with machines, or even merging themselves with machines. You can take an idea like that, hold it in your hand, and examine it—dispassionately, if not without interest. But there’s a gulf between thinking about it and living in a world where human-robot interaction is not a field of academic research, but a day-to-day reality.

As the scientists studying human-robot interaction develop their robots, their replicas, and their experiments, they are making some of the first forays into that world. We might all be living there someday. Understanding ourselves—decrypting the origins of empathy and love—may be the greatest challenge to face. That is, if you want to avoid the valley.

Image Credit: Anton Gvozdikov / Shutterstock.com