MIT’s Ridiculously Colorful Glove is Latest Hand Tracking Interface


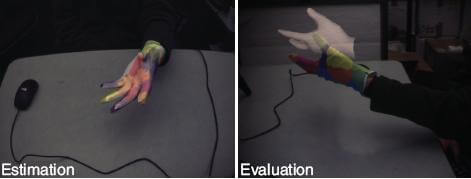

Human-computer interfaces just got a lot more colorful. Graduate student Robert Wang at MIT's Computer Science and Artificial Intelligence Lab (CSAIL) has developed a simple but effective system for tracking hand motion that requires only a webcam and a glove. The Lycra glove is covered in colorful blotches designed to help the hand-tracking software determine how the user's hand is posed. The color-based technique allows for cheap, fast motion capture at interactive rates, letting you move virtual objects on the screen. Wang gives a great overview of the system in his demo video; it's the most powerful and awesome eyesore I've ever seen.

There is a vast array of motion capture hardware systems in development. Gesture-based control technology, which will be coming to your TV, video game console, and computer this year, relies on these systems to identify and understand hand positions and motion. Wang and his advisor, Jovan Popovic, have accomplished most of the feats I've seen in other hand-tracking systems, but with much simpler (and cheaper) hardware. The trick is in the colors, which give the video-sampling software reference points for determining hand orientation. Pranav Mistry (from MIT's Media Lab) uses an even simpler version of color-enhanced tracking for his Sixth Sense personal augmented reality device: just bits of colored tape or marker caps on his fingertips. Though both of these MIT solutions look silly, you can't argue with their accuracy and speed.
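To make the "colors as reference points" idea concrete, here is a minimal Python sketch. It is not Wang and Popovic's algorithm (the article doesn't describe their pipeline); it only illustrates the general principle under stated assumptions: label each pixel by its nearest glove color, take the centroid of each color patch as a reference point, and read a coarse in-plane hand angle off the vector between two patches. The palette, the background threshold, the patch names, and the function names are all hypothetical.

```python
import math

# Hypothetical glove palette: label -> reference RGB. The real glove's colors
# and patch layout are not specified in the article; these are placeholders.
PALETTE = {
    "red": (220, 30, 30),
    "green": (30, 200, 60),
    "blue": (30, 60, 220),
    "yellow": (230, 220, 40),
}
# Pixels whose squared RGB distance to every palette color exceeds this are
# treated as background. Assumed value, for illustration only.
BACKGROUND_THRESHOLD = 60 ** 2


def classify_pixel(rgb):
    """Return the nearest palette label for one pixel, or None for background."""
    best_label, best_dist = None, float("inf")
    for label, ref in PALETTE.items():
        dist = sum((a - b) ** 2 for a, b in zip(rgb, ref))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label if best_dist <= BACKGROUND_THRESHOLD else None


def patch_centroids(image):
    """Map each detected color label to the (x, y) centroid of its pixels.

    `image` is a list of rows, each row a list of (r, g, b) tuples.
    These centroids play the role of the "reference points".
    """
    sums = {}  # label -> [sum_x, sum_y, pixel_count]
    for y, row in enumerate(image):
        for x, rgb in enumerate(row):
            label = classify_pixel(rgb)
            if label is not None:
                acc = sums.setdefault(label, [0, 0, 0])
                acc[0] += x
                acc[1] += y
                acc[2] += 1
    return {label: (sx / n, sy / n) for label, (sx, sy, n) in sums.items()}


def in_plane_angle(centroids, base="red", tip="blue"):
    """Angle in degrees of the vector from the `base` patch to the `tip` patch.

    Image y grows downward, so positive angles point down-right on screen.
    """
    (x0, y0), (x1, y1) = centroids[base], centroids[tip]
    return math.degrees(math.atan2(y1 - y0, x1 - x0))


if __name__ == "__main__":
    BG, R, B = (0, 0, 0), (220, 30, 30), (30, 60, 220)
    # 3x3 toy frame: red patch at top-left, blue patch at bottom-right.
    frame = [
        [R, BG, BG],
        [BG, BG, BG],
        [BG, BG, B],
    ]
    centroids = patch_centroids(frame)
    print(centroids)  # {'red': (0.0, 0.0), 'blue': (2.0, 2.0)}
    print(in_plane_angle(centroids))  # roughly 45 degrees (down-right)
```

A real tracker has to cope with lighting changes, occlusion, and the full 3D pose of every finger, so a single angle between two patches is only a toy stand-in for what the glove's many distinct colors make possible.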

You can find even more videos of the colorful glove tracking system on Wang's YouTube channel or the project website.

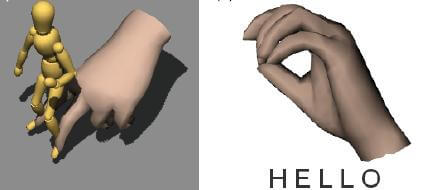

Wang and Popovic have experimented with several different applications for their simplified hand-tracking system. According to the related paper, they've had success using gesture recognition to read American Sign Language (ASL). They've also used the motion capture to let a user control animated figures: a virtual hand can be moved around to explore diagrams, or a walking motion with the fingers can control a bipedal virtual puppet. In physics-based simulated worlds, the glove can be used to pick up virtual objects and move them around. There are many possibilities for applying the glove to various augmented reality tasks. Wang also created a video highlighting some of the more humorous possible applications.


The biggest possible application, however, has to be a general human-computer interface. The next generation of HCIs is starting to move from the lab to the market, and gesture controls are as likely to succeed as kinetic computing blocks, touchscreens, and all the other possibilities out there. Will Wang's system be competitive with the likes of Project Natal, gesture-controlled TV, and the amazing Minority Report interface? No, probably not. But it shouldn't have to be.

By relying only on innovative software, a webcam, and a colorful glove, Wang has carved out another kind of market: the low-end, user-empowered side of things. Wang will be releasing a (free?) API soon via the project website, presumably allowing others to make their own gloves and try out the interface for themselves. It would be nice for Wang to make the software open source so that these users could also help innovate and improve the system. For example, the current setup has some limitations in depth perception and glove placement because a single webcam provides only monocular vision. Yet we know that (relatively) cheap stereoscopic webcams are available. A user of the API (or Wang himself) could overcome these limitations by updating the software to take advantage of a 3D webcam.
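The reason a second camera helps is the standard stereo triangulation relation, shown here as a general illustration rather than anything from Wang's software. For two rectified, side-by-side cameras with focal length $f$ (in pixels) and baseline $B$ (the distance between the lenses), a point that appears shifted by a disparity of $d$ pixels between the left and right images lies at depth $Z$:

$$
Z = \frac{f\,B}{d}
$$

With hypothetical numbers, not measurements from any particular webcam, say $f = 700$ px, $B = 6$ cm, and $d = 35$ px, this gives $Z = 700 \times 0.06 / 35 = 1.2$ m. Because depth is inversely proportional to disparity, the estimate gets coarser as the hand moves farther away: a one-pixel disparity error matters more when $d$ is small.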

I've seen a lot of different human-computer interfaces, but I still haven't found one that clearly stands out as the one that will become a new standard. Chances are that, in the short term, there will be a plurality of systems replacing our use of keyboards and mice. I like Wang's colorful glove approach to the problem because it is cheap, easy to employ, and primarily reliant on improvements in software, not hardware. All gesture controls, however, are going to need to find a way to incorporate haptics to give users some sense of feedback from the computers they interact with. Maybe Wang will find a way to include some simple electronics in the glove (heater, vibrator, electric pulse) to make the experience more tactile. In any case, I applaud Wang and all the other HCI innovators out there. Every new system is a step closer to finding the next great interface that will enhance and help define our growing dependence on computers.

[image credits: CSAIL, Wang et al. 2010]

[video credits: Robert Wang]

[source: CSAIL, Wang et al. 2010]
