All Topics

Artificial Intelligence

AI Can Now Design and Run Thousands of Experiments Without Human Hands. We Aren’t Ready for the Risk to Biosecurity.

Stephen D. Turner

An AI Solution to an 80‑Year‑Old Problem Has Shocked Mathematicians

Melissa Lee

AI Lab Partners Are Rewiring the Hunt for New Drugs

Shelly Fan

Popular

In the Scramble to Power AI, Investors Bet $140 Million on Data Centers at Sea

You Probably Wouldn’t Notice if a Chatbot Slipped Ads Into Its Responses

An AI Just Beat Doctors at Diagnosing ER Patients

Sony’s Table-Tennis Robot Beat Elite Human Players With Unorthodox Moves

Printed Neurons That Mimic Brain Cells Could Slash AI’s Energy Bill

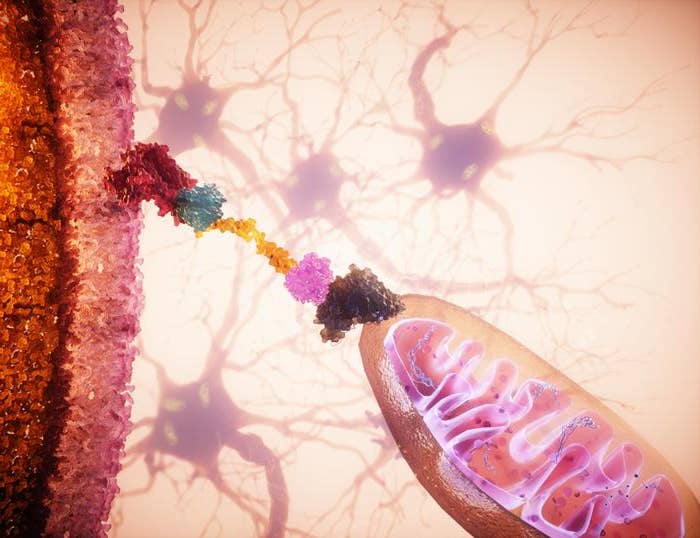

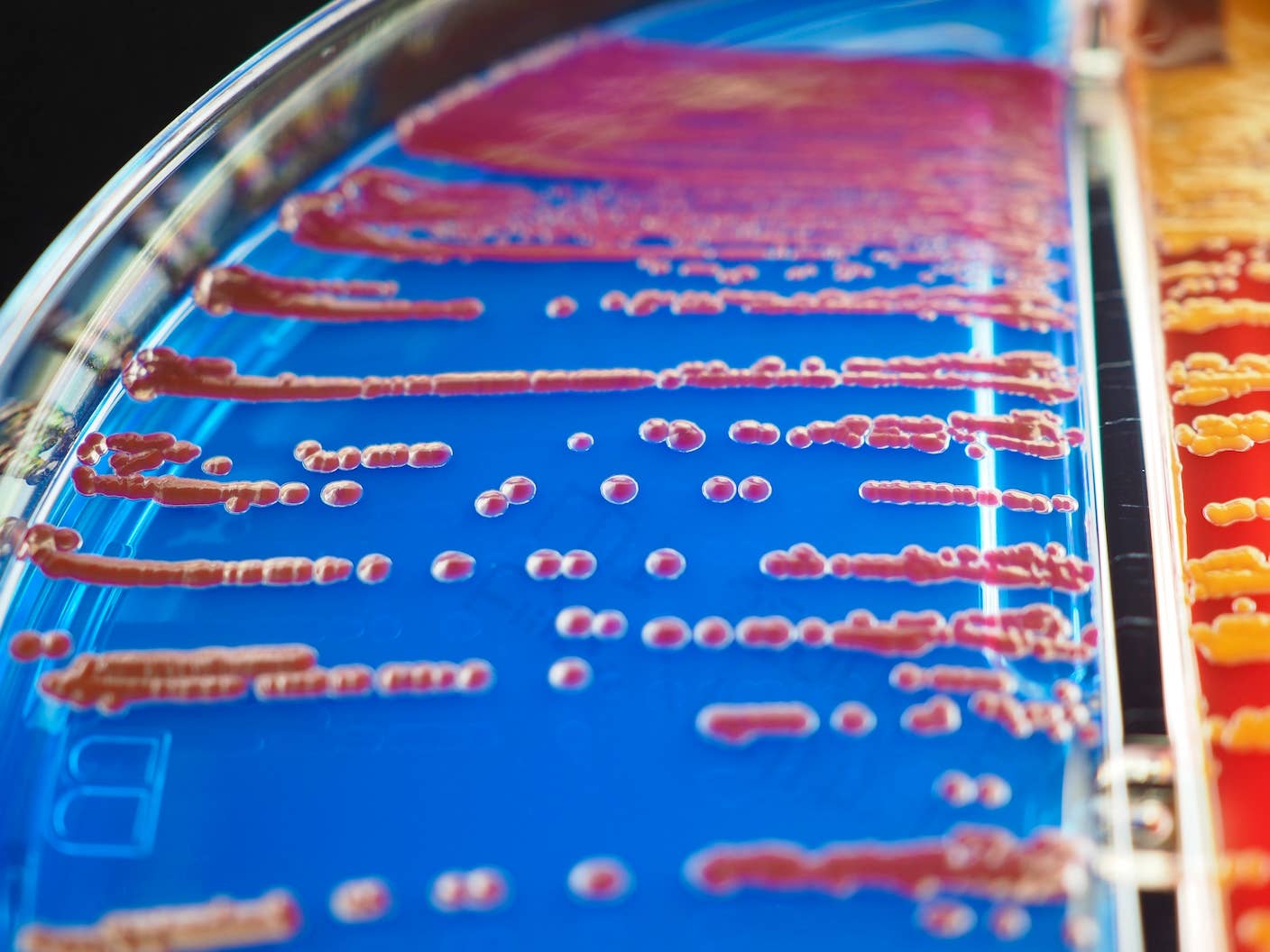

MIT Mined Bacteria for the Next CRISPR—and Found Hundreds of Potential New Tools

Biotechnology

How Fast Are You Aging? New Genetic Clock May Have the Answer

Shelly Fan

Photosynthetic Drops Soothe Dry Eyes With Sunlight

Shelly Fan

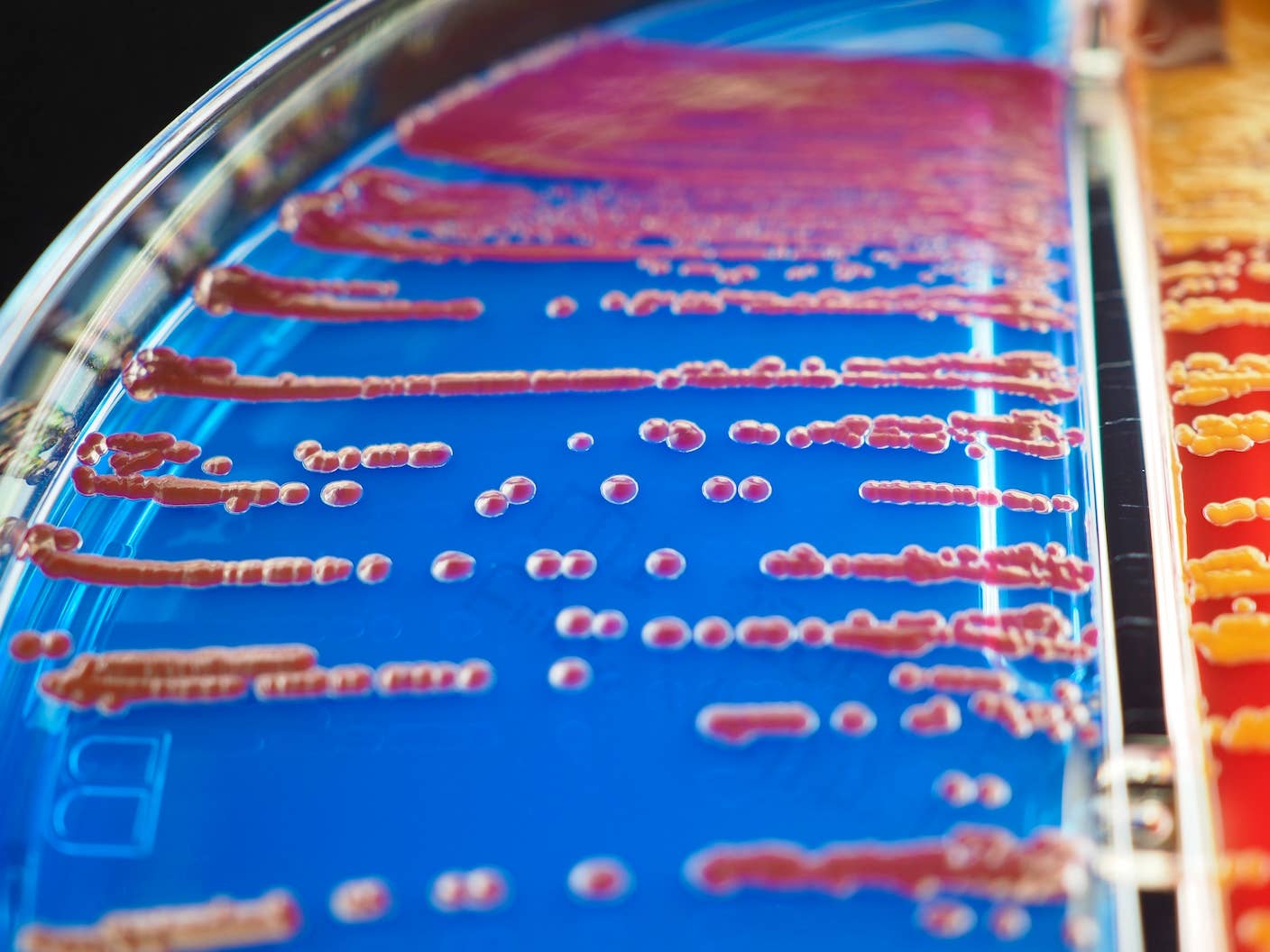

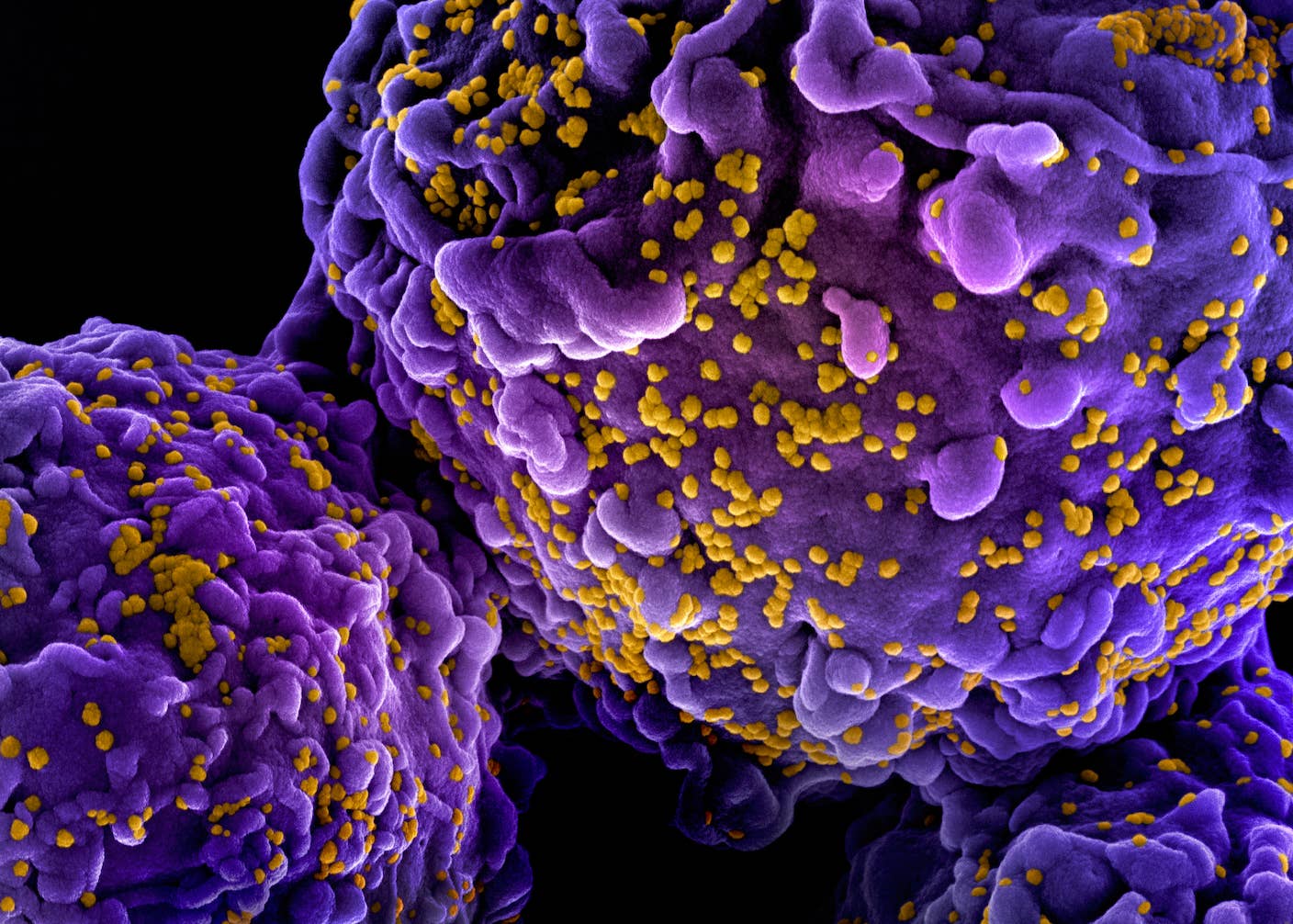

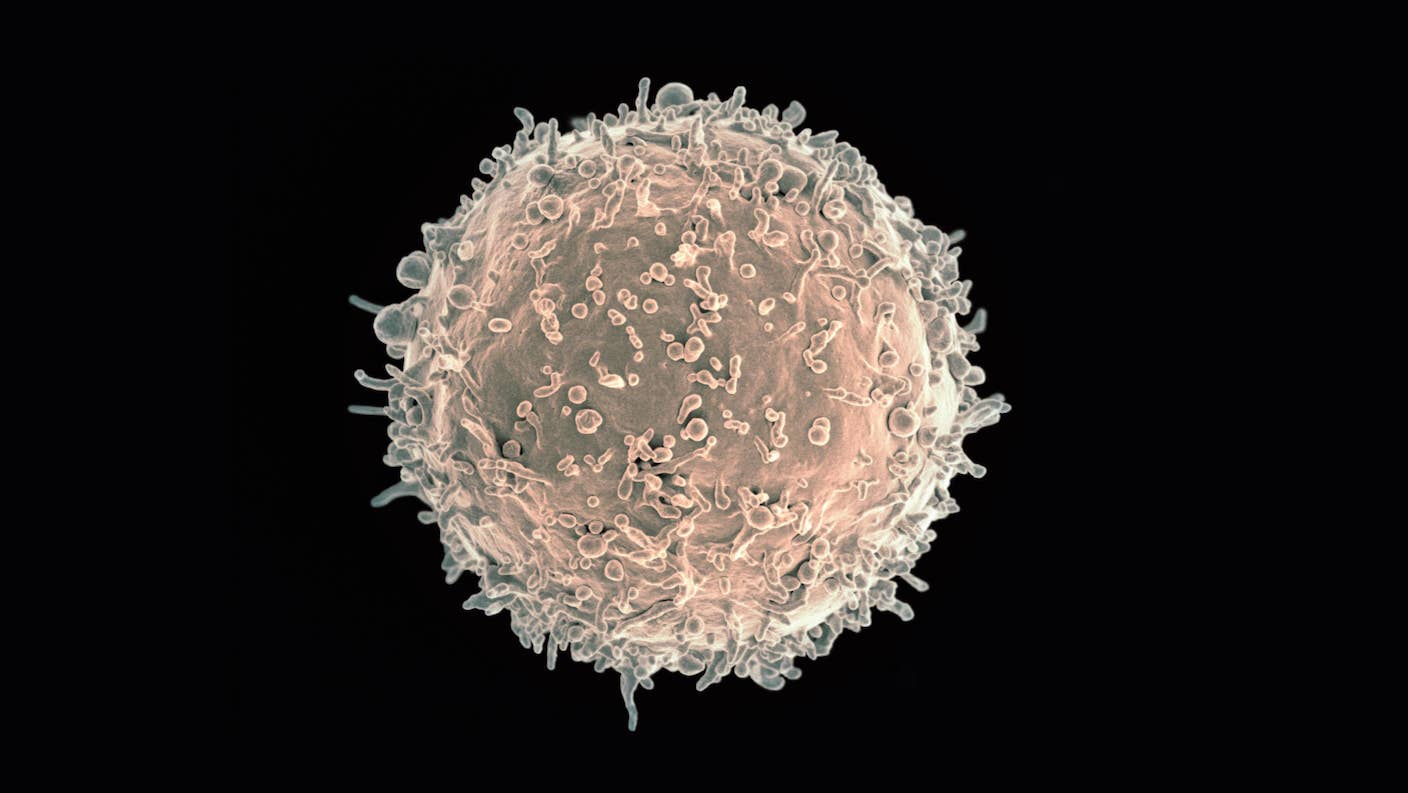

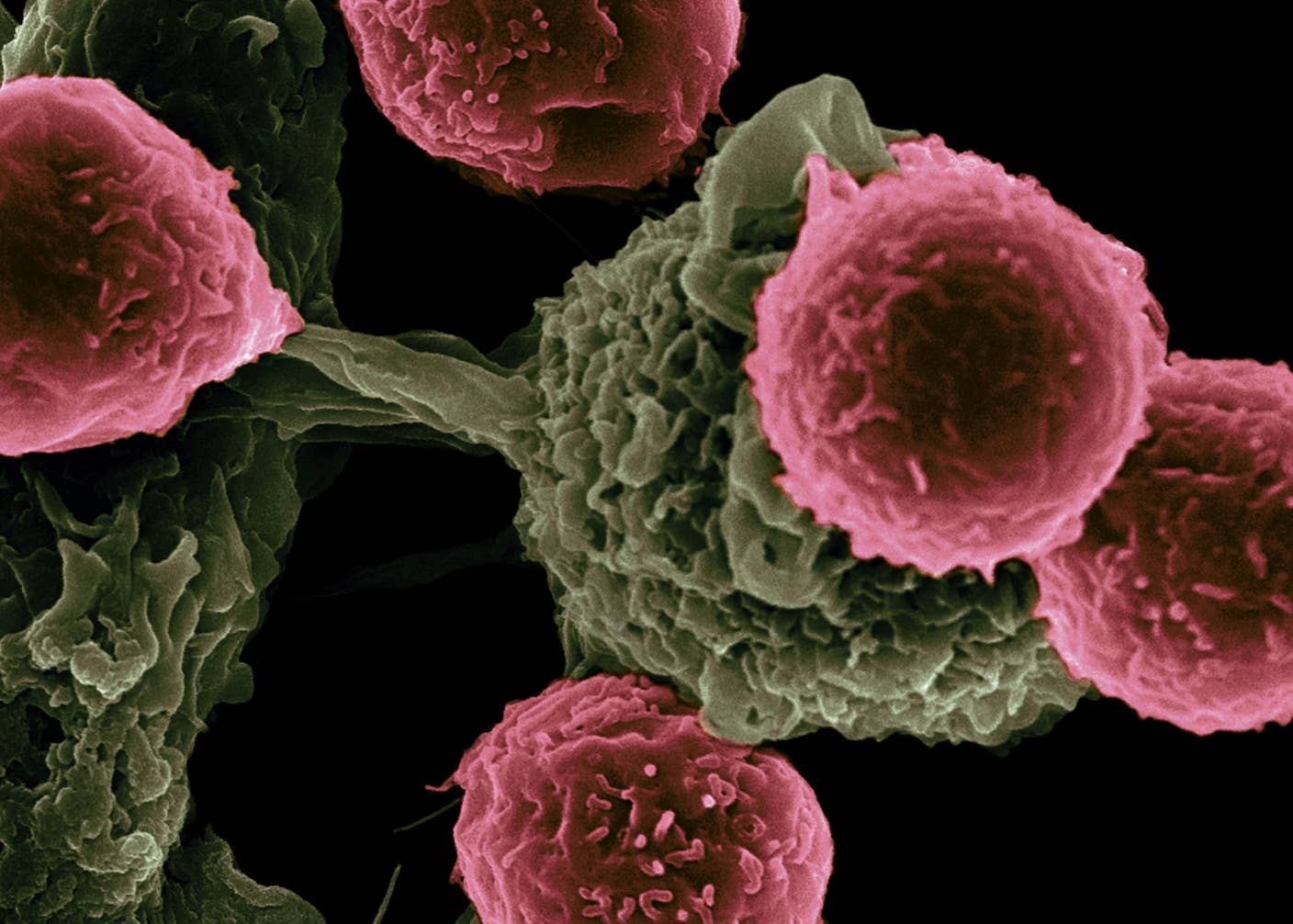

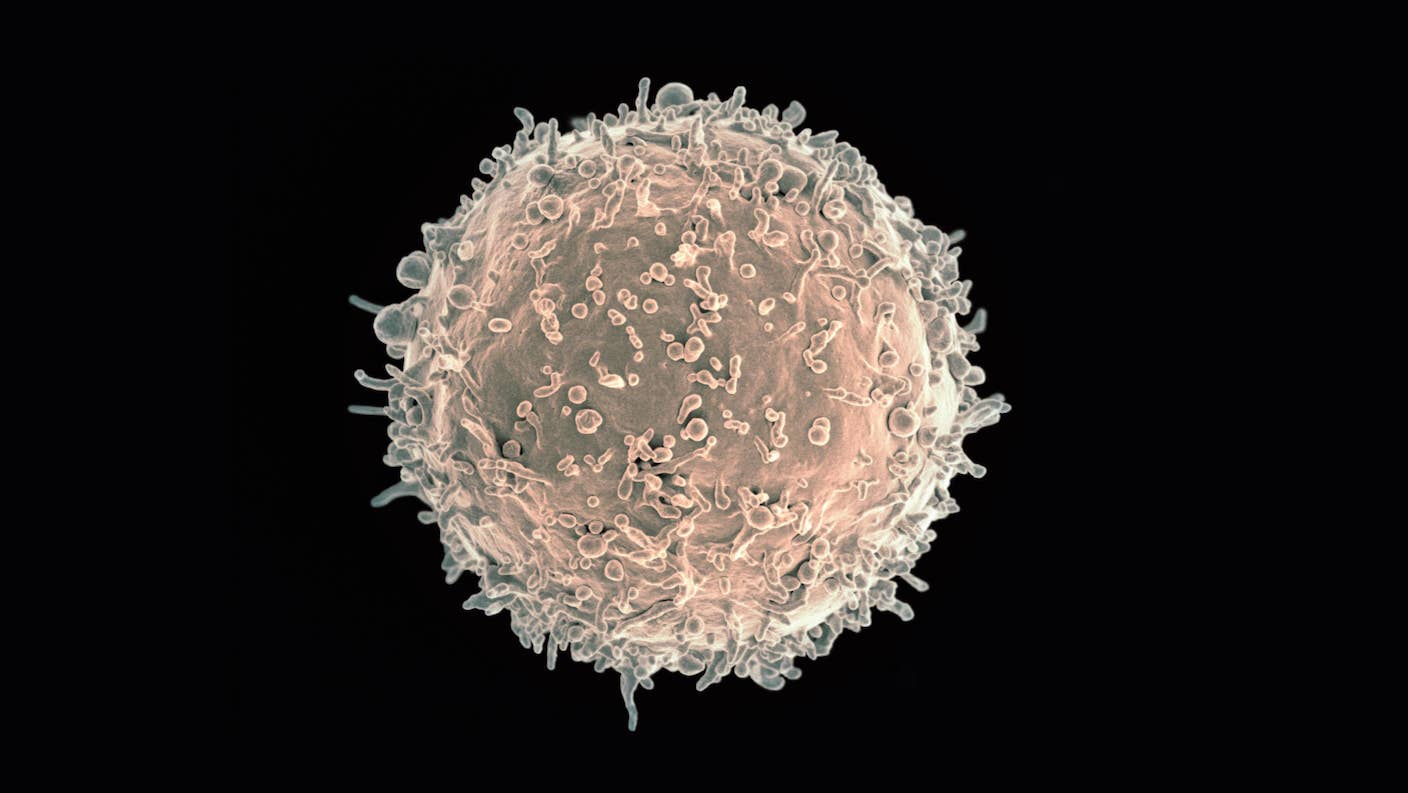

A Revolutionary Cancer Treatment Could Transform Autoimmune Disease

Amber Dance

Popular

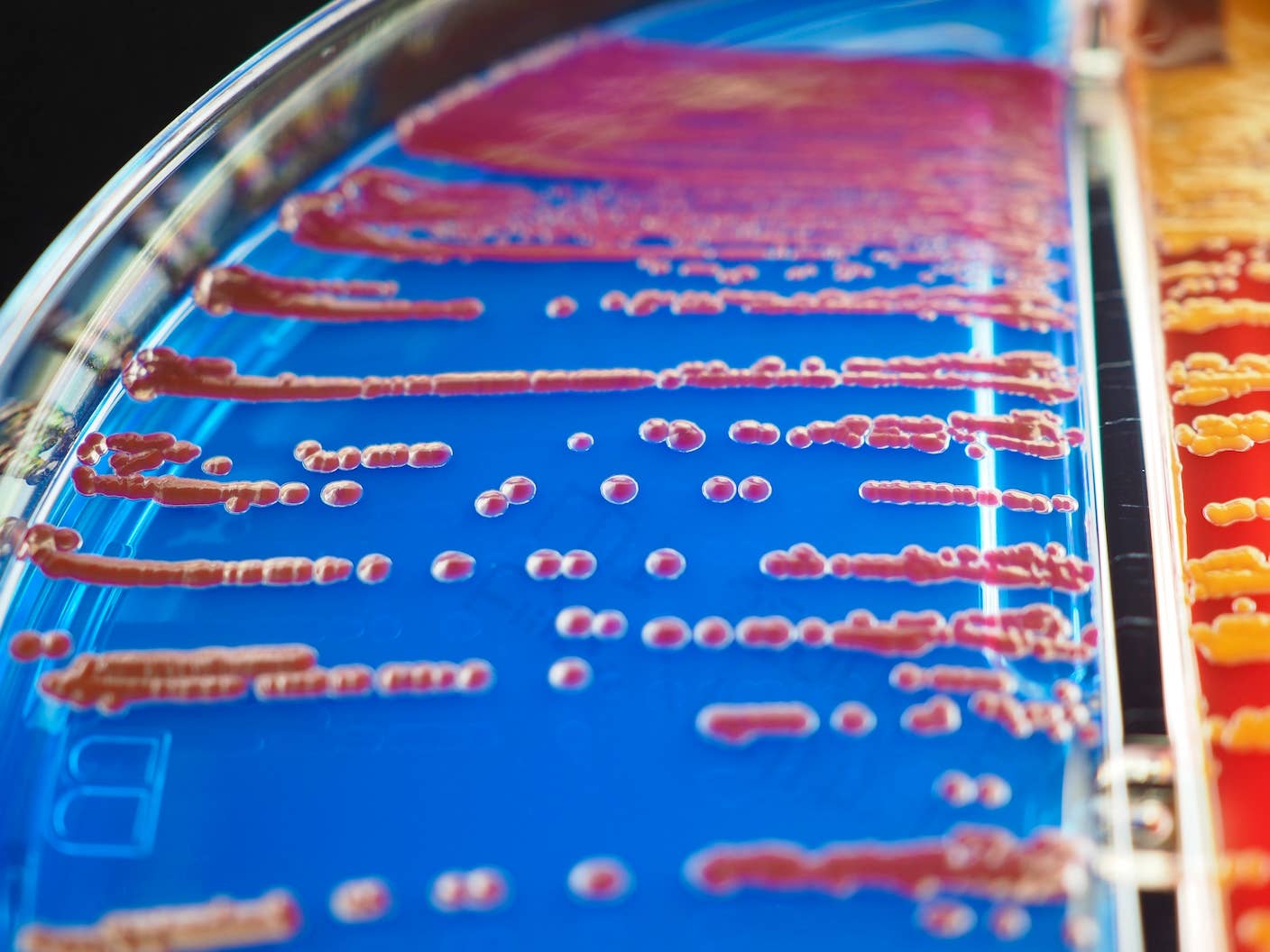

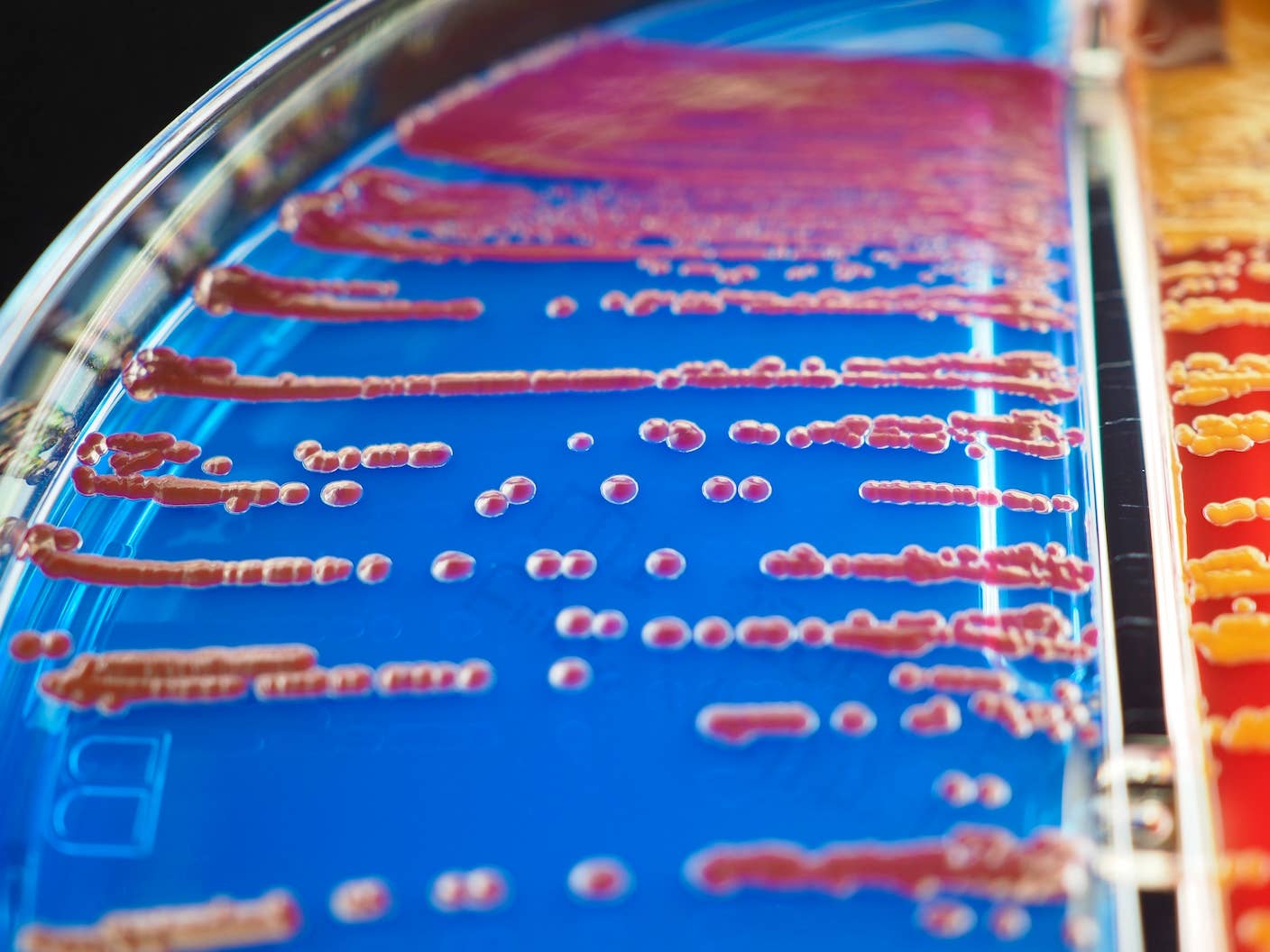

All Life Uses 20 Amino Acids. Scientists Just Deleted One in Bacteria.

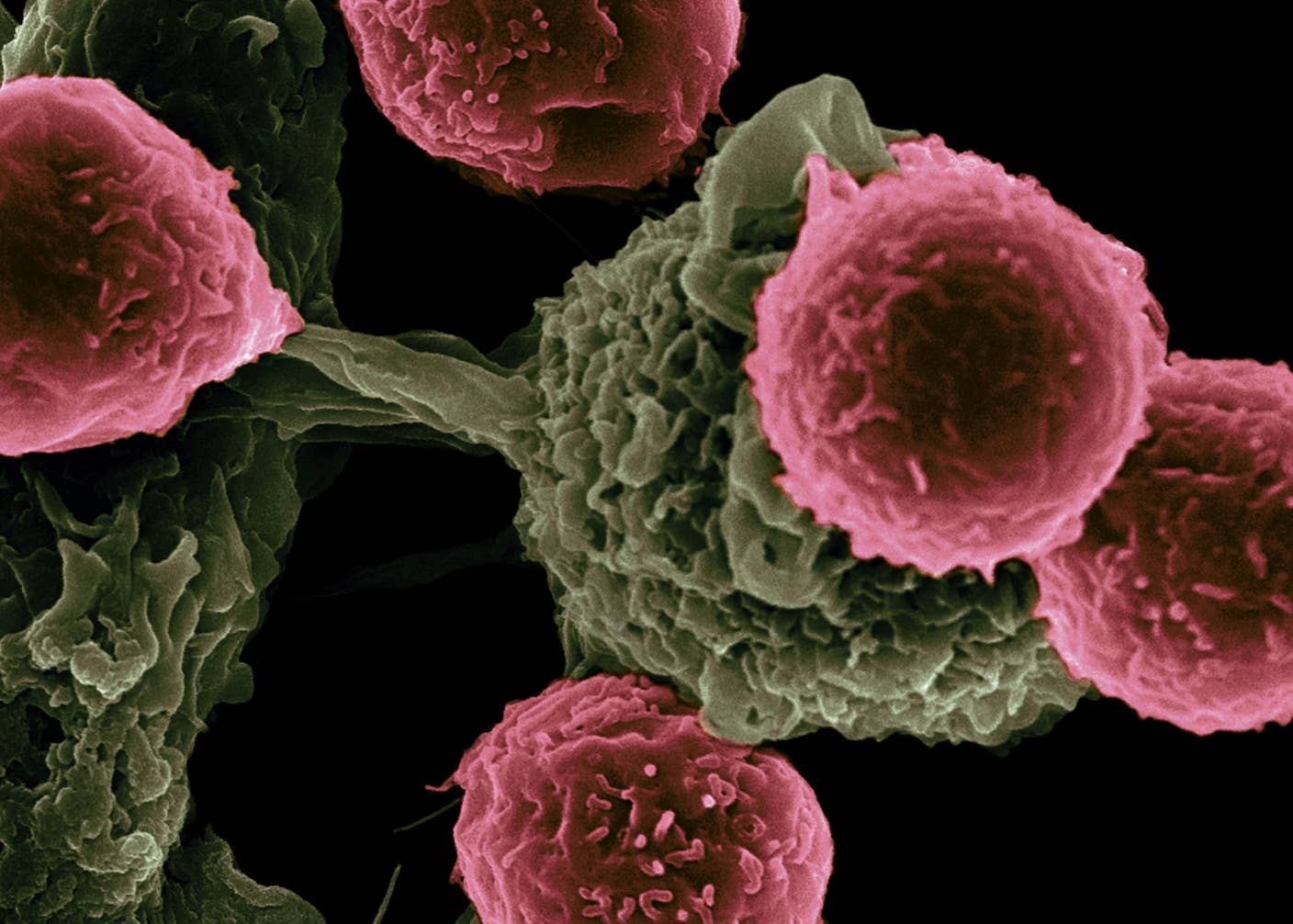

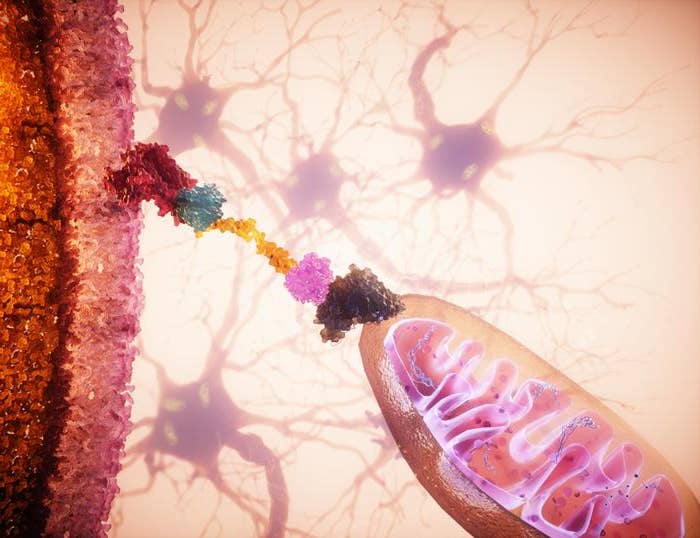

Scientists Revive Failing Cells With Mitochondria Transplants

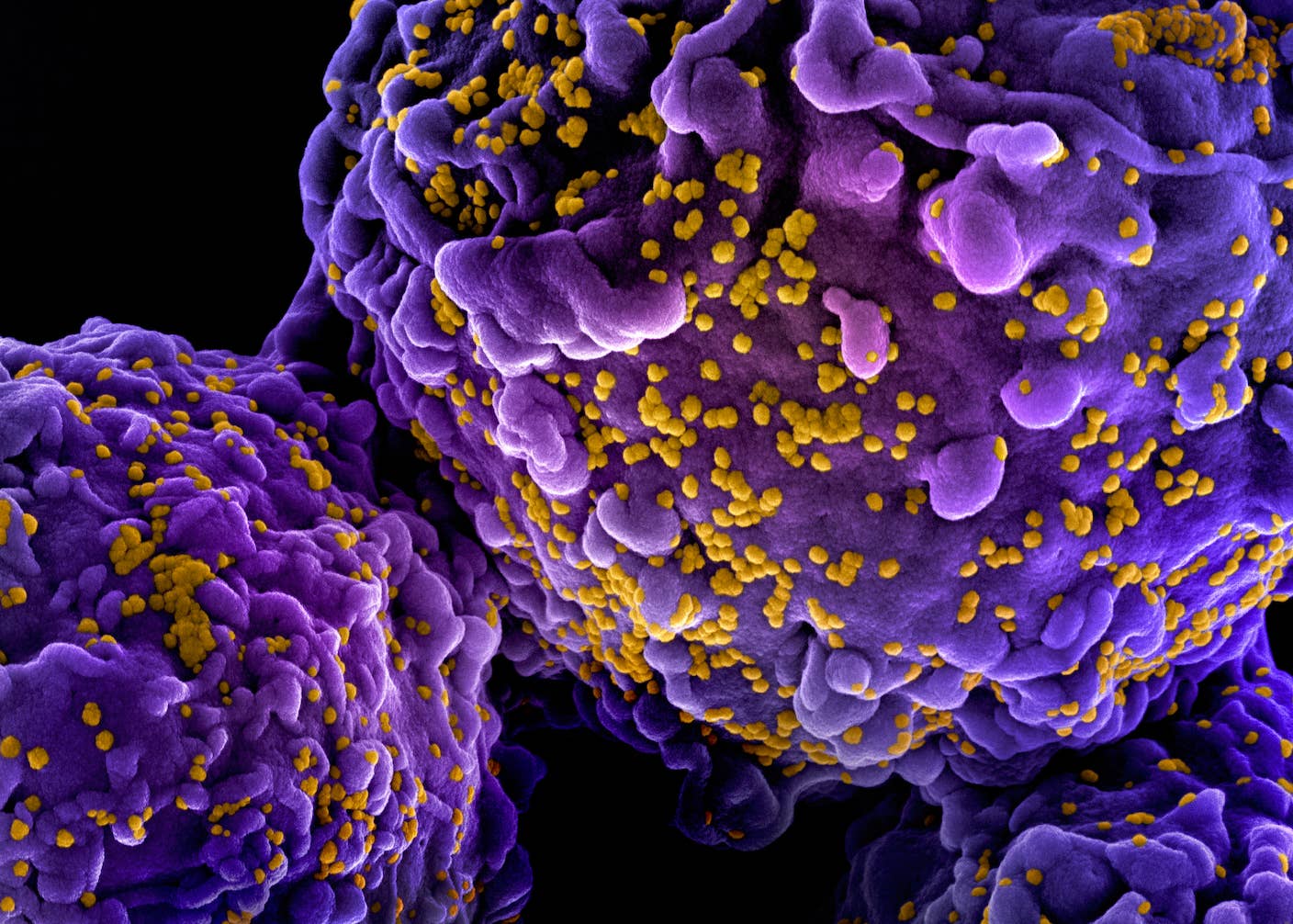

Norwegian Man Cured of HIV by His Brother’s Stem Cells

One Shot Just Crushed Three Deadly Autoimmune Diseases

Scientists Grow Electronics Inside the Brains of Living Mice

MIT Mined Bacteria for the Next CRISPR—and Found Hundreds of Potential New Tools

Computing

In the Scramble to Power AI, Investors Bet $140 Million on Data Centers at Sea

Edd Gent

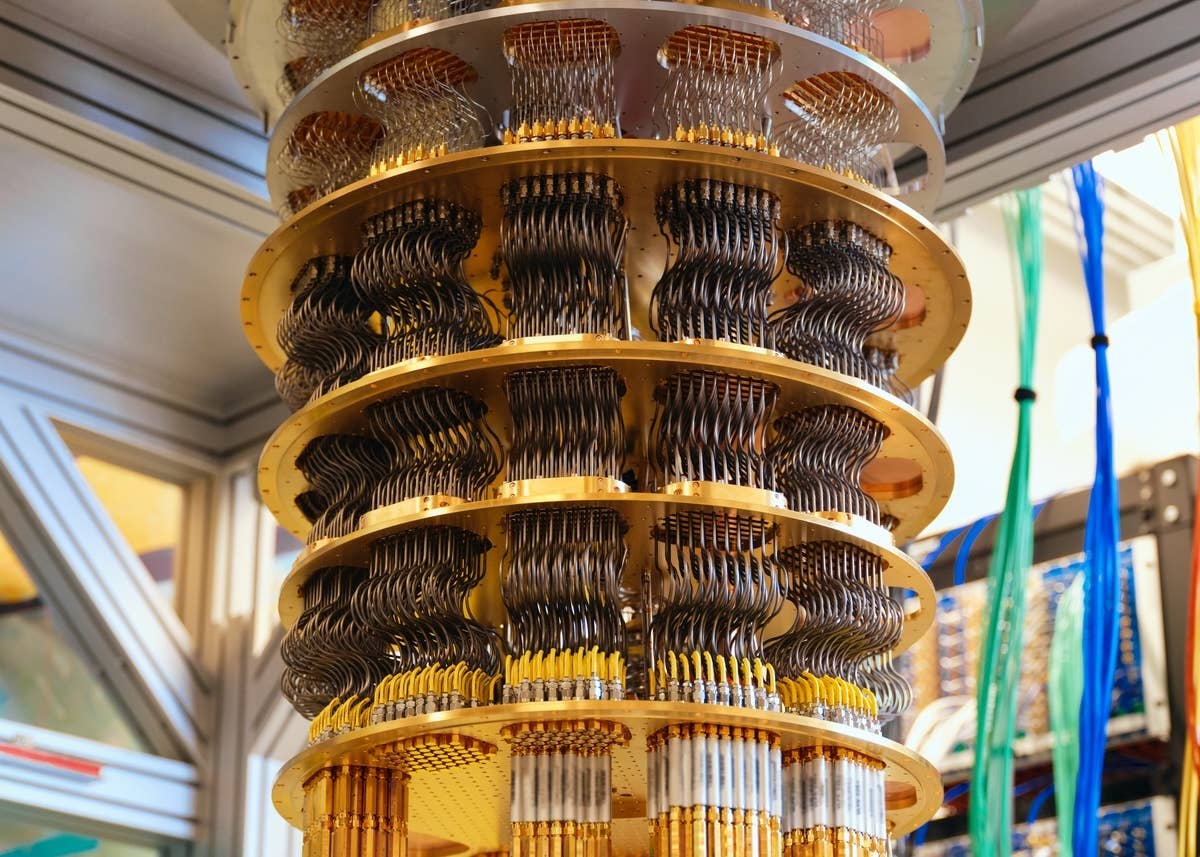

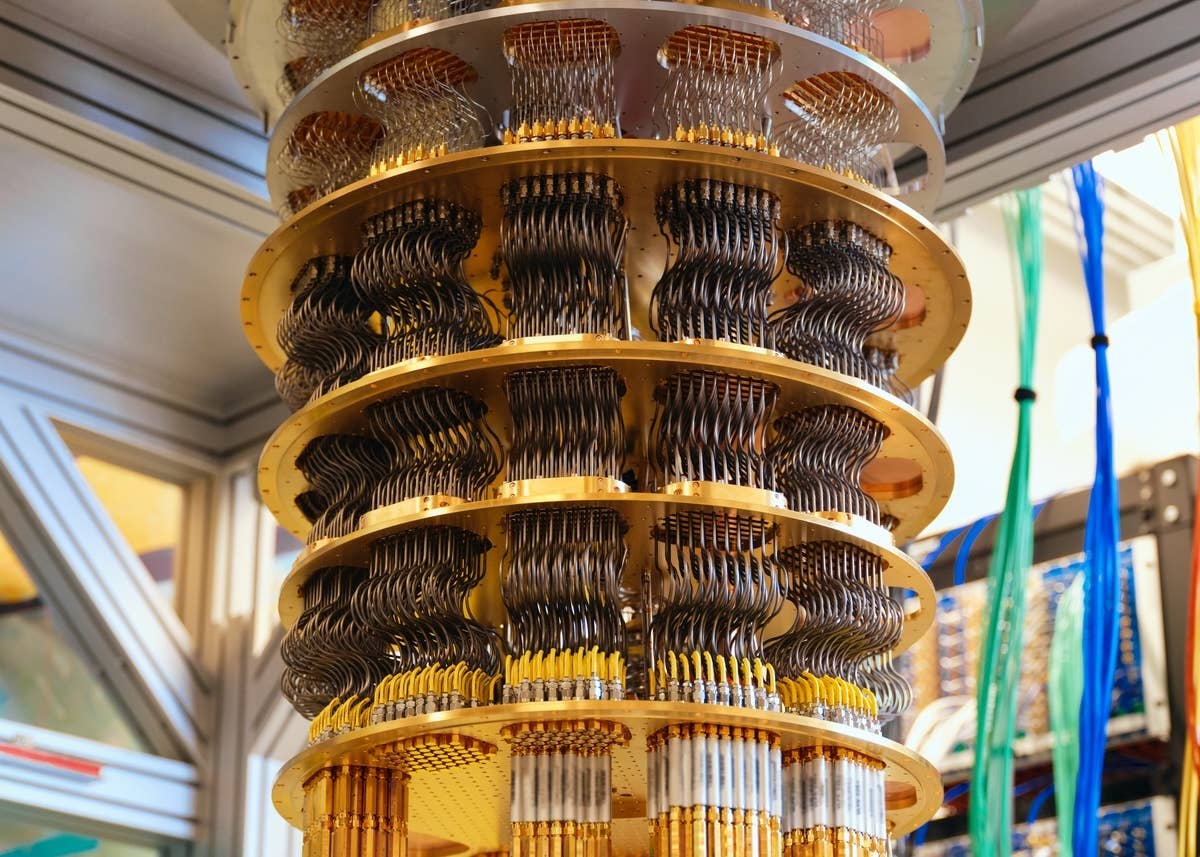

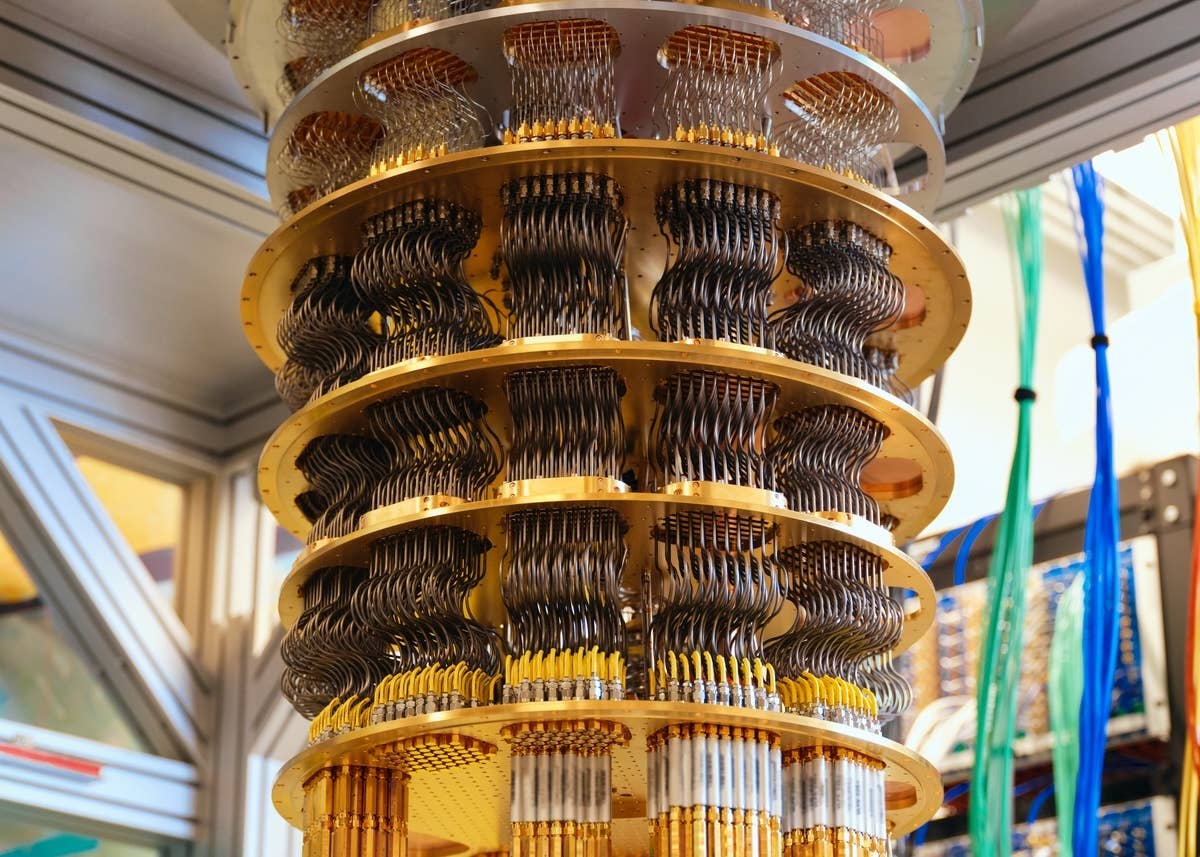

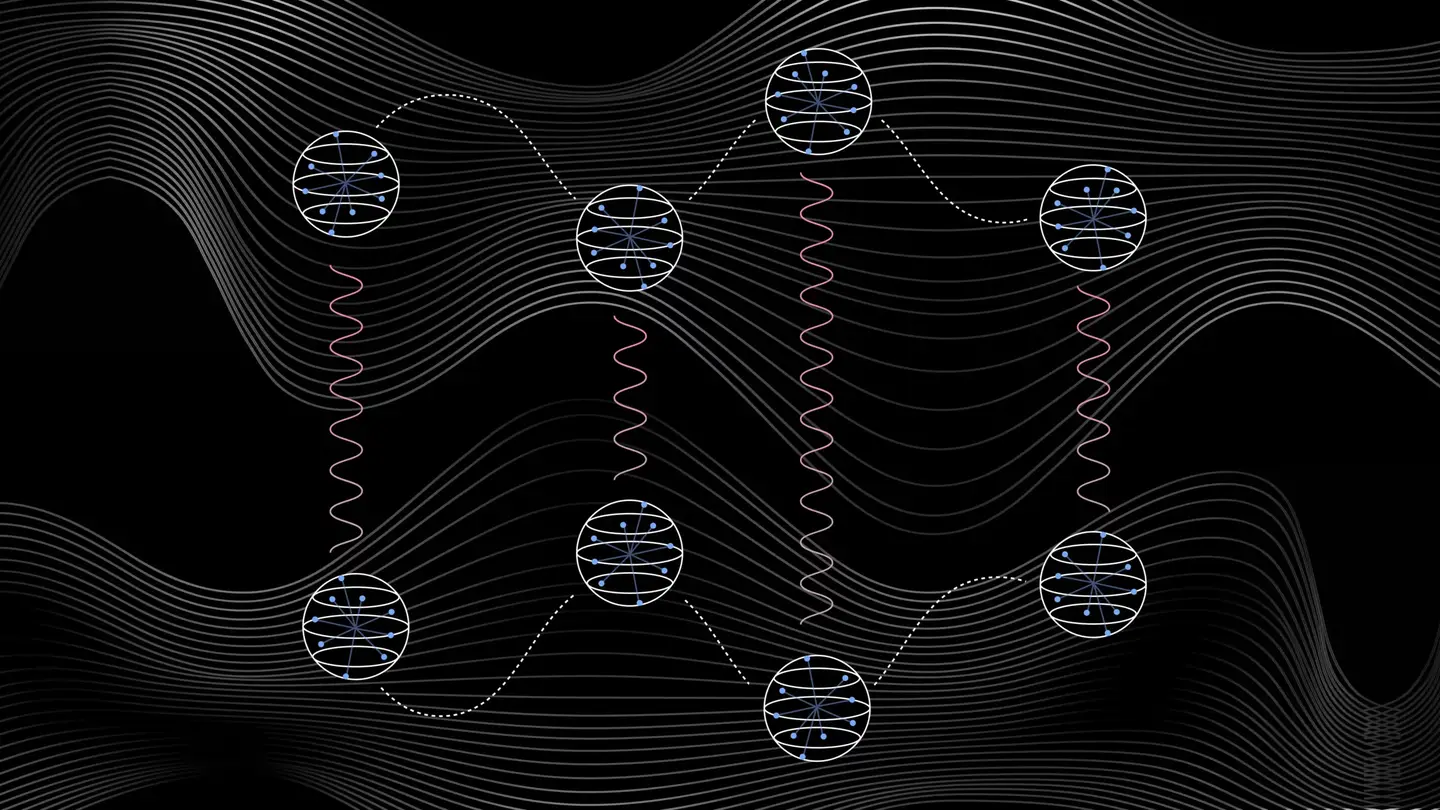

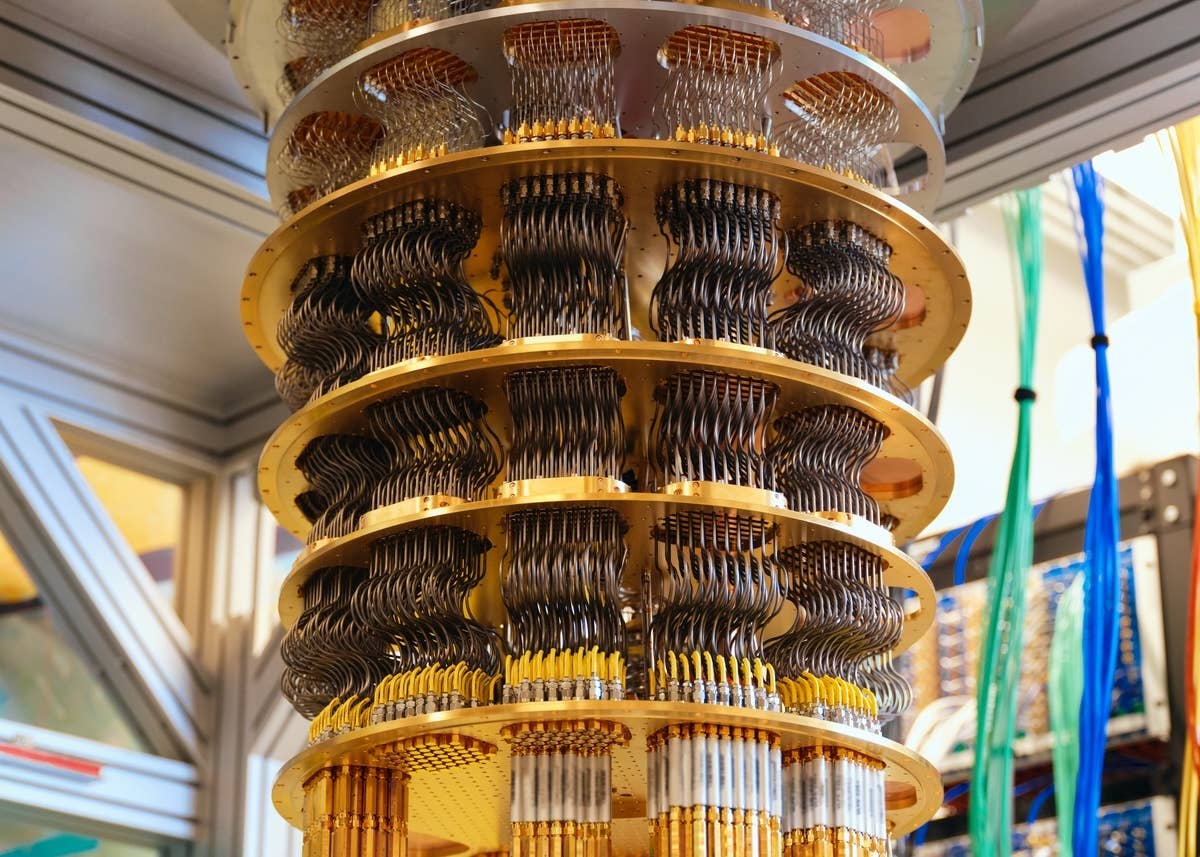

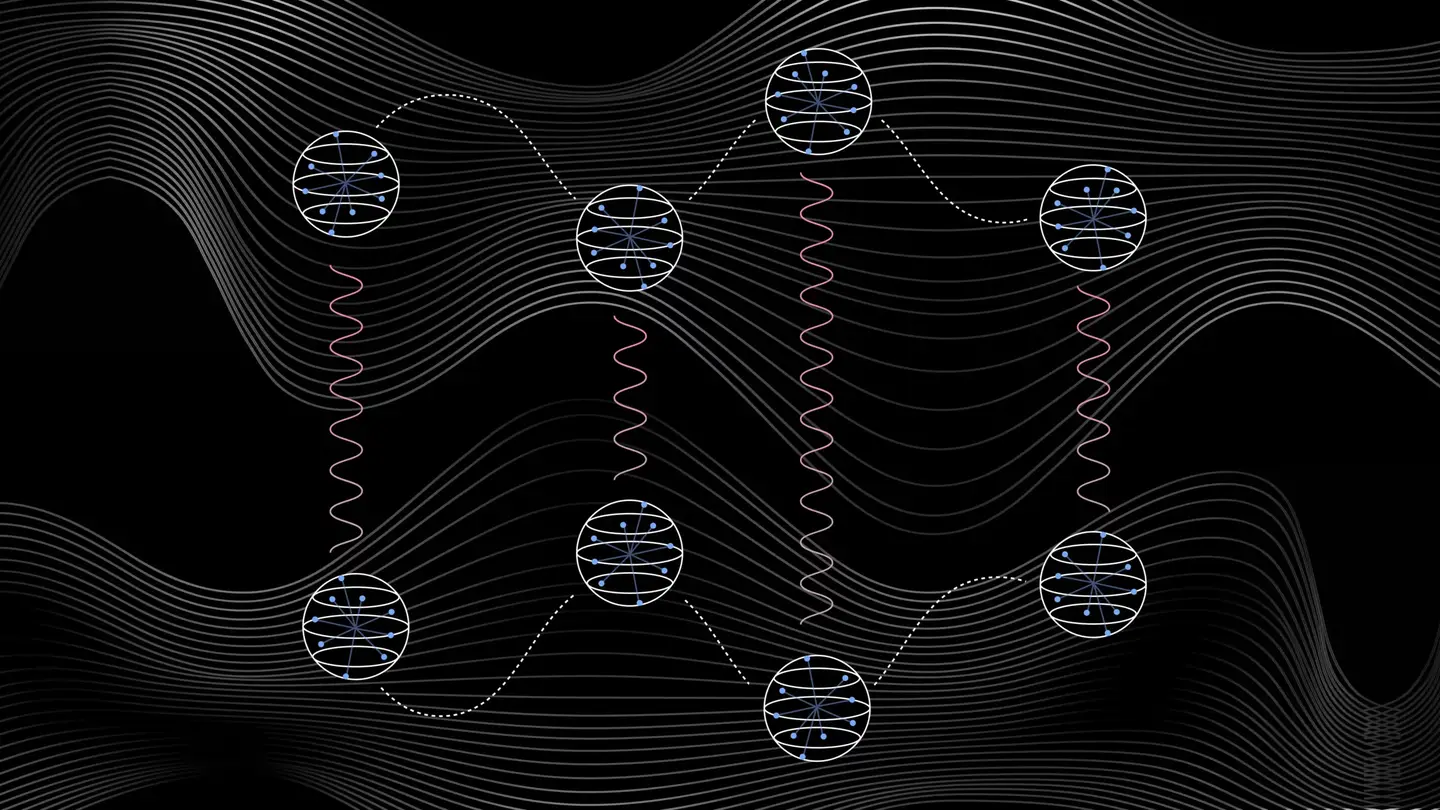

Quantum Computers Are Coming to Break Cryptography Faster Than Anyone Expected

Craig Costello

Printed Neurons That Mimic Brain Cells Could Slash AI’s Energy Bill

Edd Gent

Popular

Scientists Grow Electronics Inside the Brains of Living Mice

Anthropic’s Mythos AI Uncovered Serious Security Holes in Every Major OS and Browser

US Issues Grand Challenge: The First Fault-Tolerant Quantum Computer by 2028

These Mini Brains Just Learned to Solve a Classic Engineering Problem

Brain Implants Let Paralyzed People Type Nearly as Fast as Smartphone Users

Hackers Are Automating Cyberattacks With AI. Defenders Are Using It to Fight Back.

Space

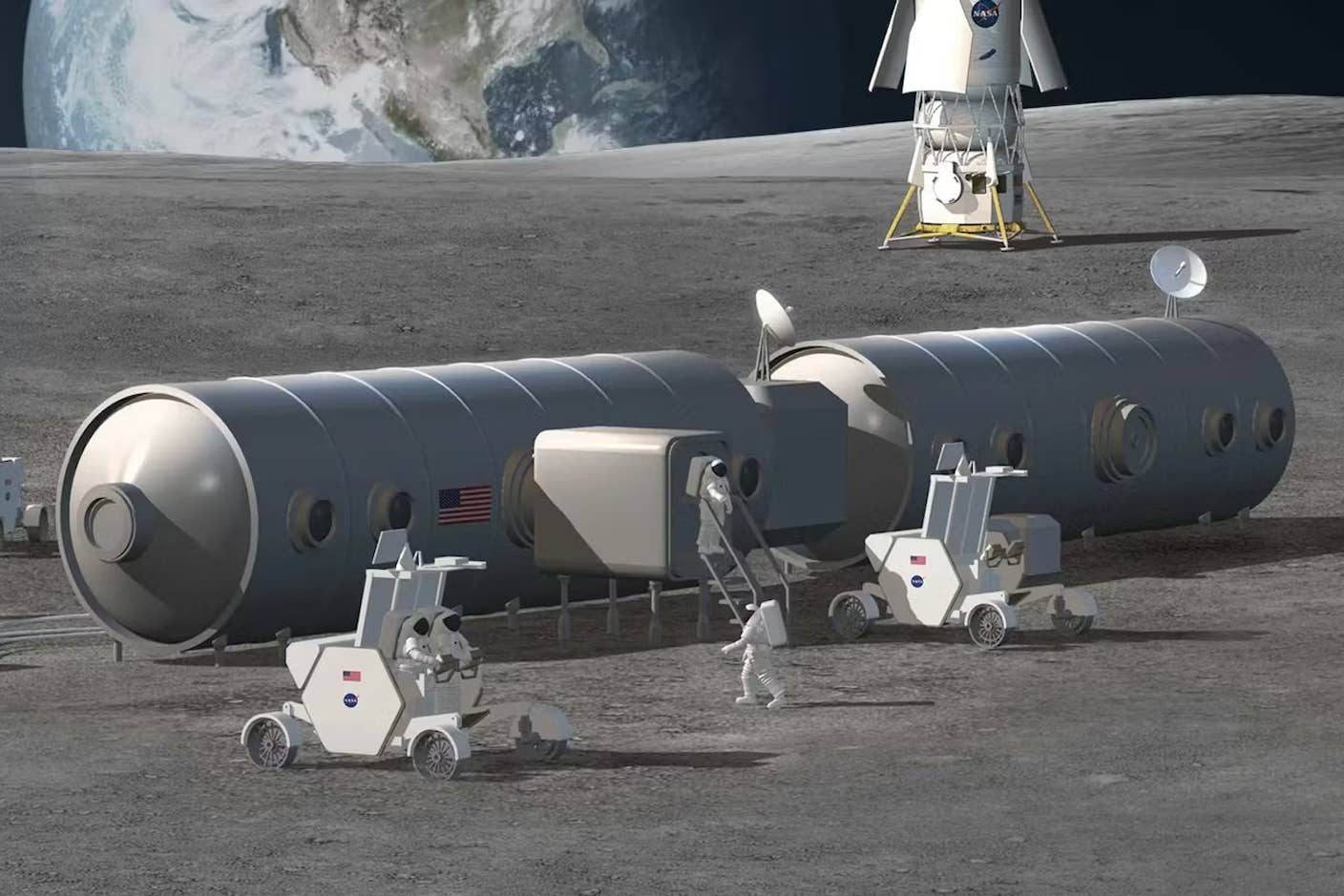

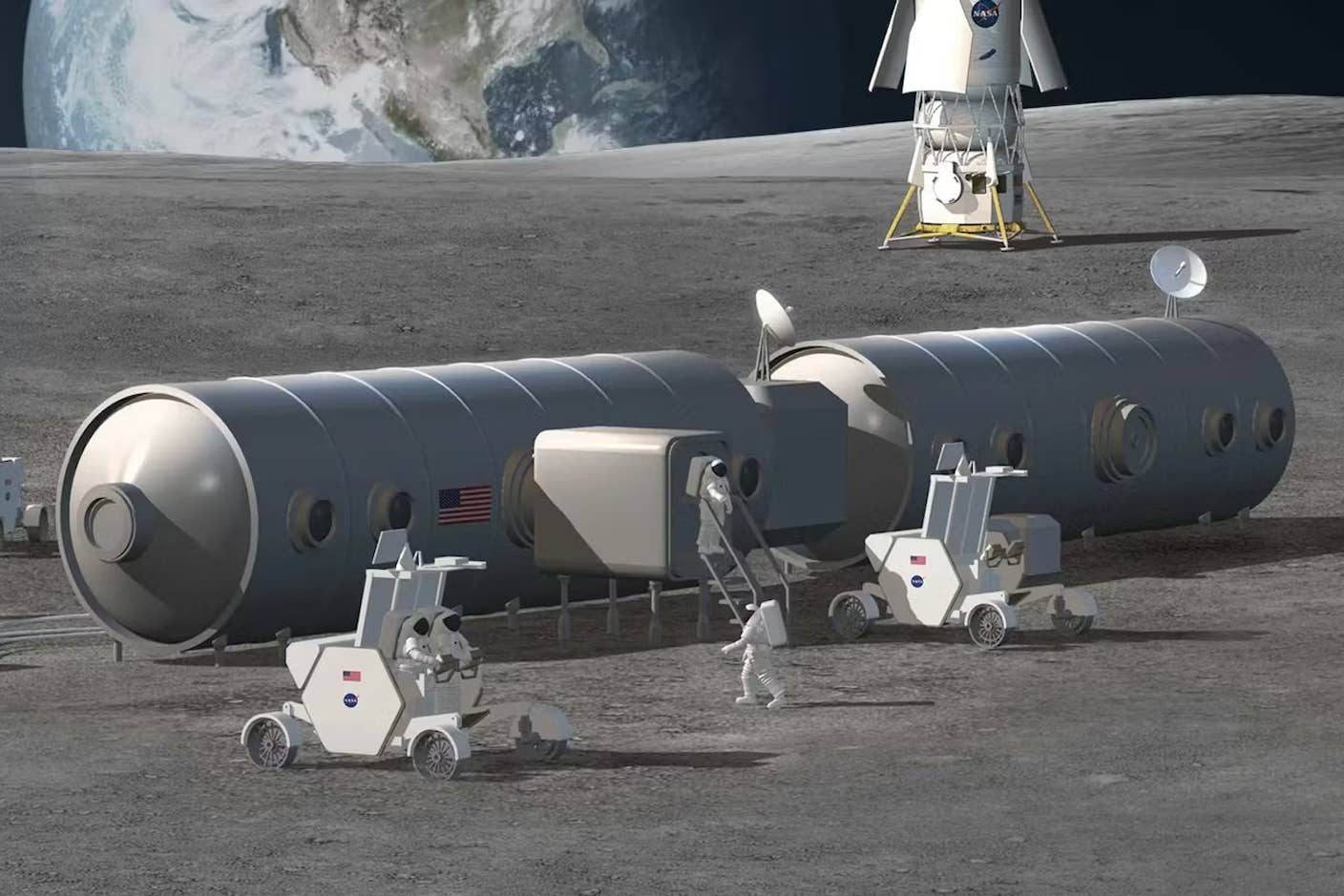

NASA Unveils Its $20 Billion Moon Base Plan—and a Nuclear Spacecraft for Mars

Edd Gent

The US Plans to Break Ground on a Permanent Moon Base by 2030. Here’s What It Will Take.

Fiona Henderson and Kevin Olsen

More Space Junk Is Plummeting to Earth. Earthquake Sensors Can Track It by the Sonic Booms.

Shelly Fan

Popular

Elon Musk Says SpaceX Is Pivoting From Mars to the Moon

What If We’re All Martians? The Intriguing Idea That Life on Earth Began on the Red Planet

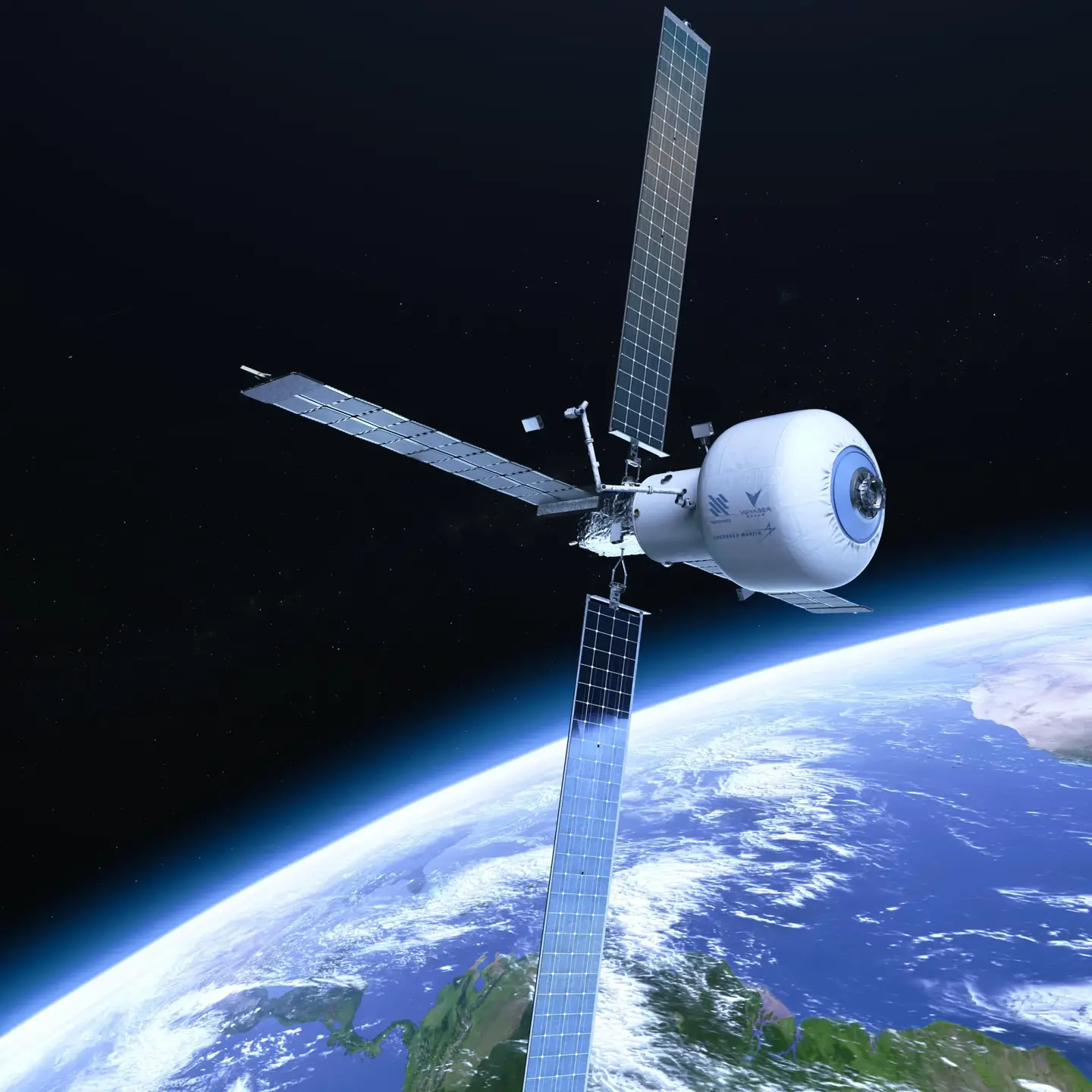

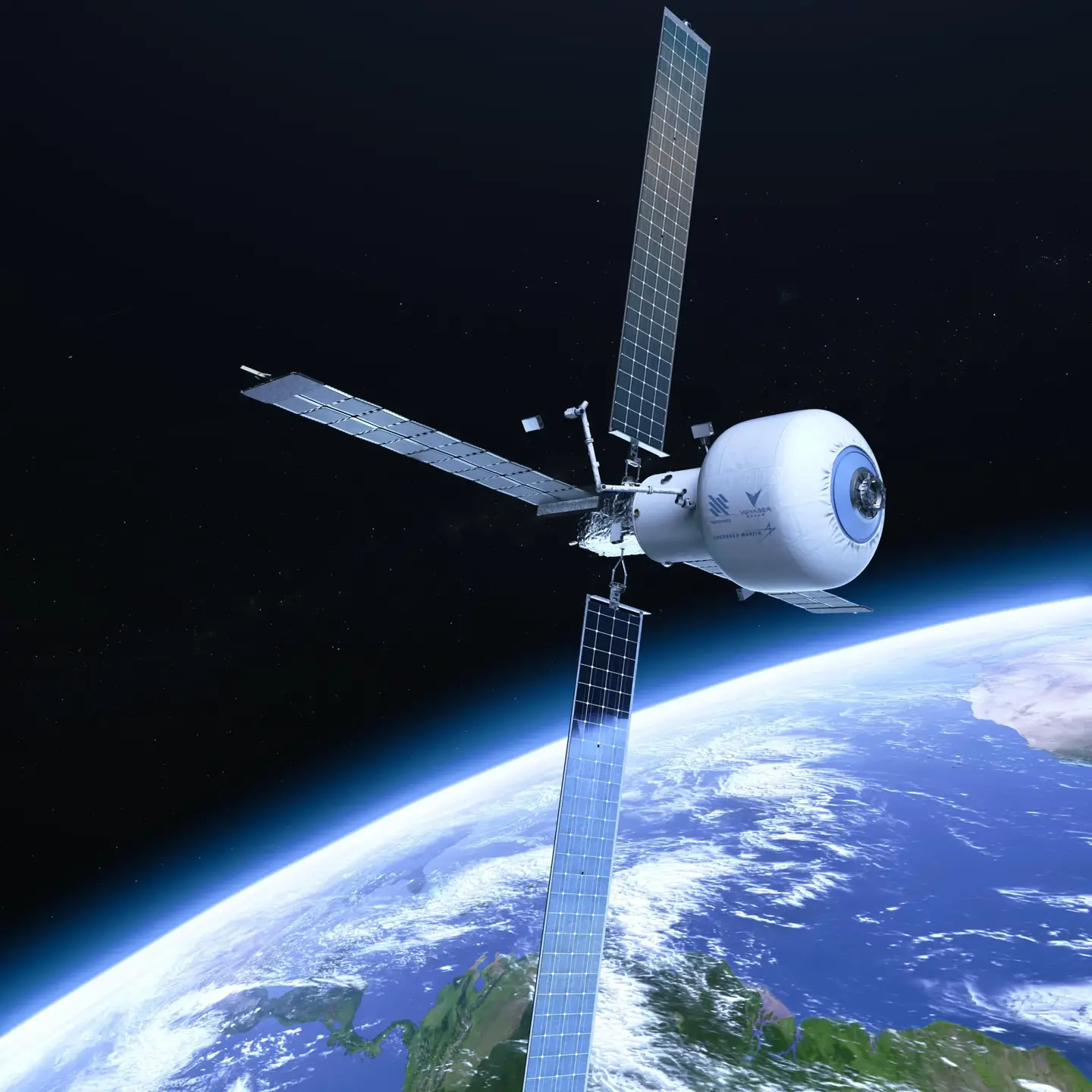

The Era of Private Space Stations Launches in 2026

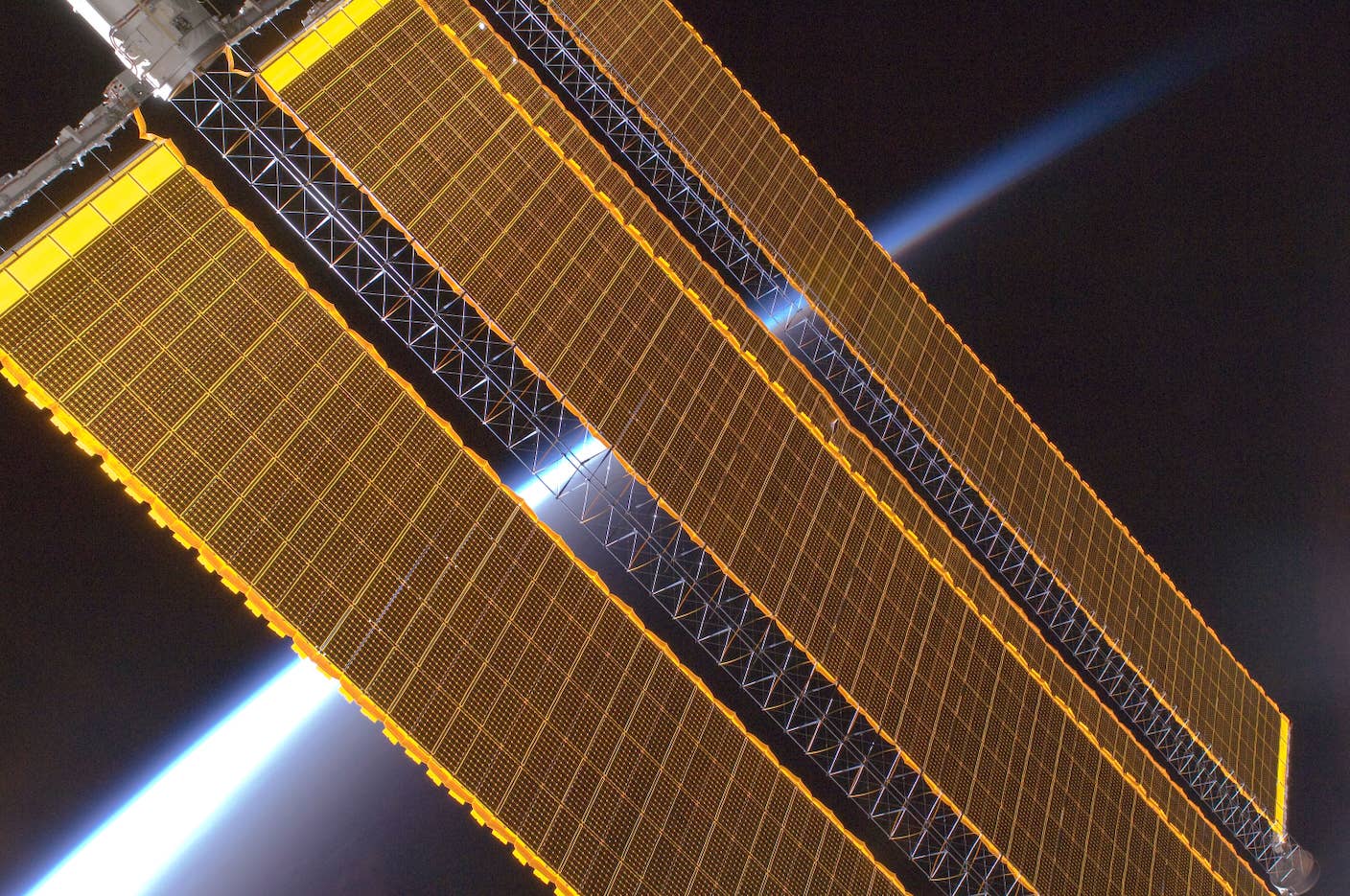

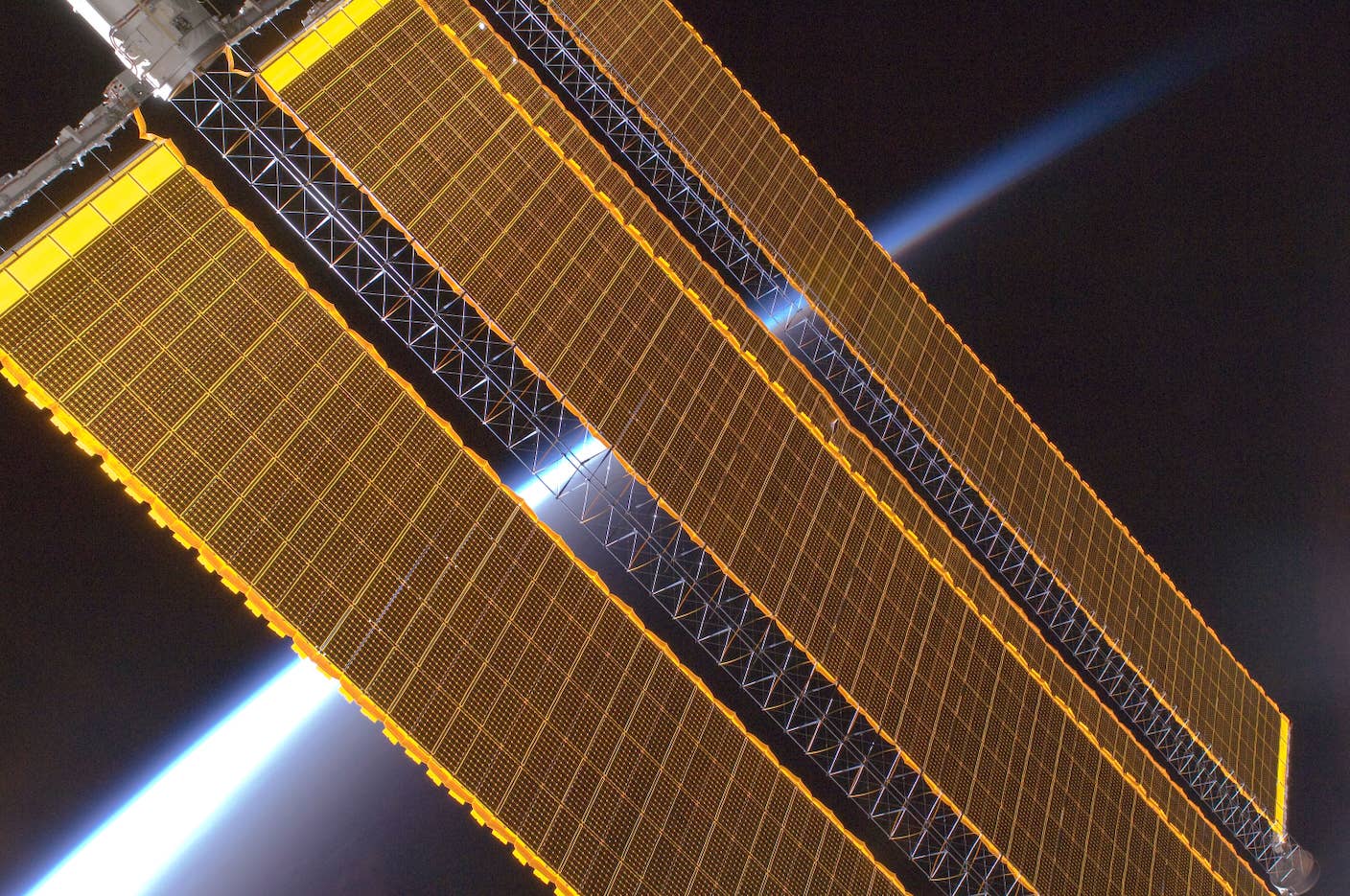

Data Centers in Space: Will 2027 Really Be the Year AI Goes to Orbit?

Scientists Say We Need a Circular Space Economy to Avoid Trashing Orbit

New Images Reveal the Milky Way’s Stunning Galactic Plane in More Detail Than Ever Before

Future

Data Centers Now Consume 6% of US Electricity—and the Backlash Has Begun

Edd Gent

You Probably Wouldn’t Notice if a Chatbot Slipped Ads Into Its Responses

Brian Jay Tang and Kang G. Shin

Quantum Computers Are Coming to Break Cryptography Faster Than Anyone Expected

Craig Costello

Popular

Industries Most Exposed to AI Are Not Only Seeing Productivity Gains but Jobs and Wage Growth Too

US Issues Grand Challenge: The First Fault-Tolerant Quantum Computer by 2028

Five Ways Quantum Technology Could Shape Everyday Life

Chatbots ‘Optimized to Please’ Make Us Less Likely to Admit When We’re Wrong

Google DeepMind Plans to Track AGI Progress With These 10 Traits of General Intelligence

Tech Companies Are Blaming Massive Layoffs on AI. What’s Really Going On?

Tech

These Seven AI Rings Translate Sign Language in Real Time

Shelly Fan

AI Companies Are Betting Billions on AI Scaling Laws. Will Their Wager Pay Off?

Nathan Garland

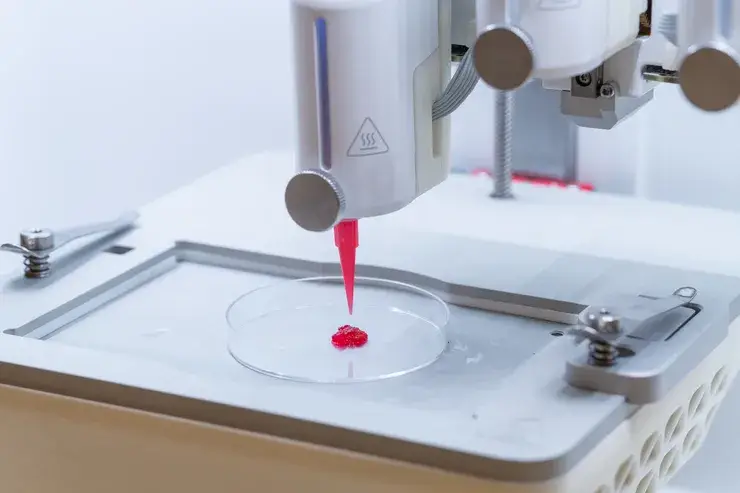

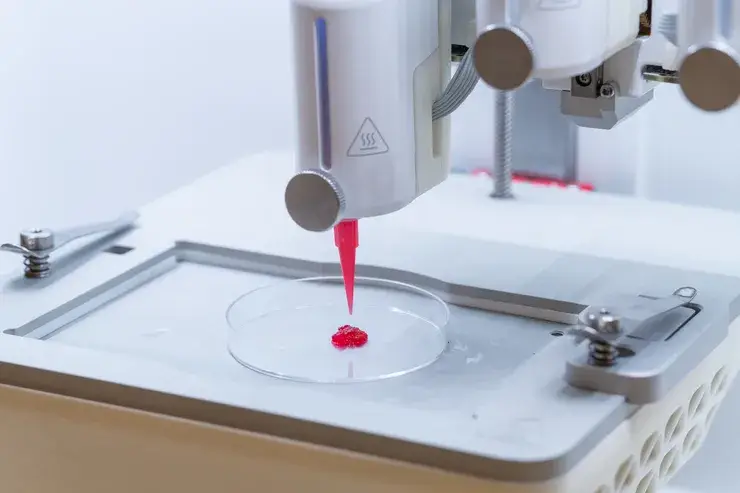

Super Precise 3D Printer Uses a Mosquito’s Needle-Like Mouth as a Nozzle

Shelly Fan

Popular

Is the AI Bubble About to Burst? What to Watch for as the Markets Wobble

OpenAI Slipped Shopping Into 800 Million ChatGPT Users’ Chats—Here’s Why That Matters

Scientists Hope 3D-Printed Skin Can Bring On-Demand Treatment for Serious Injuries

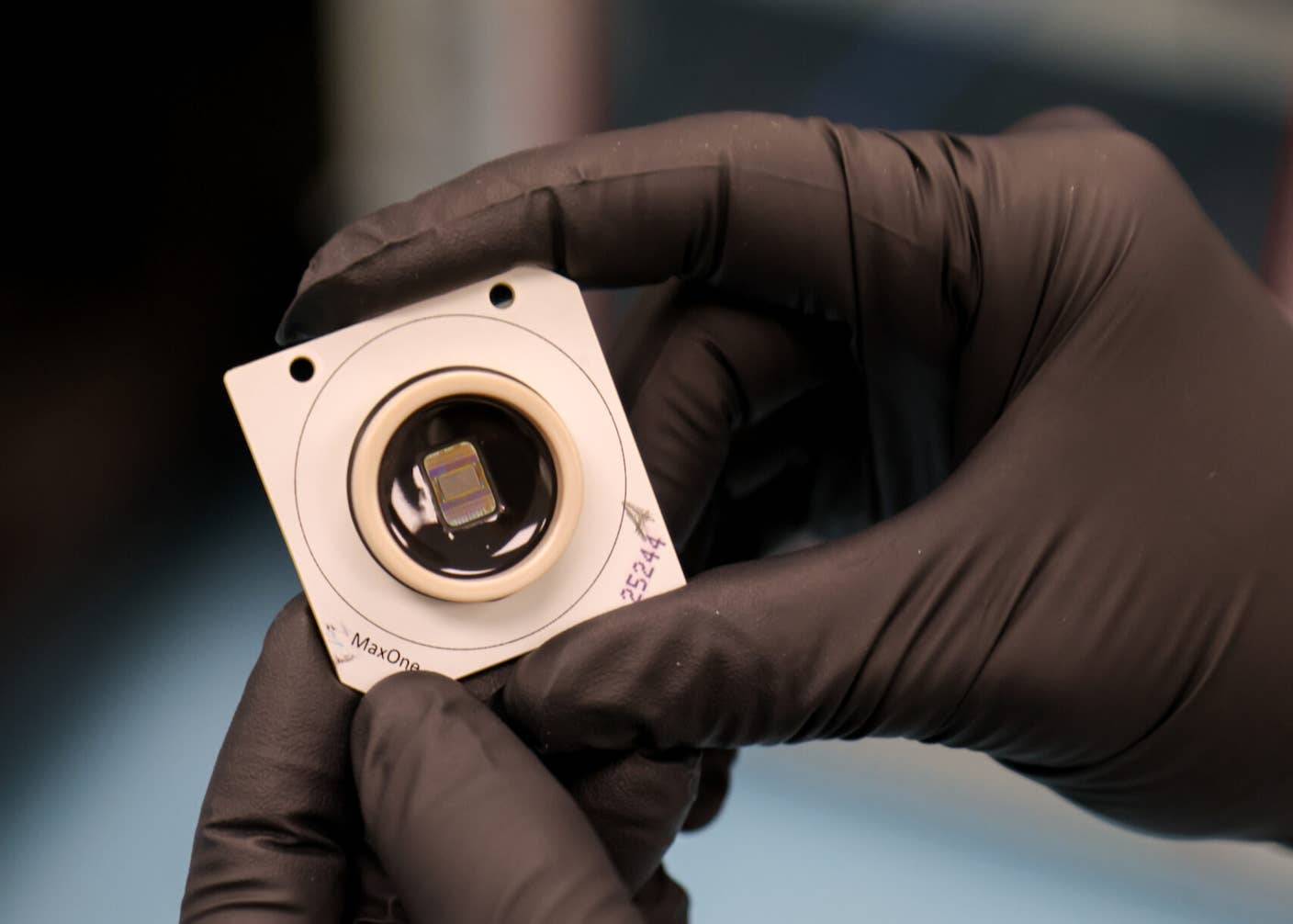

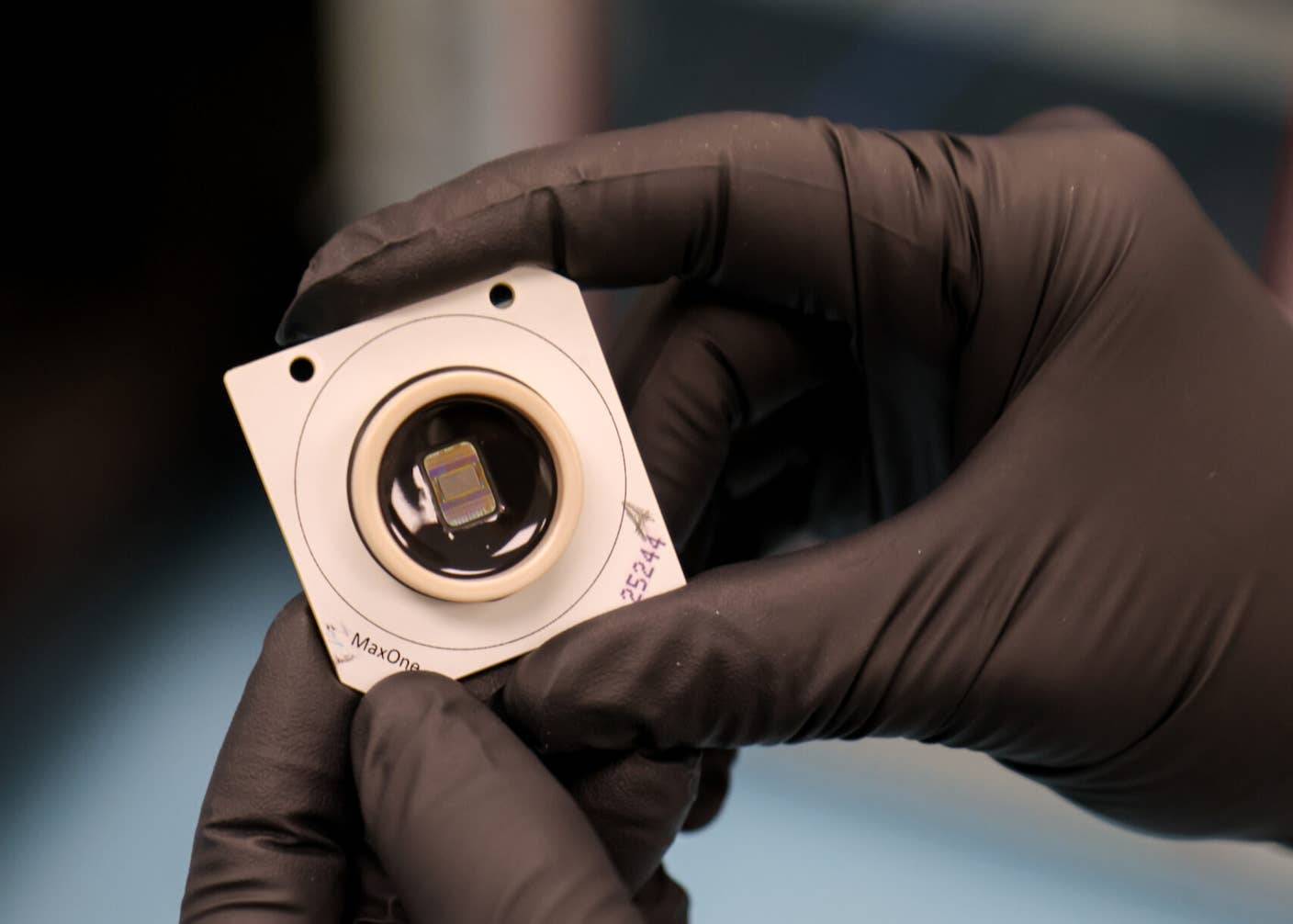

Scientists Just Made ‘Biological Qubits’ That Act as Quantum Sensors Inside Cells

Could Electric Brain Stimulation Make You Better at Math?

Stratolaunch’s Hypersonic Plane Breaks Mach 5 and Lands Without a Pilot

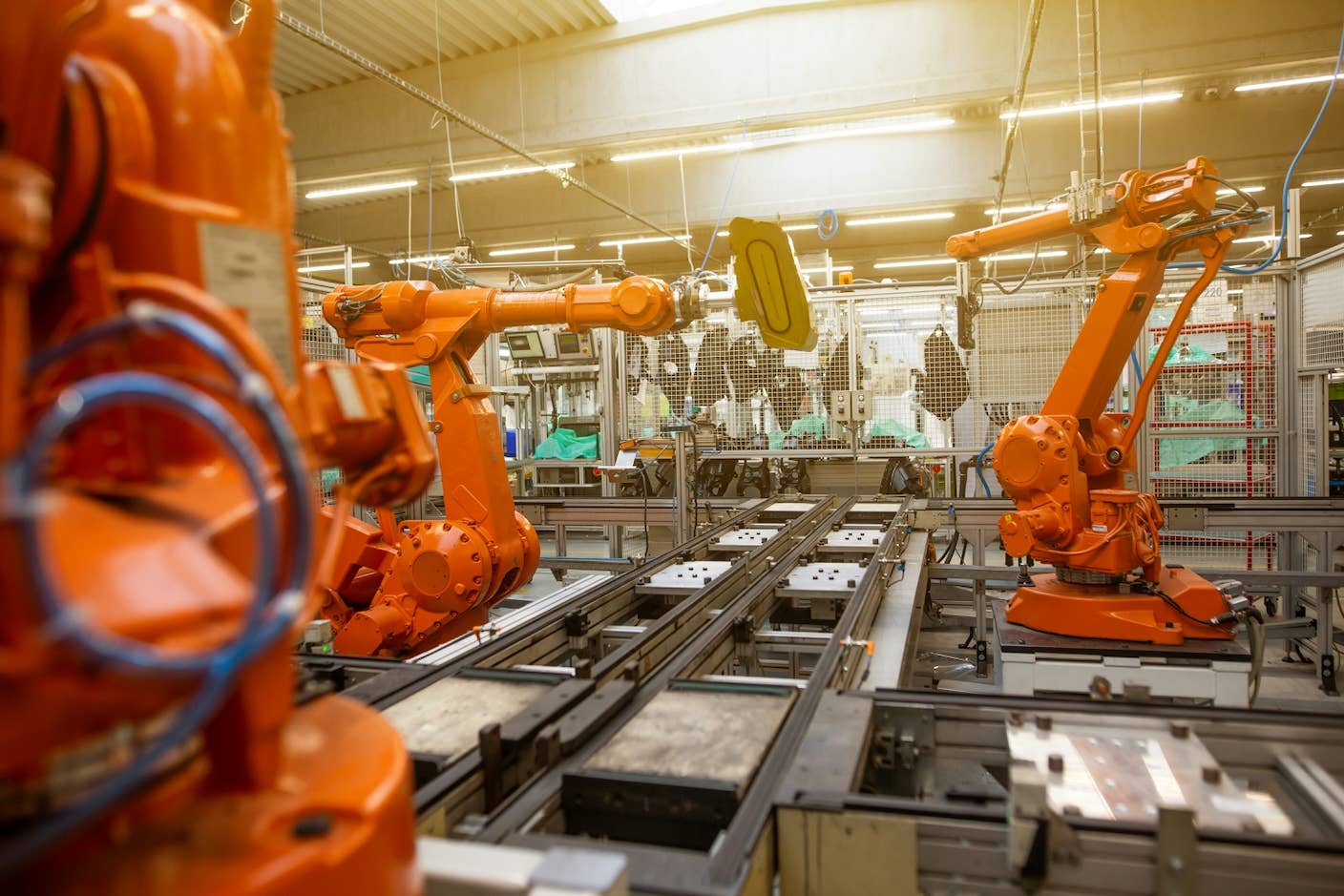

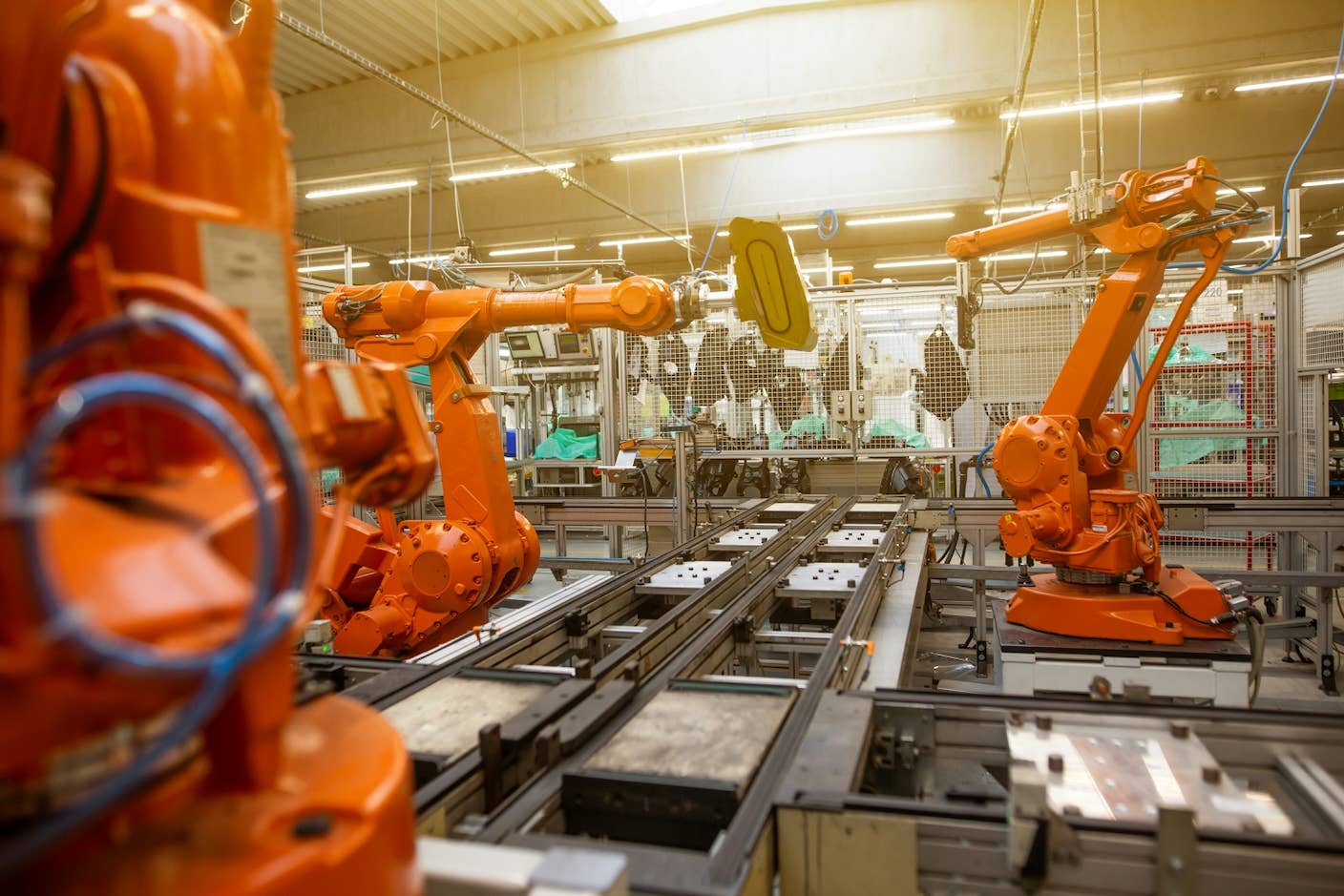

Robotics

AI Can Now Design and Run Thousands of Experiments Without Human Hands. We Aren’t Ready for the Risk to Biosecurity.

Stephen D. Turner

New Algae Robots Swarm Like Locusts at the Flick of a Switch

Shelly Fan

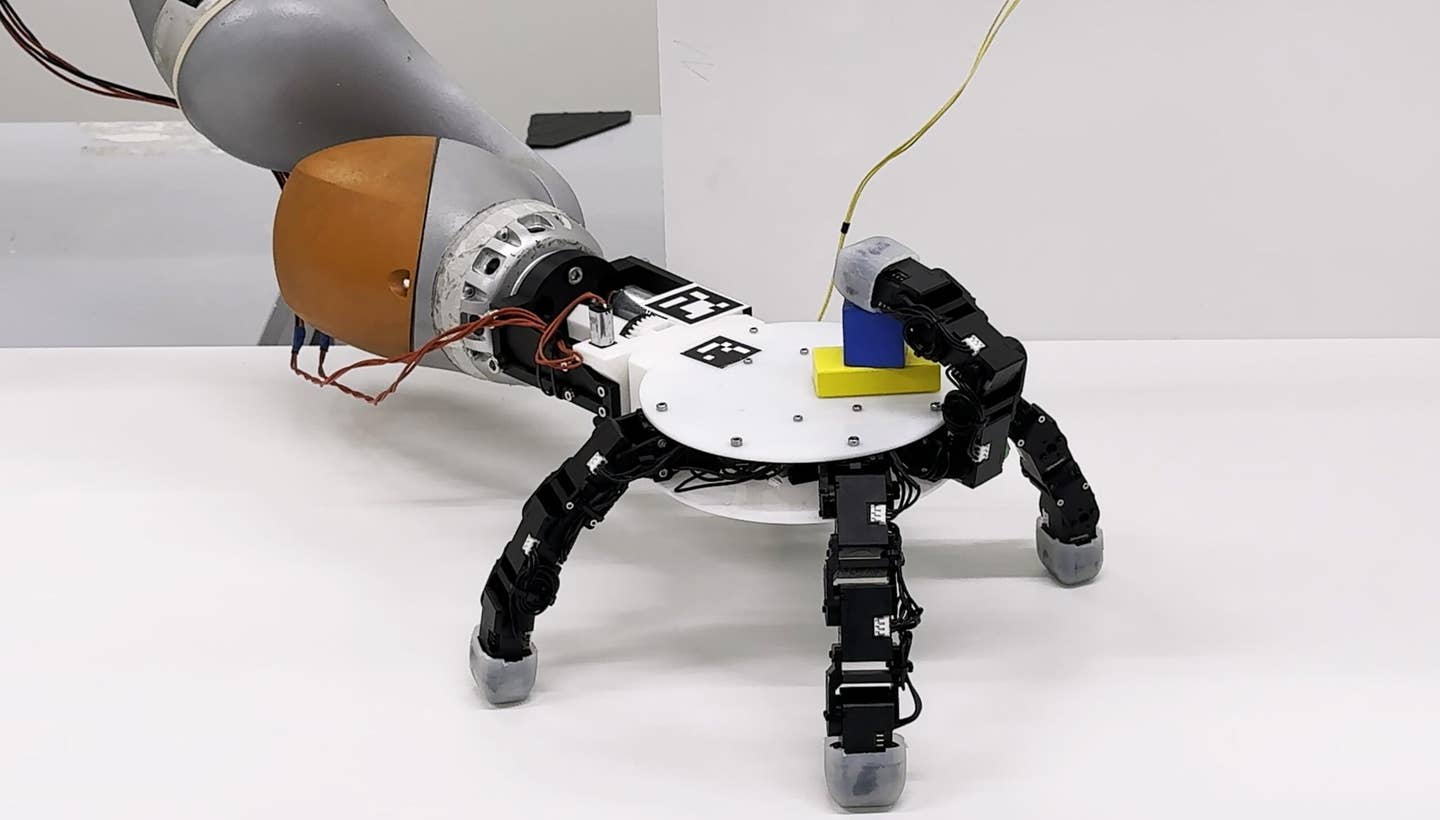

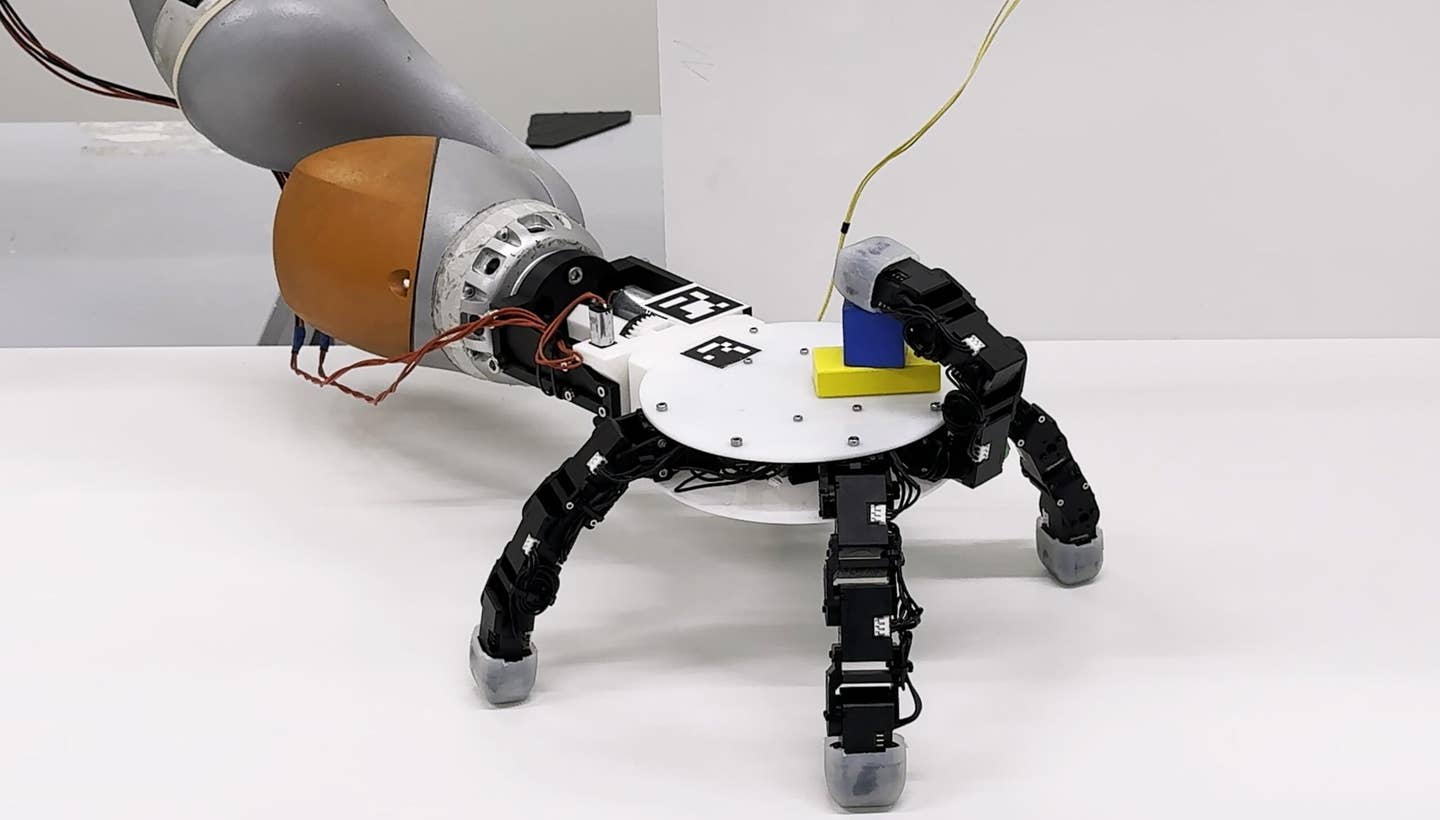

Robots With Different Designs Can Now Share Skills

Edd Gent

Popular

Sony’s Table-Tennis Robot Beat Elite Human Players With Unorthodox Moves

A Humanoid Robot Beat the Human World Record for a Half Marathon

Thousands of Everyday Drone Pilots Are Making a Google Street View From Above

This ‘Machine Eye’ Could Give Robots Superhuman Reflexes

This Robotic Hand Detaches and Skitters About Like Thing From ‘The Addams Family’

Waymo Closes in on Uber and Lyft Prices, as More Riders Say They Trust Robotaxis

Science

AI Lab Partners Are Rewiring the Hunt for New Drugs

Shelly Fan

The Fully Anesthetized Brain Can Still Track a Podcast

Shelly Fan

Physicists Have Measured ‘Negative Time’ in the Lab

Howard Wiseman

Popular

The Heart Rarely Gets Cancer. Scientists Think They Know Why.

How Does Imagination Really Work in the Brain? New Theory Upends What We Knew

What We Actually See—and Don’t See—Shows Consciousness Is Only the Tip of the Iceberg

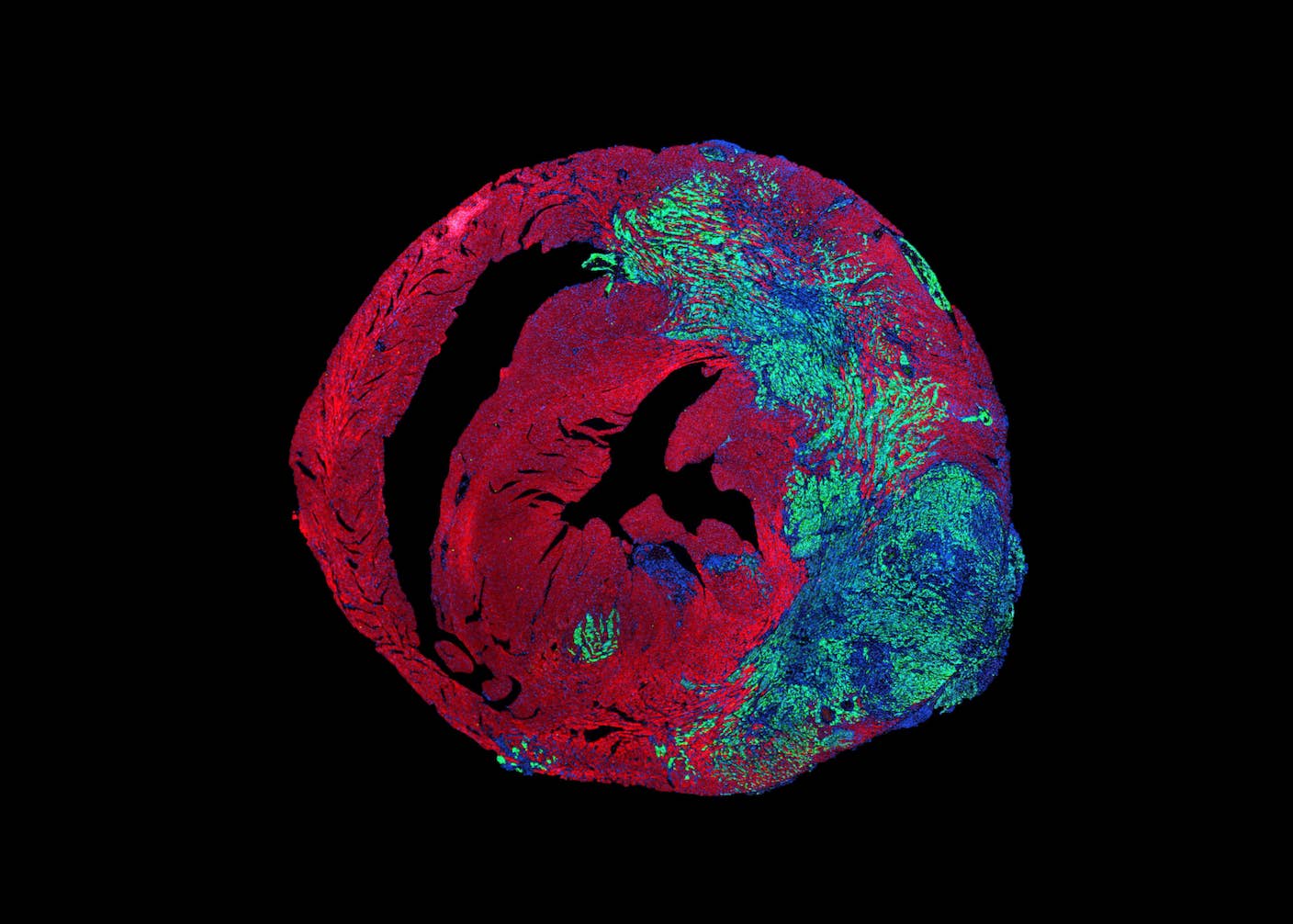

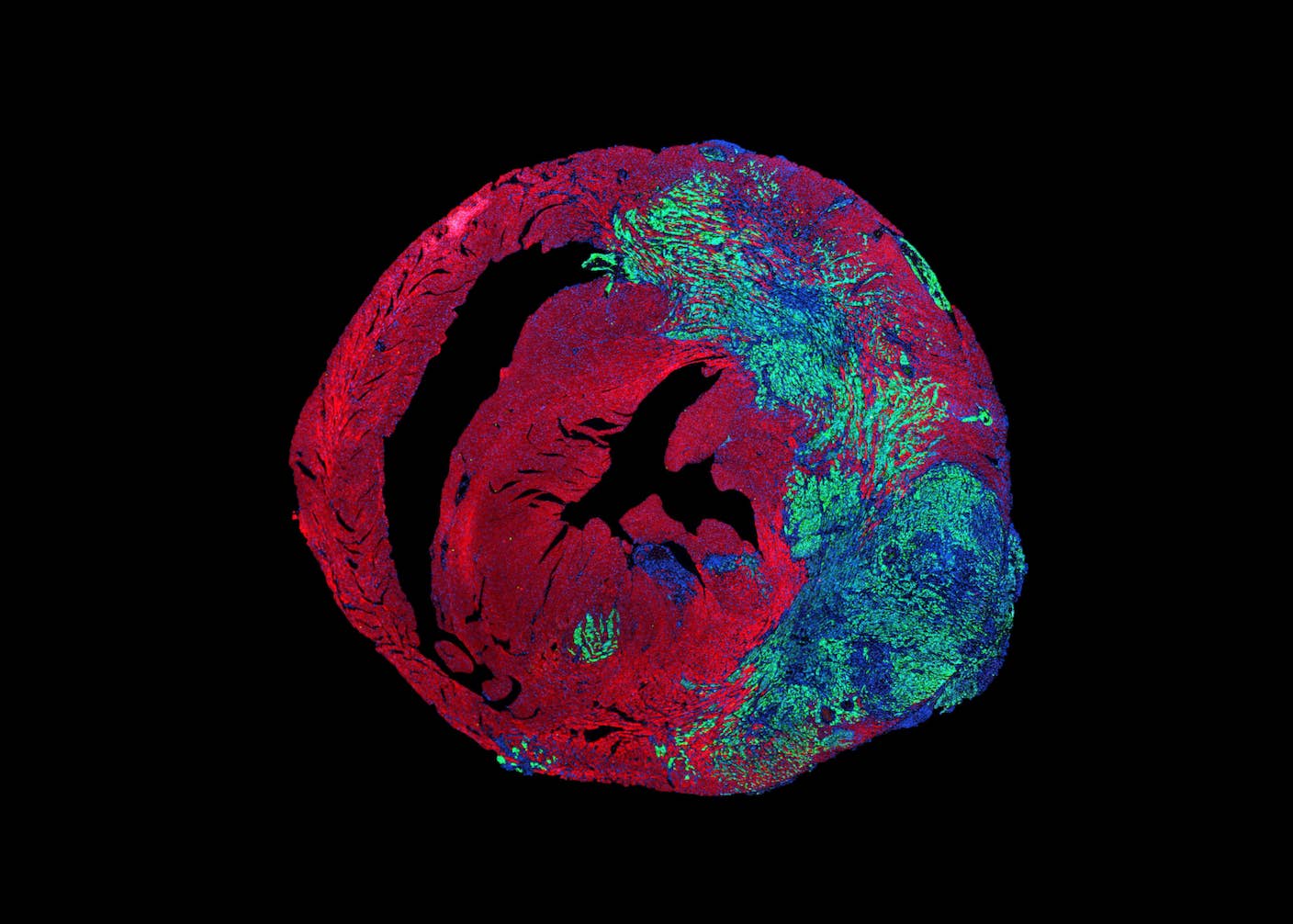

These Mini Brains Just Learned to Solve a Classic Engineering Problem

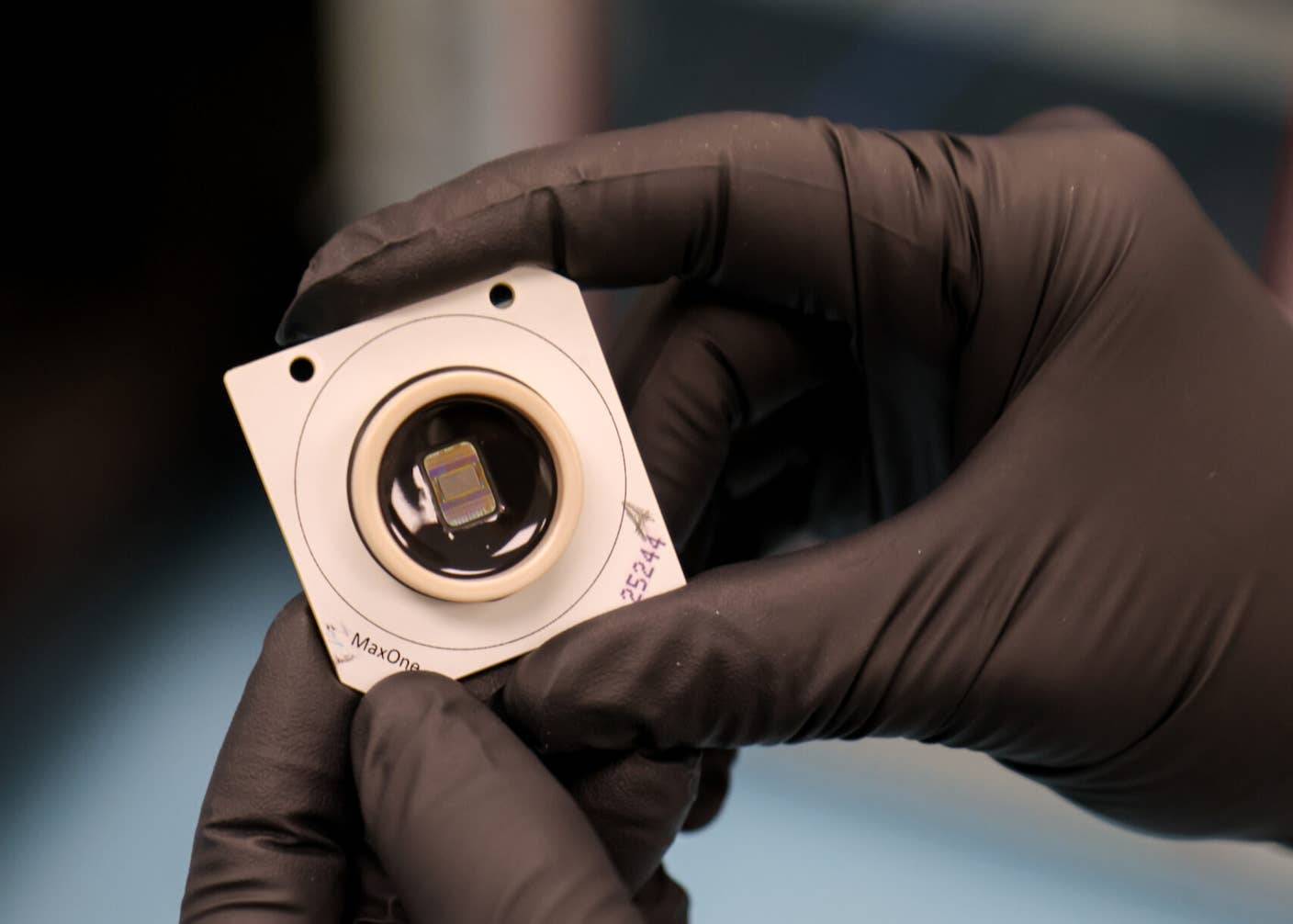

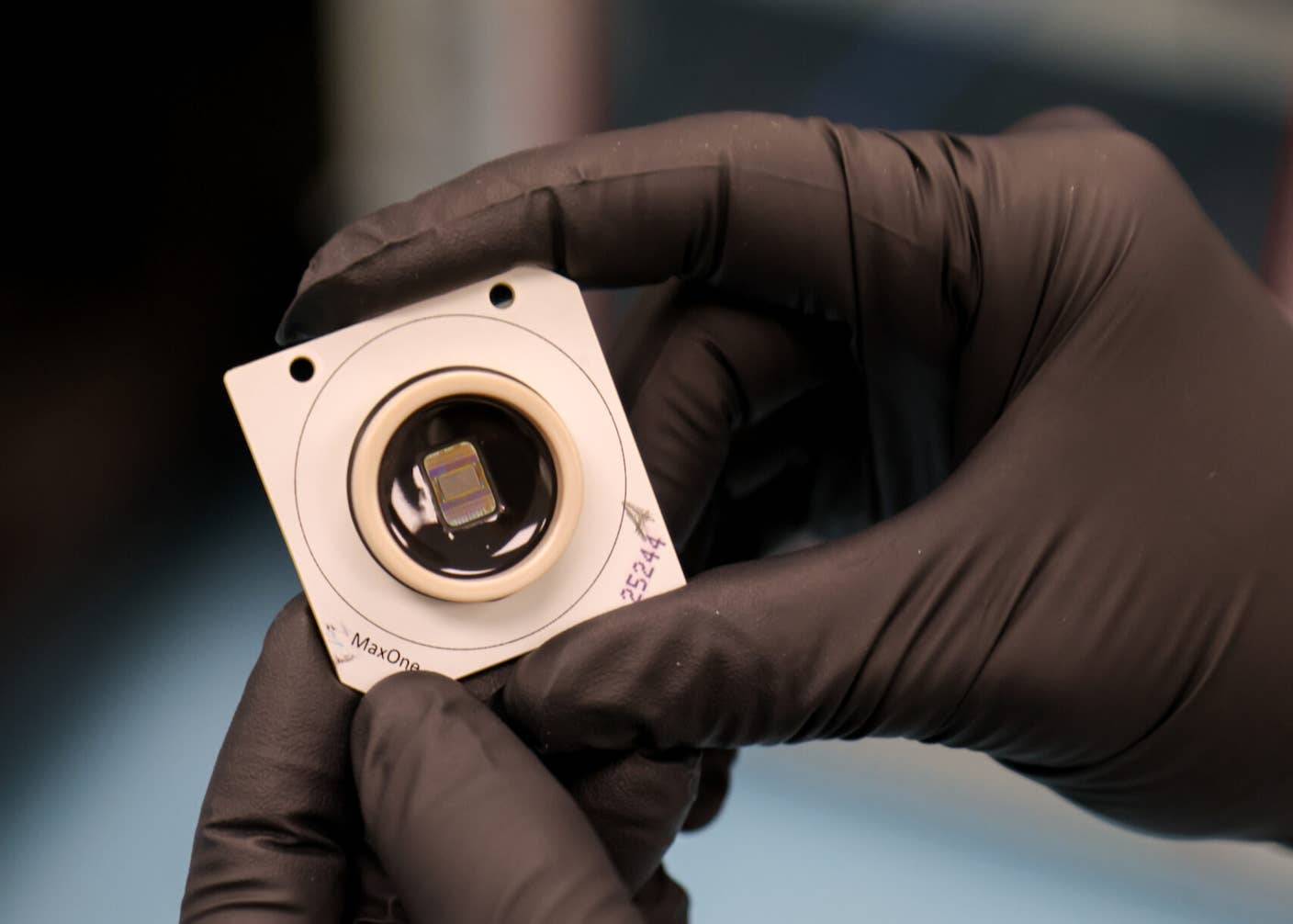

New Device Detects Brain Waves in Mini Brains Mimicking Early Human Development

This Brain Pattern Could Signal the Moment Consciousness Slips Away