The SpiNNaker Supercomputer, Modeled After the Human Brain, Is Up and Running

We've long used the brain as inspiration for computers, but the SpiNNaker supercomputer, switched on this month, is probably the closest we’ve come to recreating it in silicon. Now scientists hope to use the supercomputer to model the very thing that inspired its design.

The brain is the most complex machine in the known universe, but that complexity comes primarily from its architecture rather than the individual components that make it up. Its highly interconnected structure means that relatively simple messages exchanged between billions of individual neurons add up to carry out highly complex computations.

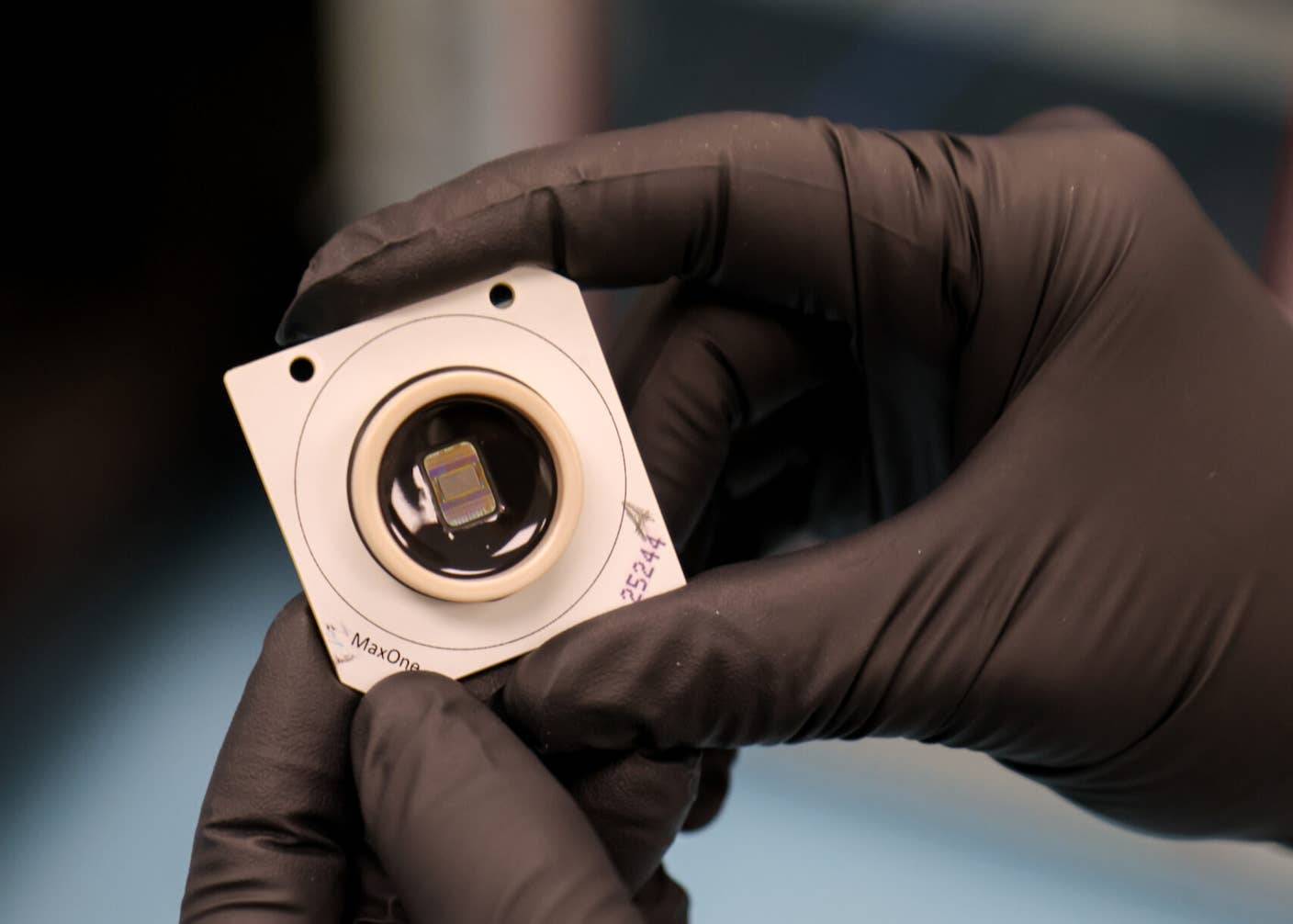

That’s the paradigm that inspired the “Spiking Neural Network Architecture” (SpiNNaker) supercomputer at the University of Manchester in the UK. The project is the brainchild of Steve Furber, the designer of the original ARM processor. After a decade of development, a million-core version of the machine, which will eventually be able to simulate up to a billion neurons, was switched on earlier this month.

The idea of splitting computation into very small chunks and spreading them over many processors is already the leading approach to supercomputing. But even the most parallel systems require a lot of communication, and messages may have to pack in a lot of information, such as the task that needs to be completed or the data that needs to be processed.

In contrast, messages in the brain consist of simple electrochemical impulses, or spikes, passed between neurons, with information encoded primarily in the timing or the rate of those spikes (which of the two matters more is debated among neuroscientists). Each neuron is connected to thousands of others via synapses, and complex computation emerges from the way spikes cascade through these highly connected networks.
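
To make the rate-versus-timing distinction concrete, here is a minimal Python sketch (not taken from SpiNNaker’s software) that decodes two hypothetical spike trains both ways. The 100 ms window and the spike times are illustrative assumptions. Both trains contain four spikes, so a rate code cannot tell them apart, while a latency (first-spike timing) code can.

```python
# Illustrative only: two ways a downstream neuron might "read" a spike train.
# Spike times are in milliseconds; the 100 ms window is an assumption.


def firing_rate_hz(spike_times_ms, window_ms):
    """Rate code: the information is how many spikes arrive per second."""
    count = sum(1 for t in spike_times_ms if 0 <= t < window_ms)
    return count * 1000.0 / window_ms


def first_spike_latency_ms(spike_times_ms):
    """Timing (latency) code: the information is how soon the first spike arrives."""
    return min(spike_times_ms) if spike_times_ms else None


# Two hypothetical neurons with identical rates but different timing.
early = [2.0, 30.0, 60.0, 90.0]
late = [25.0, 50.0, 75.0, 99.0]

print(firing_rate_hz(early, 100), firing_rate_hz(late, 100))        # 40.0 40.0
print(first_spike_latency_ms(early), first_spike_latency_ms(late))  # 2.0 25.0
```

Which scheme the brain relies on more is exactly the open question the paragraph mentions; the sketch only shows that the two readings can disagree about the same data.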

The SpiNNaker machine attempts to replicate this using a communication scheme called Address Event Representation. Each of the million cores can simulate roughly a million synapses, which, depending on the model, means either 1,000 neurons with 1,000 connections each or 100 neurons with 10,000 connections each. Information is encoded in the timing of spikes and the identity of the neuron sending them: when a neuron fires, it broadcasts a tiny packet of data containing only its address, and the timing of the spike is conveyed implicitly by when that packet arrives.
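
The following Python sketch illustrates the idea under simplifying assumptions: time advances in discrete ticks, a spike packet is represented only by the integer address of the neuron that fired, and the receiving side keeps a hypothetical fan-out table mapping source addresses to target synapses. The names `AERNetwork`, `connect`, `step`, and `neurons_per_core` are invented for illustration and are not SpiNNaker’s real routing or software API. The last lines check the per-core synapse budget quoted above.

```python
from collections import defaultdict

# Hypothetical sketch of Address Event Representation (AER), not SpiNNaker's API.
# A spike "packet" is just the integer address of the neuron that fired; when
# the spike happened is implied by the tick in which the packet is delivered.


class AERNetwork:
    def __init__(self):
        # source address -> list of (target address, synaptic weight)
        self.fan_out = defaultdict(list)
        # summed synaptic input per target neuron for the current tick
        self.inbox = defaultdict(float)

    def connect(self, src, dst, weight):
        self.fan_out[src].append((dst, weight))

    def deliver(self, address):
        # The receiving side, not the sender, knows who listens to this address.
        for dst, weight in self.fan_out.get(address, []):
            self.inbox[dst] += weight

    def step(self, fired_addresses):
        """Run one tick: deliver one address-only packet per neuron that fired."""
        self.inbox.clear()
        for address in fired_addresses:
            self.deliver(address)
        return dict(self.inbox)


def neurons_per_core(synapses_per_core, connections_per_neuron):
    # A fixed synapse budget is split across neurons: more connections, fewer neurons.
    return synapses_per_core // connections_per_neuron


SYNAPSES_PER_CORE = 1_000_000  # "roughly a million synapses" per core
CORES = 1_000_000              # the million-core machine

net = AERNetwork()
net.connect(7, 1, 0.5)
net.connect(7, 2, 0.25)
net.connect(3, 2, 1.0)
print(net.step([7, 3]))  # {1: 0.5, 2: 1.25}

print(neurons_per_core(SYNAPSES_PER_CORE, 1_000))          # 1000
print(neurons_per_core(SYNAPSES_PER_CORE, 10_000))         # 100
print(CORES * neurons_per_core(SYNAPSES_PER_CORE, 1_000))  # 1000000000
```

Because a packet carries nothing but an address, the sender does no work beyond announcing that it fired; deciding what that spike means for each target is a table lookup on the receiving side. The budget arithmetic also shows how 1,000 neurons per core across a million cores lines up with the billion-neuron figure.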

By modeling their machine on the architecture of the brain, the researchers hope to be able to simulate more biological neurons in real time than any other machine on the planet. The project is funded by the European Human Brain Project, a ten-year science mega-project aimed at bringing together neuroscientists and computer scientists to understand the brain, and researchers will be able to apply for time on the machine to run their simulations.

Importantly, it’s possible to implement various different neuronal models on the machine. The operation of neurons involves a variety of complex biological processes, and it’s still unclear whether this complexity is an artefact of evolution or central to the brain’s ability to process information. The ability to simulate up to a billion simple neurons or millions of more complex ones on the same machine should help to slowly tease out the answer.
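
A leaky integrate-and-fire (LIF) neuron is a common textbook example of the “simple” end of that spectrum: its membrane voltage leaks toward a resting value, integrates input current, and emits a spike and resets when it crosses a threshold. The Python sketch below uses forward Euler integration; the parameter values and units are illustrative assumptions, not SpiNNaker’s defaults, and `simulate_lif` is a hypothetical helper.

```python
def simulate_lif(input_current, dt=1.0, tau=20.0, v_rest=-65.0,
                 v_reset=-70.0, v_thresh=-50.0, r_m=10.0):
    """Leaky integrate-and-fire neuron; returns the step indices where it spikes.

    Assumed units: dt and tau in ms, voltages in mV, r_m in megaohms and
    input_current in nanoamps, so r_m * current is in mV.
    """
    v = v_rest
    spikes = []
    for step, current in enumerate(input_current):
        # Forward Euler step of: tau * dV/dt = -(V - v_rest) + r_m * I
        v += (-(v - v_rest) + r_m * current) * dt / tau
        if v >= v_thresh:
            spikes.append(step)  # emit a spike...
            v = v_reset          # ...and reset the membrane voltage
    return spikes


quiet = simulate_lif([0.0] * 200)
weak = simulate_lif([1.0] * 200)    # settles near -55 mV, below threshold
strong = simulate_lif([2.0] * 200)  # heads toward -45 mV, so it fires repeatedly
print(len(quiet), len(weak), len(strong) > 0)  # 0 0 True
```

More detailed models add ion-channel dynamics and extra state per neuron, which is why the same hardware fits far fewer of them.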

Even at a billion neurons, the machine still represents only about one percent of the human brain, so it will be limited to investigating isolated networks of neurons. But the previous 500,000-core machine has already been used to run useful simulations of the basal ganglia (an area affected in Parkinson’s disease) and of an outer layer of the brain that processes sensory information.
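
A quick check of the “about one percent” figure, assuming the commonly cited estimate of roughly 86 billion neurons in the human brain (a number the article itself does not give):

```python
SPINNAKER_NEURONS = 1_000_000_000     # "up to a billion neurons"
HUMAN_BRAIN_NEURONS = 86_000_000_000  # assumed estimate, not from the article

fraction = SPINNAKER_NEURONS / HUMAN_BRAIN_NEURONS
print(f"{fraction:.1%}")  # 1.2%
```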

The full-scale supercomputer will make it possible to study even larger networks previously out of reach, which could lead to breakthroughs in our understanding of both the healthy and unhealthy functioning of the brain.

And while neurological simulation is the main goal for the machine, it could also provide a useful research tool for roboticists. Previous research has already shown a small board of SpiNNaker chips can be used to control a simple wheeled robot, but Furber thinks the SpiNNaker supercomputer could also be used to run large-scale networks that can process sensory input and generate motor output in real time and at low power.

That low power operation is of particular promise for robotics. The brain is dramatically more power-efficient than conventional supercomputers, and by borrowing from its principles SpiNNaker has managed to capture some of that efficiency. That could be important for running mobile robotic platforms that need to carry their own juice around.

This ability to run complex neural networks at low power has been one of the main commercial drivers for so-called neuromorphic computing devices that are physically modeled on the brain, such as IBM’s TrueNorth chip and Intel’s Loihi. The hope is that complex artificial intelligence applications normally run in massive data centers could be run on edge devices like smartphones, cars, and robots.

But these devices, including SpiNNaker, operate very differently from the leading AI approaches, and it’s not clear how easily techniques could be transferred between the two. The need to adopt an entirely new programming paradigm is likely to limit widespread adoption, and the lack of commercial traction for the aforementioned devices seems to back that up.

At the same time, though, this new paradigm could potentially lead to dramatic breakthroughs in massively parallel computing. SpiNNaker departs from many of the foundational principles of how supercomputers work, and those departures make it much more flexible and error-tolerant.

For now, the machine is likely to be firmly focused on accelerating our understanding of how the brain works. But its designers also hope those findings could in turn point the way to more efficient and powerful approaches to computing.

Image Credit: Adrian Grosu / Shutterstock.com
