In early 2013, a distributed computing project called Einstein@Home registered a petaFLOP/s (10^15 floating-point operations per second) of computing power for the first time. Considering that Einstein@Home is a volunteer network of personal computers crunching numbers in their downtime, that’s quite a feat. What’s even more remarkable is that Einstein@Home wasn’t the first distributed project to reach a petaFLOP/s.

You might be thinking, of course it isn’t. IBM’s Roadrunner supercomputer was the first back in June 2008. And you’d be off by eight months. In fact, the distributed computing network Folding@Home first recorded a petaFLOP/s in September 2007.

As home computers have become nearly ubiquitous, unused computing power has risen in tandem. Just think how often your laptop is asleep on your desk. So, why let all those idle processors go to waste? Einstein@Home, a web of over 335,000 private personal computers, and other projects like it aim to put lazy machines to work.

It’s a brilliant idea. Like Airbnb or Getaround, distributed computing locates unused resources and links them up with users. However, unlike Airbnb and other “sharing economy” businesses, distributed computing is strictly volunteer—and it’s been around for fourteen years.

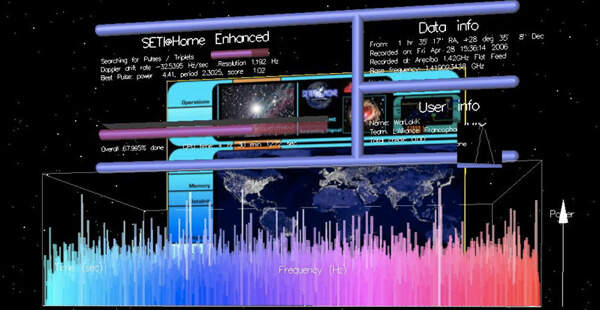

Large-scale distributed computing first went mainstream with SETI@Home in 1999. (When I heard about SETI@Home, I couldn’t sign up my sluggish brick of a laptop fast enough.) The software behind SETI@Home was relaunched as BOINC in 2002. Ten years on, BOINC boasts 2.5 million users and an average of roughly seven petaFLOP/s of computing power. That would earn BOINC fifth place on the Top500 list of the most powerful supercomputers.
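For a sense of scale, here is a quick back-of-envelope sketch of what those totals imply per participant. It uses only the figures quoted in this article and assumes, unrealistically, that the work is spread evenly across every registered machine or user; the function name `per_participant_gflops` is just an illustrative label.

```python
# Back-of-envelope arithmetic using the figures quoted in the article.
# Assumption: throughput is spread evenly across all participants,
# which real volunteer networks certainly do not guarantee.
PETA = 10**15  # FLOP/s in one petaFLOP/s
GIGA = 10**9   # FLOP/s in one gigaFLOP/s


def per_participant_gflops(total_petaflops: float, participants: int) -> float:
    """Average GFLOP/s contributed per participant."""
    return total_petaflops * PETA / participants / GIGA


# Einstein@Home: 1 petaFLOP/s across roughly 335,000 computers.
einstein = per_participant_gflops(1, 335_000)
# BOINC overall: roughly 7 petaFLOP/s across 2.5 million users.
boinc = per_participant_gflops(7, 2_500_000)

print(f"Einstein@Home: ~{einstein:.1f} GFLOP/s per computer")
print(f"BOINC overall: ~{boinc:.1f} GFLOP/s per user")
```

Either way, the average works out to about three gigaFLOP/s apiece, which is a modest share for any one machine. The supercomputer-class totals come from sheer headcount.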

Over half of BOINC’s users volunteer their machines for SETI@Home. But there are 82 other projects listed by BOINC stats. (And probably more that aren’t listed.) Volunteers donate their computers to “cure diseases, study global warming, and discover pulsars.” In fact, it’s pretty much the same list of problems researchers use regular old run-of-the-mill supercomputers to crack.

Einstein@Home is searching for evidence of gravitational waves, and along the way has discovered 46 new radio pulsars. SETI@Home continues to sift radio waves from Arecibo for signs of extraterrestrial intelligence. (No calls yet.) Folding@Home models protein folding and has spawned 100 scientific papers. And Folding@Home’s novel use of GPUs (that 2007 mark was set thanks in large part to linked PlayStation 3s) is now making its way into supercomputers like Titan, currently the world’s most powerful boolean beast.

We spend millions to build the latest supercomputers, but distributed computing liberates supercomputing power from existing, untapped resources at no extra hardware cost. And it gives folks the chance to participate in cutting-edge science. Generally, though, it’s just a great cause. (You can sign up here.)