Google’s Robot Car Crash Is a Very Positive Sign

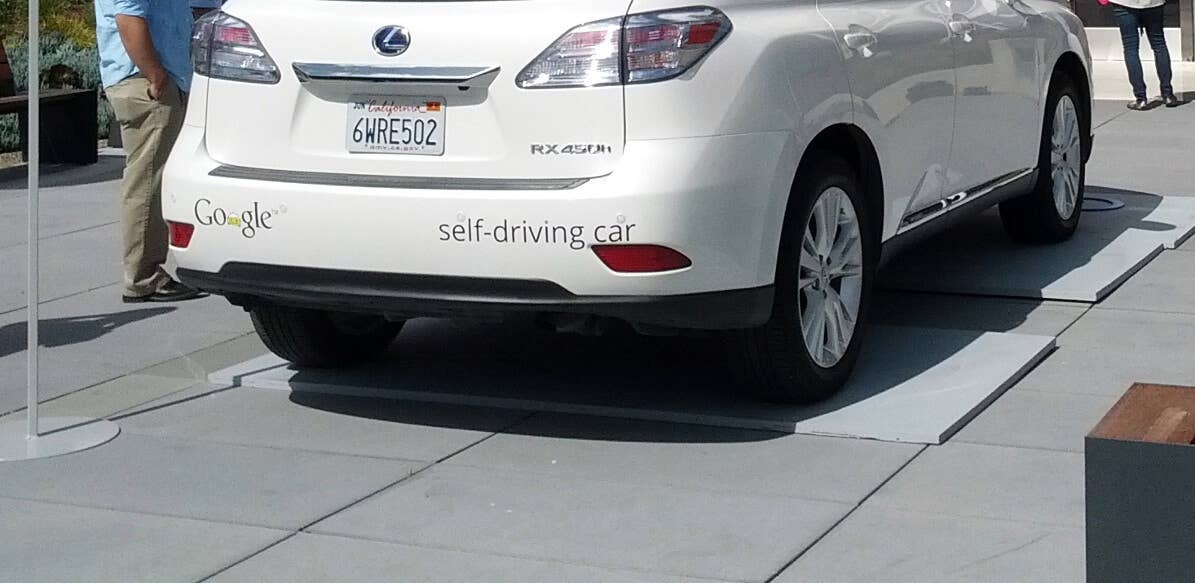

Reports released reveal that one of Google’s Gen-2 vehicles (the Lexus) has had a fender-bender (with a bus) with some responsibility assigned to the system. This is the first crash of this type — all other impacts have been reported as fairly clearly the fault of the other driver.

This crash ties into an upcoming article I will be writing about driving in places where everybody violates the rules. I just landed from a trip to India, which is one of the strongest examples of this sort of road system, far more chaotic than California, but it got me thinking a bit more about the problems.

Google is thinking about them too. Google reports it just recently started experimenting with new behaviors, in this case when making a right turn on a red light off a major street where the right lane is extra wide. In that situation it has become common behavior for cars to effectively create two lanes out of one, with a straight-through group on the left and right turners hugging the curb. Under the vehicle code there is only one lane, so the first driver not turning would block everybody waiting to turn right, which they would find quite annoying. (In India, the lane markers are barely suggestions, and drivers, in vehicles of every width you can imagine, dynamically form their own patterns as needed.)

As such, Google wanted its car to be a good citizen and hug the right curb when making a right turn. The car did so, but found the way blocked by sandbags on a storm drain, so it had to “merge” back with the traffic in the left side of the lane. It did this when a bus was coming up on the left, and it made the assumption, as many would, that the bus would yield and slow a bit to let it in. The bus did not, and the Google car hit it, but at very low speed. The Google car could probably have avoided this with faster reflexes and a better read of the bus’s intent, and probably will in time, but more interesting is the question of what you expect of other drivers. The law doesn’t imagine this split lane or this “merge.” And of course the law doesn’t require people to slow down to let you in.

But driving in so many cities requires constantly expecting the other guy to slow down and let you in. (In places like Indonesia, the rules actually give the right-of-way to the guy who cuts you off, because you can see him and he can’t easily see you, so it’s your job to slow. Of course, robocars see in 360 degrees, so no car has a better view of the situation.)

While some people like to imagine that the important ethical questions for robocars revolve around choosing who to kill in an accident, that’s actually an extremely rare event. The real ethical issues revolve around how to drive when driving involves routinely breaking the law, not once in 100 lifetimes but once every minute. Or once every second, as is the case in India. To solve this problem, we must come up with a resolution, and we must eventually get the law to accept it for robocars the same way it accepts it for all the humans out there, who are almost never ticketed for these infractions.

So why is this a good thing? Because Google is starting to work on problems like these, and you need to solve these problems to drive even in orderly places like California. And yes, you are going to have some mistakes and some dings on the way there, and that’s a good thing, not a bad thing. Mistakes in negotiating who yields to whom are very unlikely to involve injury, as long as you don’t involve things smaller than cars (such as pedestrians). Robocars will need to not always yield in a game of chicken, or they can’t survive on the roads.

In this case, Google says it learned that big vehicles are much less likely to yield. In addition, it sounds like the vehicle’s confusion over the sandbags probably made the bus driver decide the vehicle was stuck. It’s still unclear to me why the car wasn’t able to abort its merge when it saw the bus was not going to yield, since the description has the car sideswiping the bus, not the other way around.

Nobody wants accidents — and some will play this accident as more than it is — but neither do we want so much caution that we never learn these lessons.

It’s also a good reminder that even Google, though it is the clear leader in the space, still has lots of work to do. A lot of people I talk to imagine that the tech problems have all been solved and all that’s left is getting legal and public acceptance. There is great progress being made, but nobody should expect these cars to be perfect today. That’s why they run with safety drivers, and did even before the law demanded it. This time the safety driver also decided the bus would yield and so let the car try its merge. But expect more of this as time goes forward.

Brad Templeton is Singularity University's Networks and Computing Chair. This article was originally published on Brad's blog.

Image Credit: Travis Wise/FlickrCC

Brad Templeton is a developer of and commentator on self-driving cars, software architect, board member of the Electronic Frontier Foundation, internet entrepreneur, futurist lecturer, writer and observer of cyberspace issues, hobby photographer, and an artist. Templeton has been a consultant on Google's team designing a driverless car and lectures and blogs about the emerging technology of automated transportation. He is also noted as a speaker and writer covering copyright law and political and social issues related to computing and networks. He is a director of the futurist Foresight Nanotech Institute, a think tank and public interest organization focused on transformative future technologies. Templeton was founder, publisher and software architect at ClariNet Communications Corp., which in the 1990s became the first internet-based business, creating an electronic newspaper. He has been active in the computer network community since 1979, participated in the building and growth of USENET from its earliest days, and in 1987 founded and edited a special USENET conference devoted to comedy. Templeton has been involved in the development of important pieces of software including VisiCalc, the world's first computer spreadsheet, and Stuffit for archiving and compressing computer files. In 1996, ClariNet joined the ACLU and others in opposing the Communications Decency Act, part of the Telecom bill passed during the Clinton Administration. The U.S. Supreme Court sided with the plaintiffs and ruled that the Act violated the First Amendment in seeking to impose anti-indecency standards on the internet.
