What's New Today

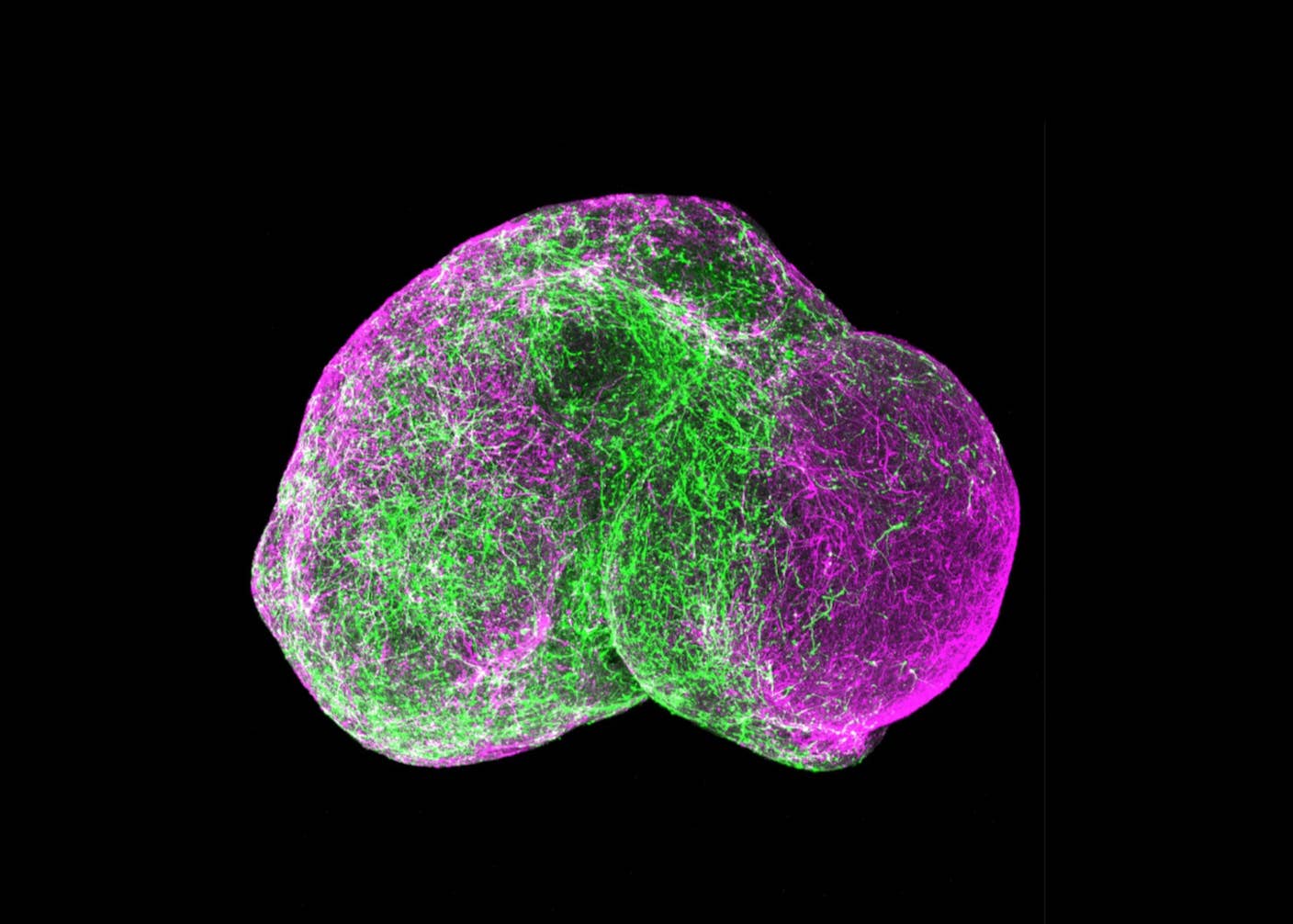

Scientists Grow Electronics Inside the Brains of Living Mice

Shelly Fan

Latest Stories

How to Build Better Digital Twins of the Human Brain

Andrea Luppi, Gustavo Deco, and Morten L. Kringelbach

Computing

Anthropic’s Mythos AI Uncovered Serious Security Holes in Every Major OS and Browser

Edd Gent

Artificial Intelligence

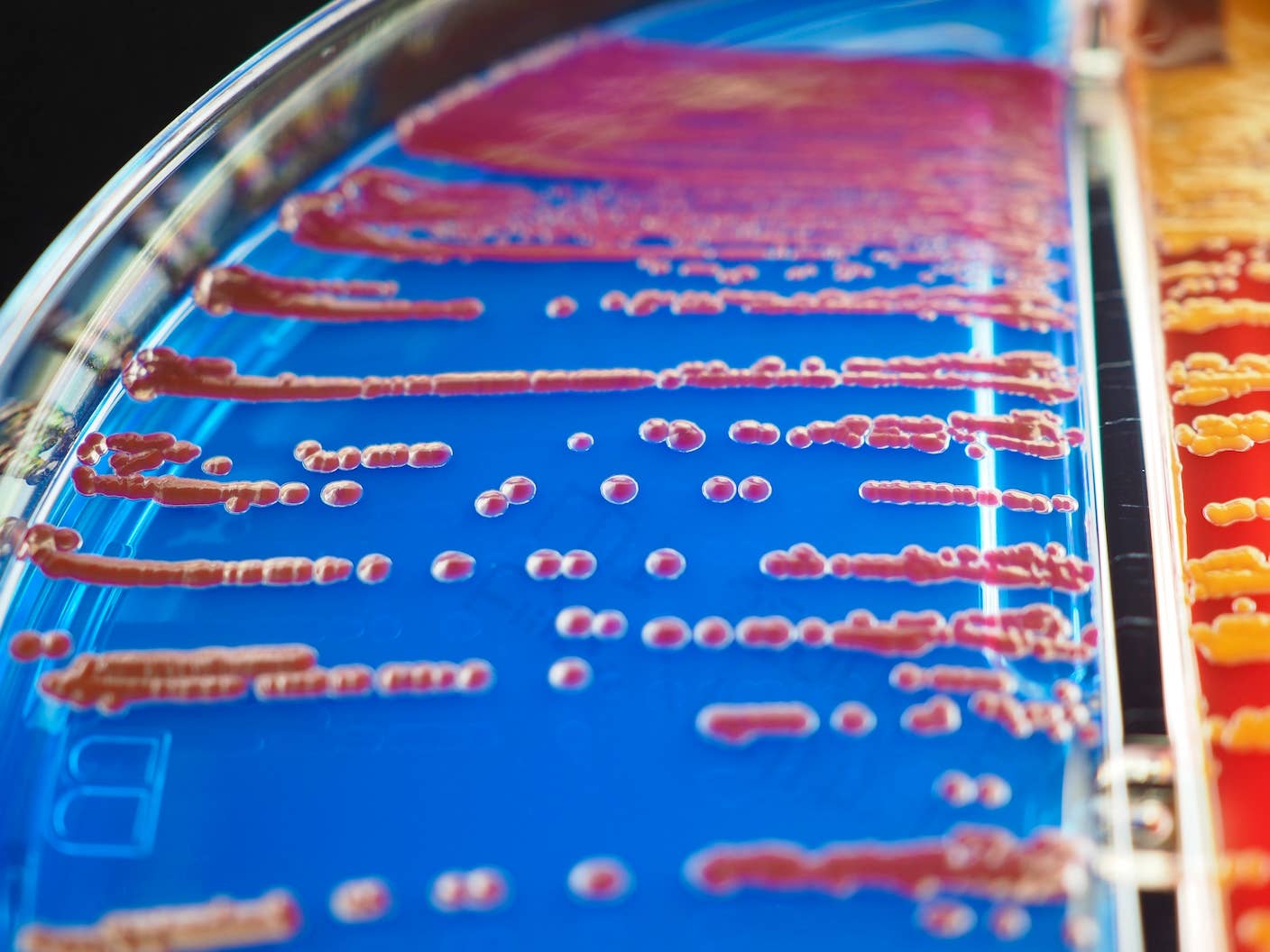

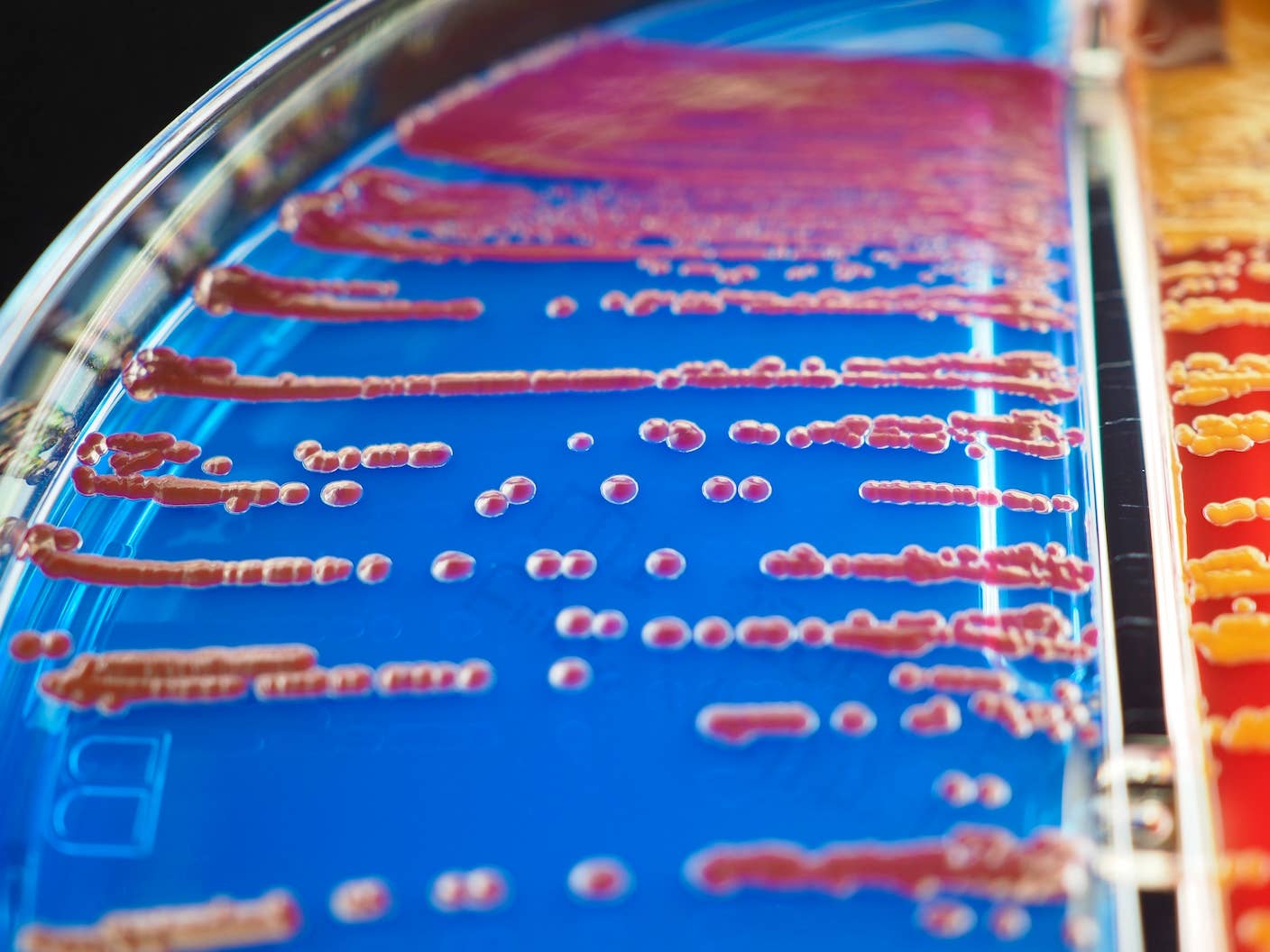

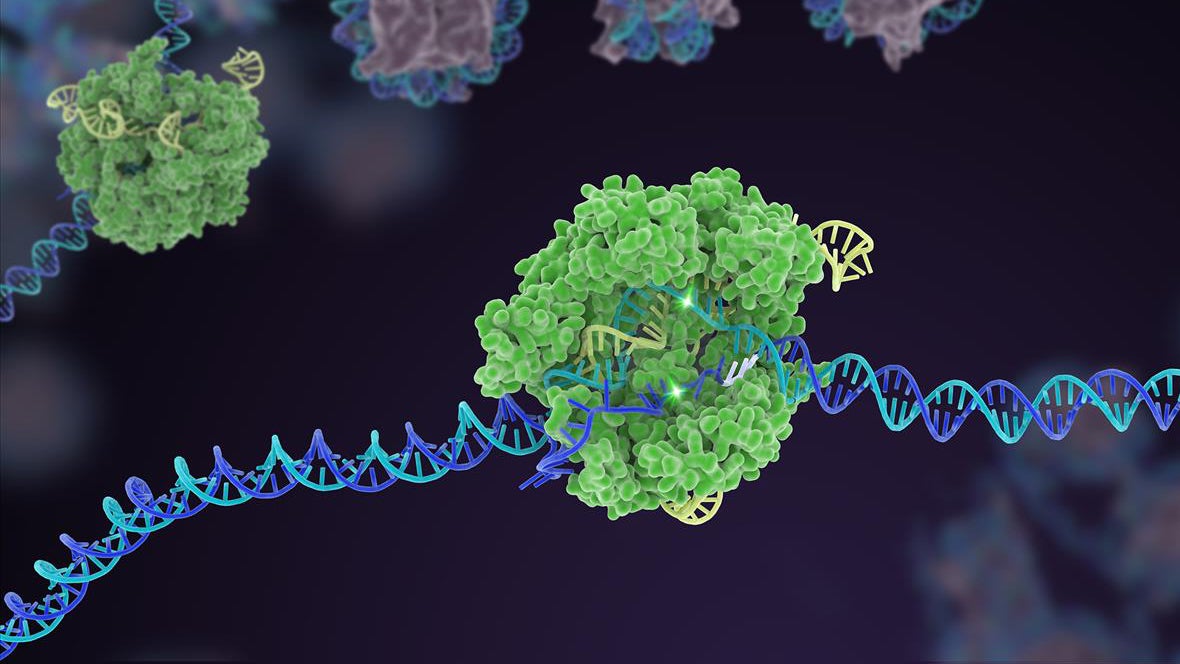

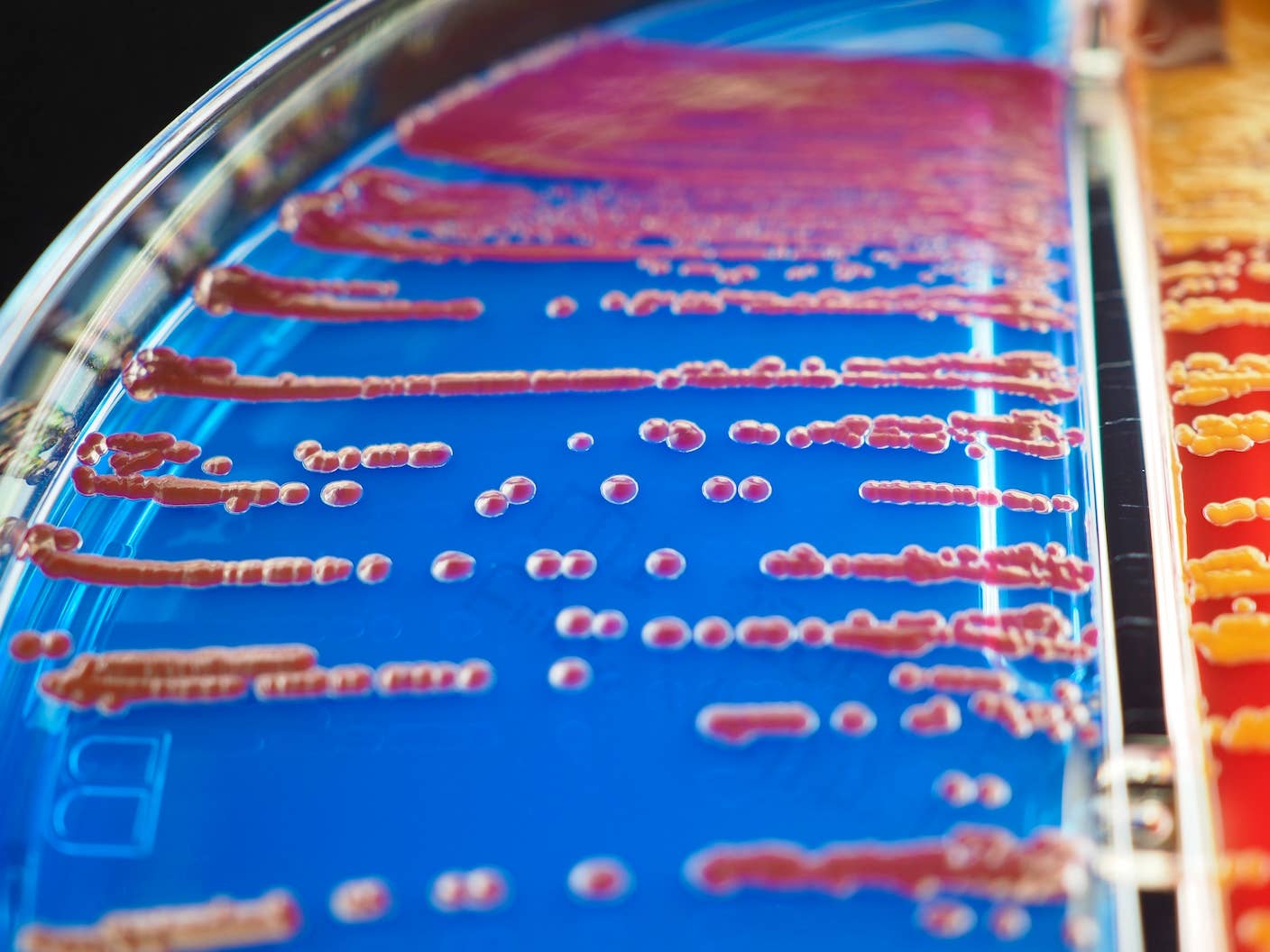

MIT Mined Bacteria for the Next CRISPR—and Found Hundreds of Potential New Tools

Shelly Fan

Computing

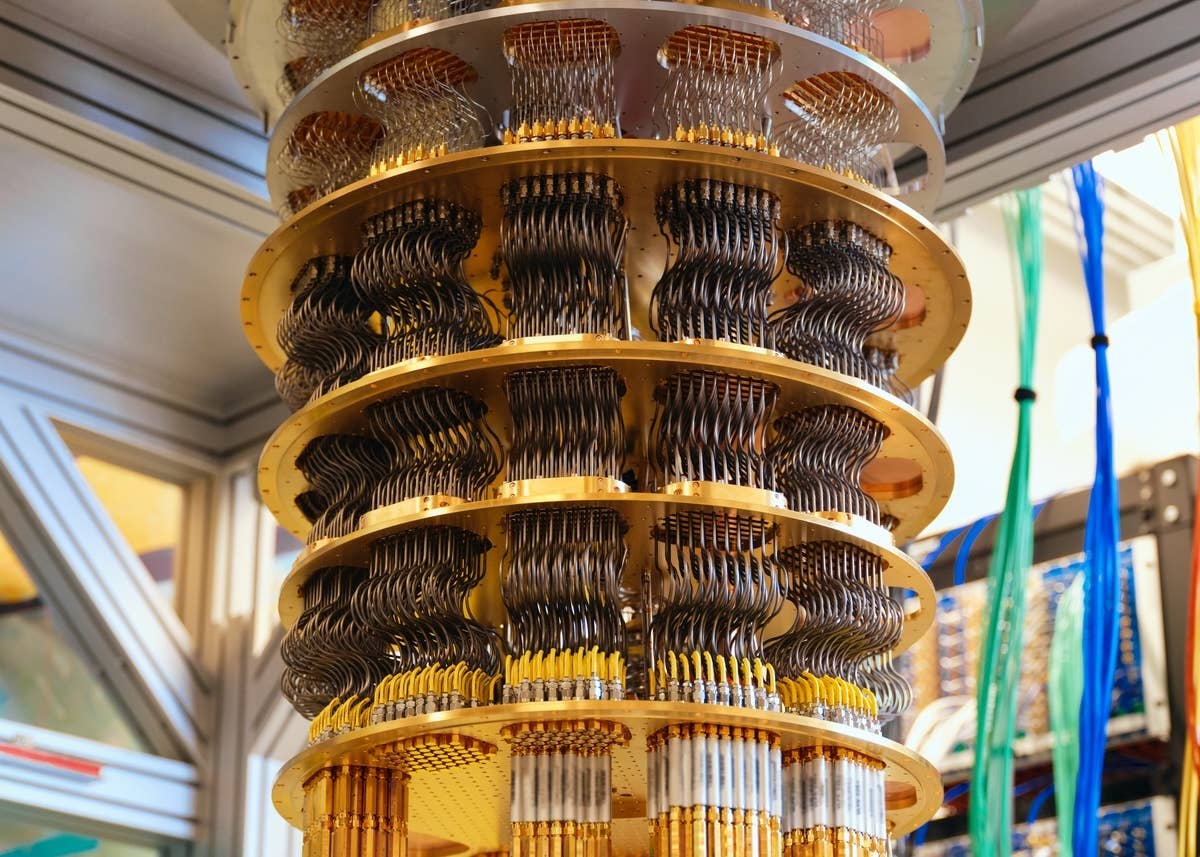

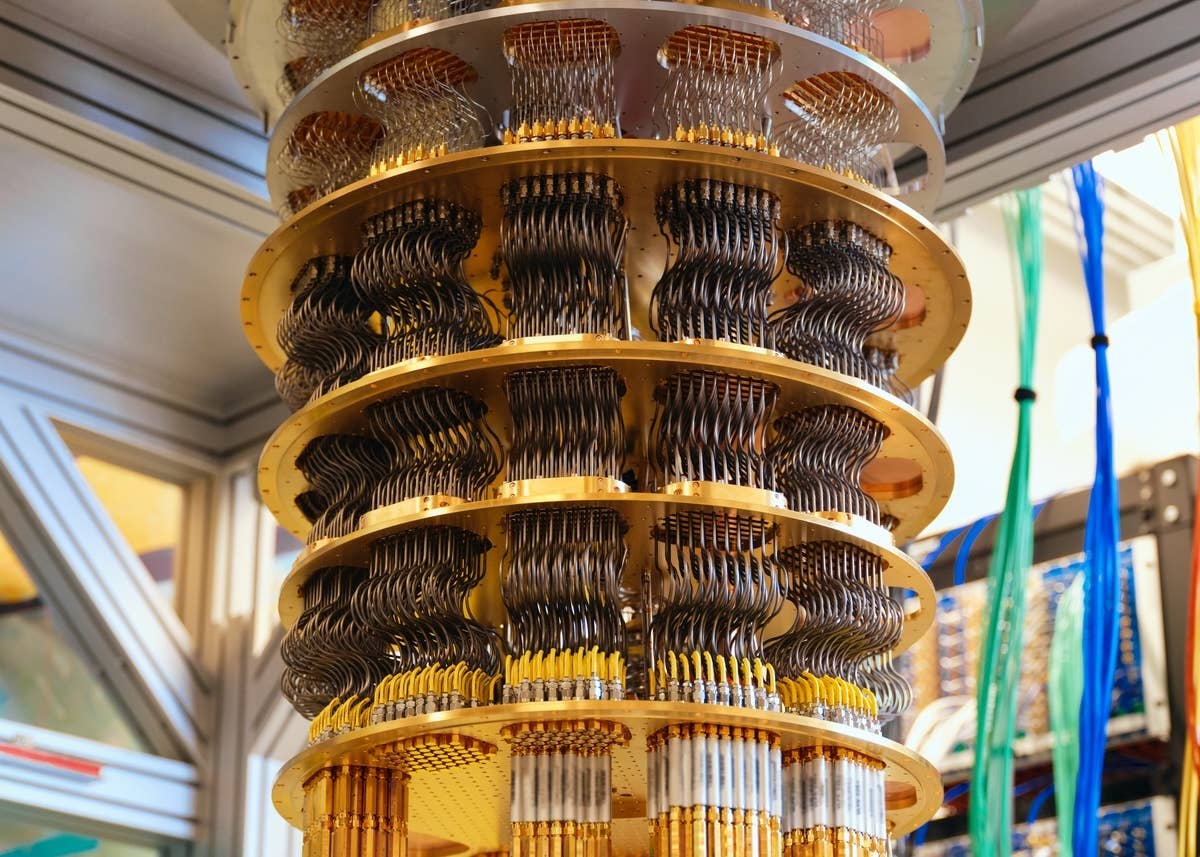

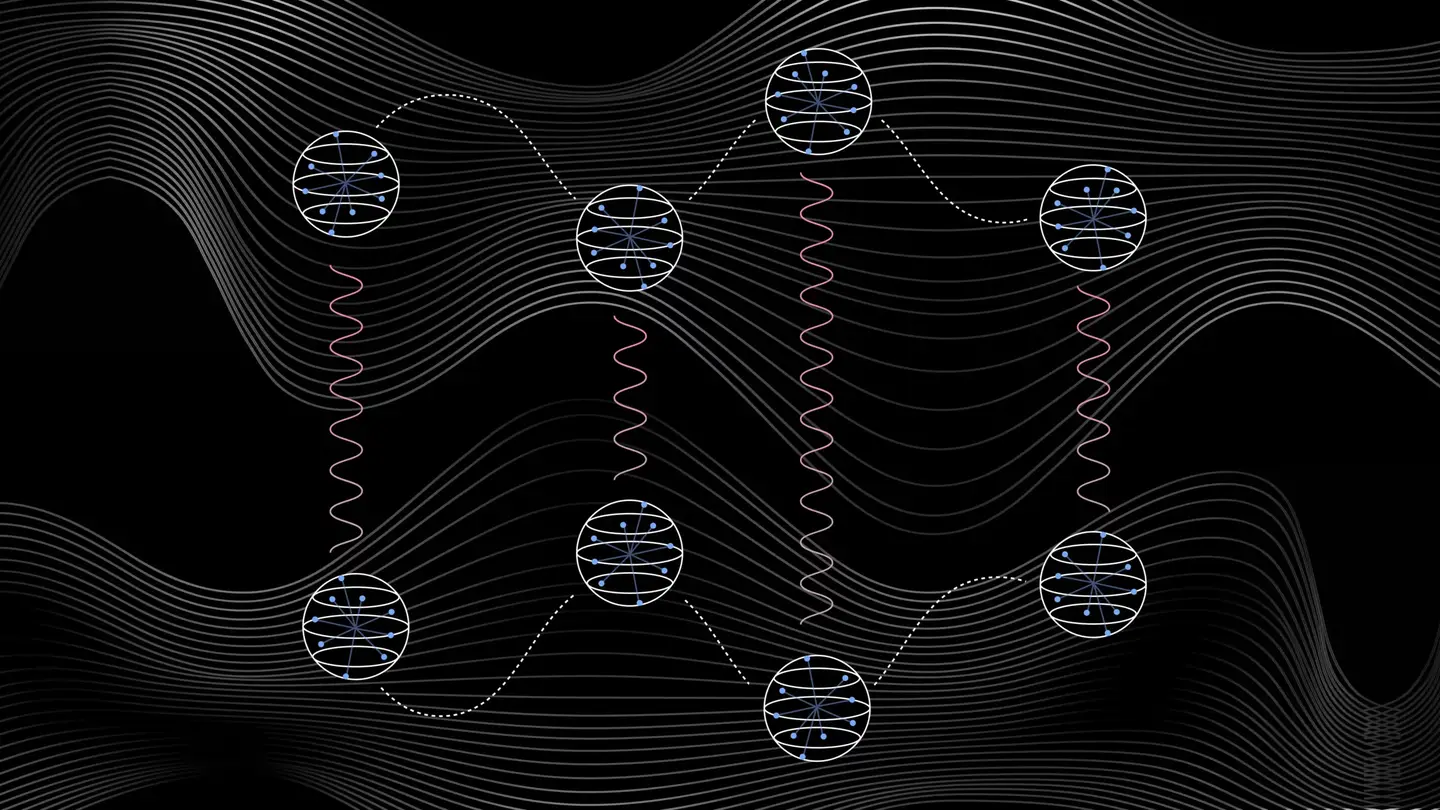

US Issues Grand Challenge: The First Fault-Tolerant Quantum Computer by 2028

Edd Gent

This Week’s Awesome Tech Stories From Around the Web (Through April 4)

SingularityHub Staff

Future

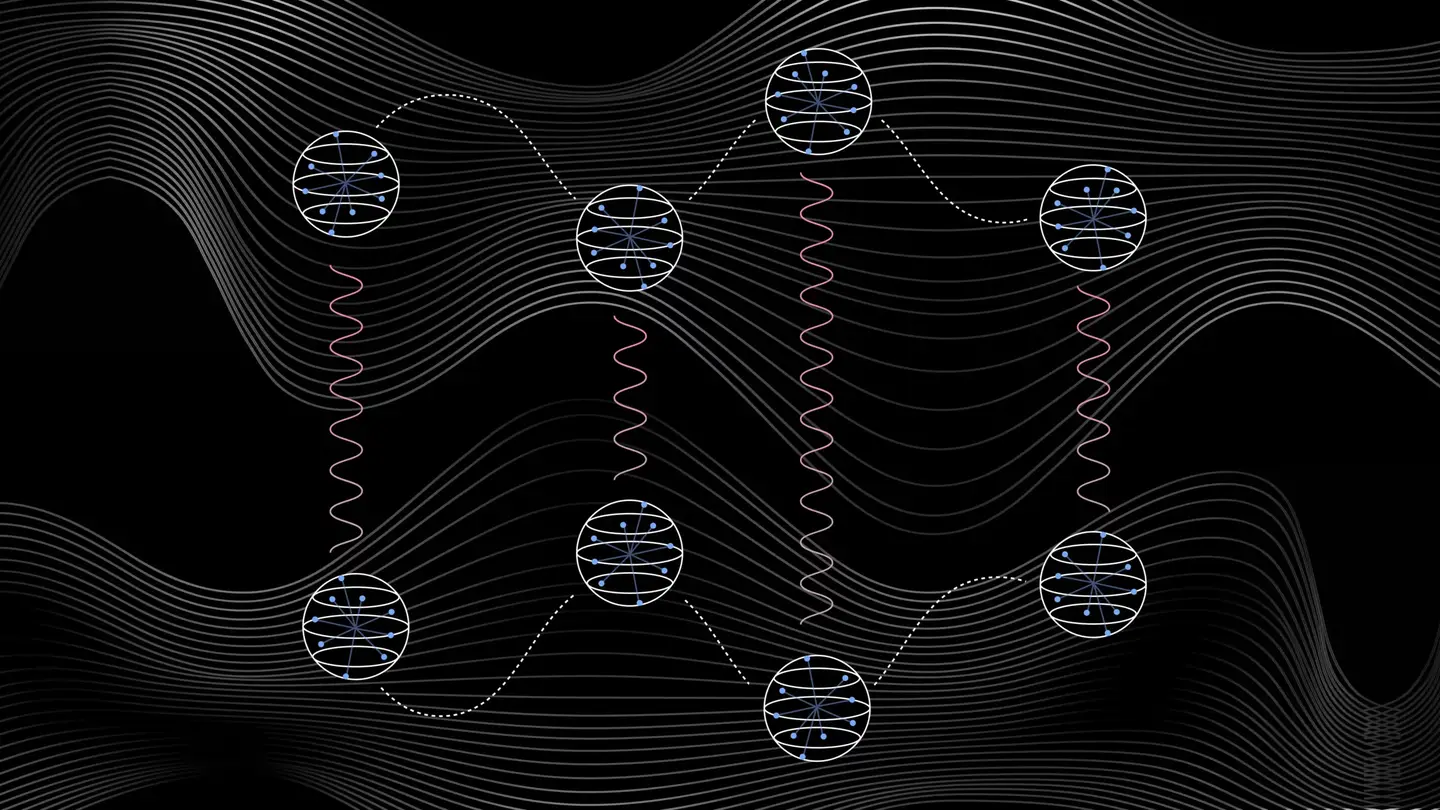

Five Ways Quantum Technology Could Shape Everyday Life

Adam Urwick, Salil Gunashekar, and Teodora Chis

What we’re reading

Featured

Biotechnology

Souped-Up CRISPR Gene Editor Replicates and Spreads Like a Virus

Shelly Fan

Editor's Picks

Explore topics

- Artificial Intelligence

- Biotechnology

- Computing

- Energy

- Future

- Robotics

- Science

- Space

- Tech

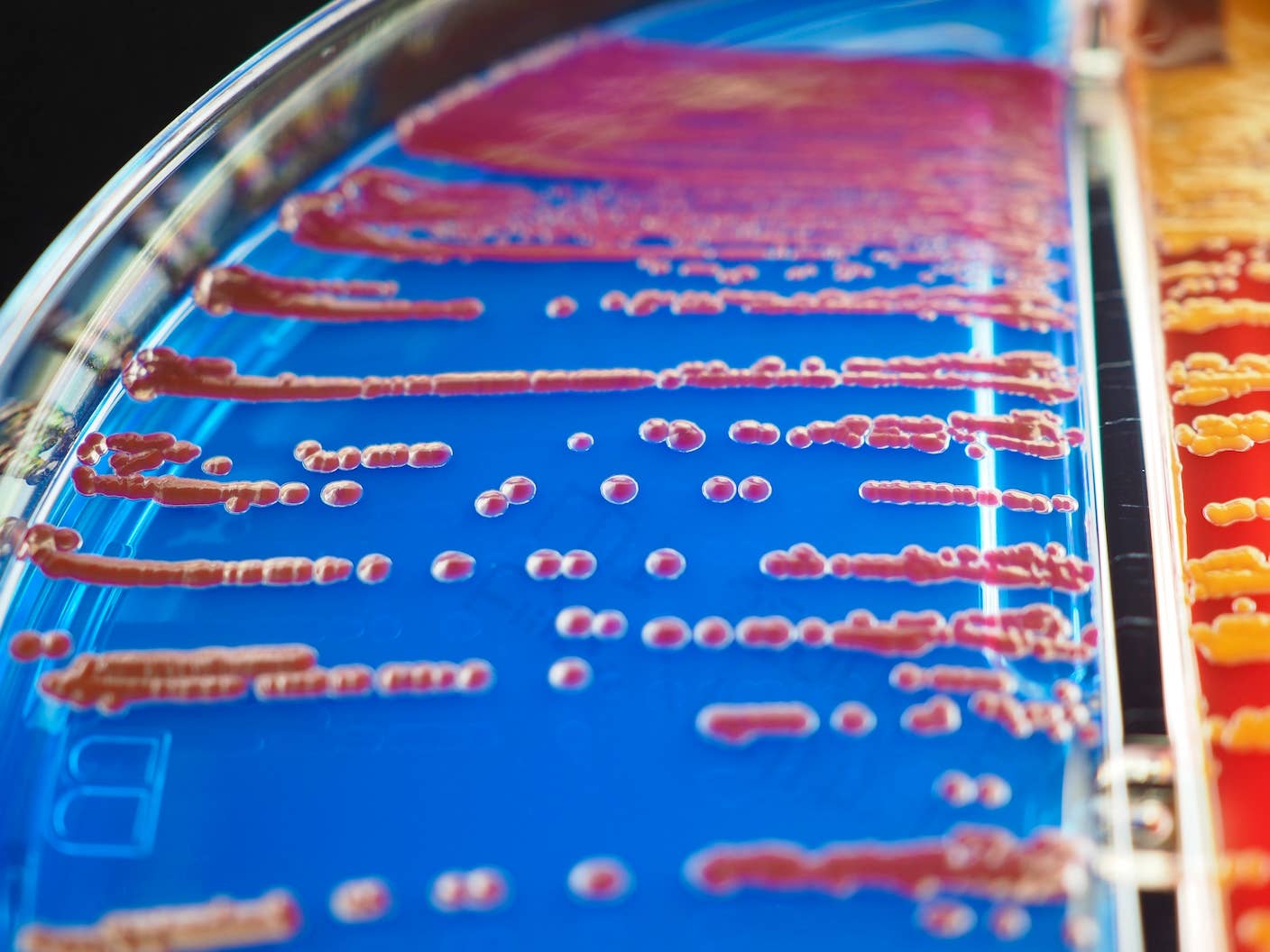

MIT Mined Bacteria for the Next CRISPR—and Found Hundreds of Potential New Tools

Shelly Fan

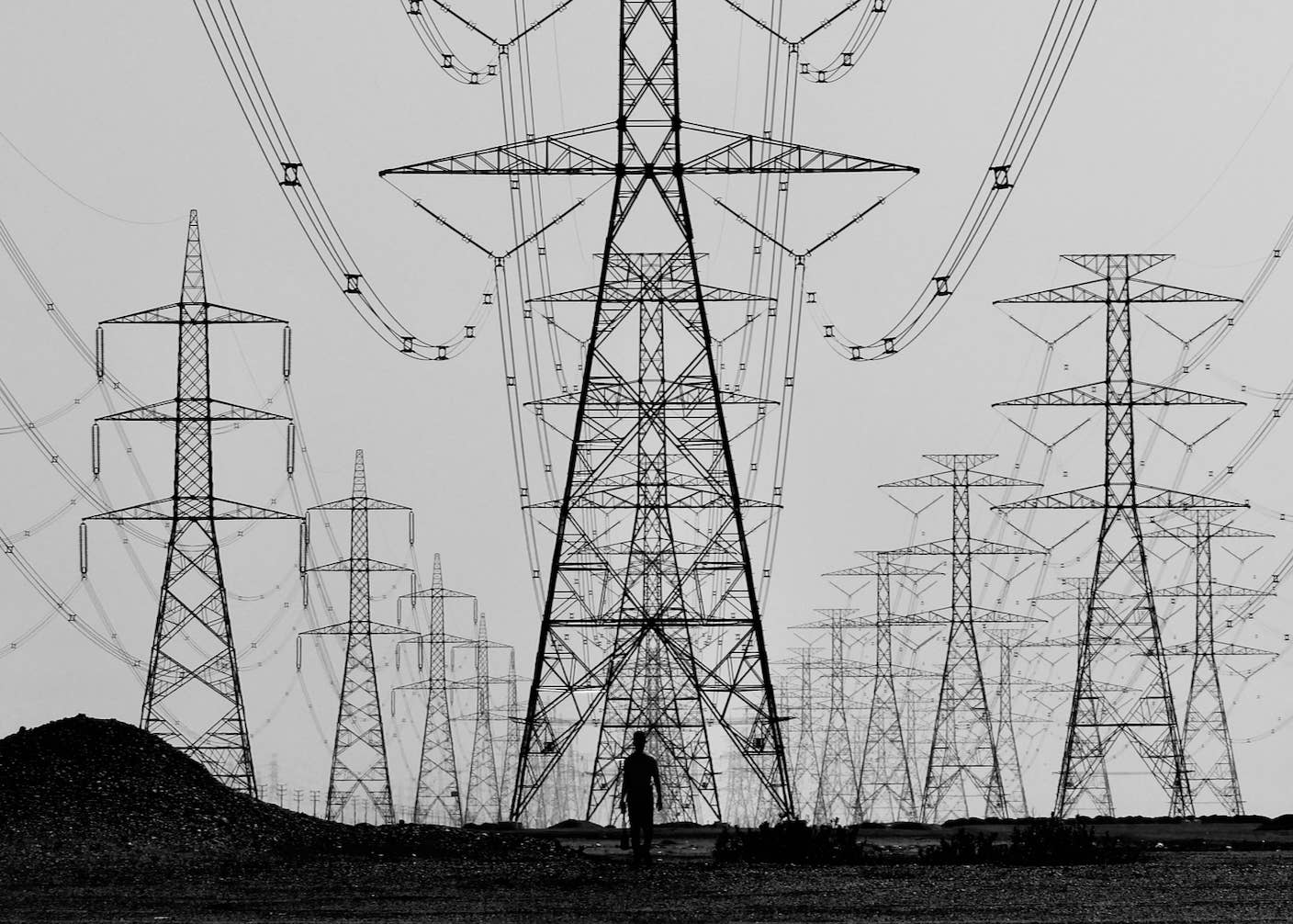

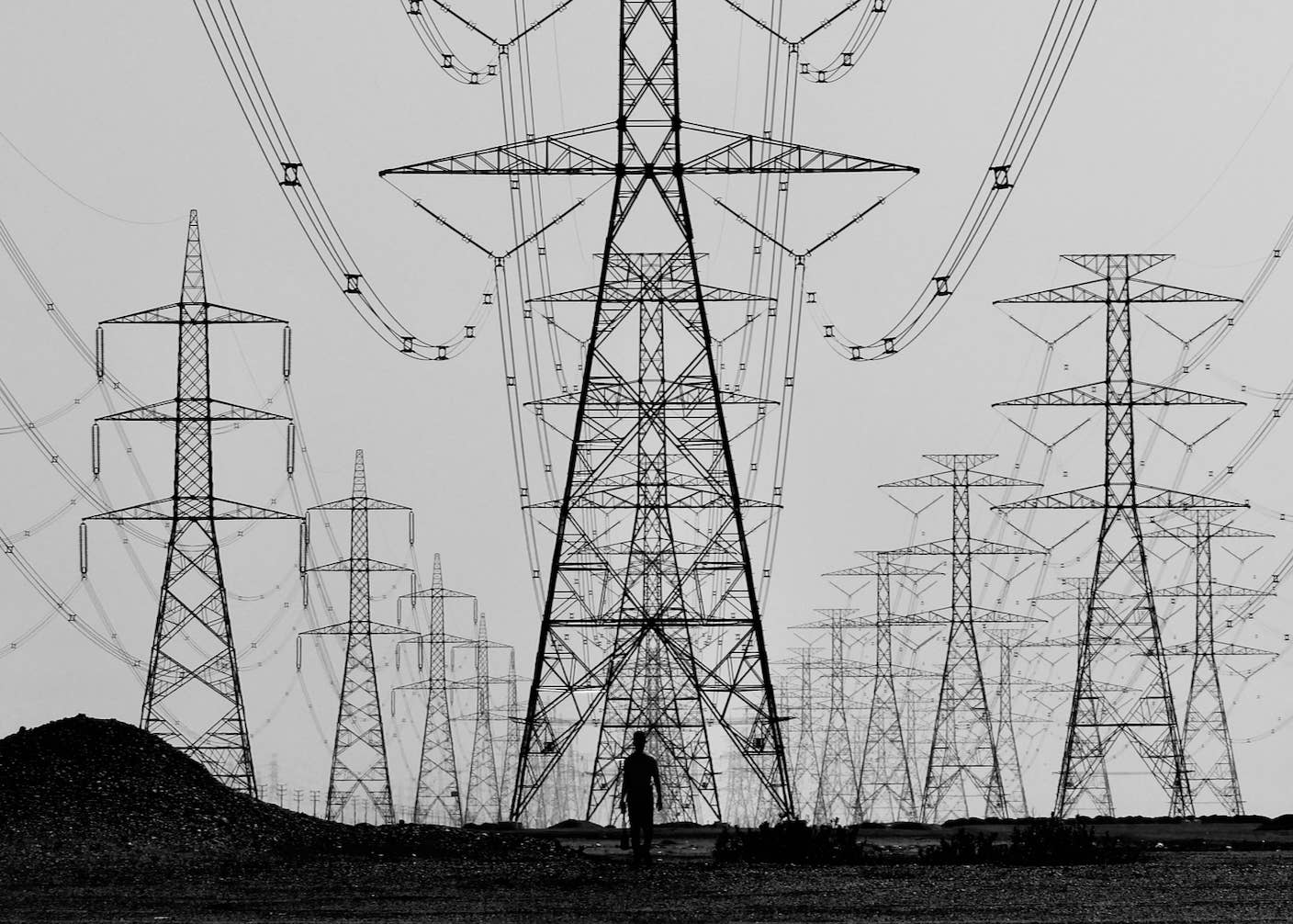

The Mad Scramble to Power AI Is Rewiring the US Grid

Edd Gent

Chatbots ‘Optimized to Please’ Make Us Less Likely to Admit When We’re Wrong

Shelly Fan

Google DeepMind Plans to Track AGI Progress With These 10 Traits of General Intelligence

Edd Gent

Tech Companies Are Blaming Massive Layoffs on AI. What’s Really Going On?

Uri Gal

Hackers Are Automating Cyberattacks With AI. Defenders Are Using It to Fight Back.

Edd Gent