Losing Control: The Dangers of Killer Robots

New technology could lead humans to relinquish control over decisions to use lethal force. As artificial intelligence advances, the possibility that machines could independently select and fire on targets is fast approaching. Fully autonomous weapons, also known as “killer robots,” are quickly moving from the realm of science fiction toward reality.

The unmanned Sea Hunter gets underway. At present it sails without weapons, but it exemplifies the move toward greater autonomy. Image credit: U.S. Navy/John F. Williams

These weapons, which could operate on land, in the air or at sea, threaten to revolutionize armed conflict and law enforcement in alarming ways. Proponents say these killer robots are necessary because modern combat moves so quickly, and because having robots do the fighting would keep soldiers and police officers out of harm’s way. But the threats to humanity would outweigh any military or law enforcement benefits.

Removing humans from the targeting decision would create a dangerous world. Machines would make life-and-death determinations outside of human control. The risk of disproportionate harm or erroneous targeting of civilians would increase. No person could be held responsible.

Given the moral, legal and accountability risks of fully autonomous weapons, preempting their development, production and use cannot wait. The best way to handle this threat is an international, legally binding ban on weapons that lack meaningful human control.

Preserving empathy and judgment

At least 20 countries have expressed in U.N. meetings the belief that humans should dictate the selection and engagement of targets. Many of them have echoed arguments laid out in a new report, of which I was the lead author. The report was released in April by Human Rights Watch and the Harvard Law School International Human Rights Clinic, two organizations that have been campaigning for a ban on fully autonomous weapons.

Retaining human control over weapons is a moral imperative. Because they possess empathy, people can feel the emotional weight of harming another individual. Their respect for human dignity can — and should — serve as a check on killing.

Robots, by contrast, lack real emotions, including compassion. In addition, inanimate machines could not truly understand the value of any human life they chose to take. Allowing them to determine when to use force would undermine human dignity.

Human control also promotes compliance with international law, which is designed to protect civilians and soldiers alike. For example, the laws of war prohibit disproportionate attacks in which expected civilian harm outweighs anticipated military advantage. Humans can apply their judgment, based on past experience and moral considerations, and make case-by-case determinations about proportionality.

It would be almost impossible, however, to replicate that judgment in fully autonomous weapons, and they could not be preprogrammed to handle all scenarios. As a result, these weapons would be unable to act as “reasonable commanders,” the traditional legal standard for handling complex and unforeseeable situations.

In addition, the loss of human control would threaten a target’s right not to be arbitrarily deprived of life. Upholding this fundamental human right is an obligation during law enforcement as well as military operations. Judgment calls are required to assess the necessity of an attack, and humans are better positioned than machines to make them.

Promoting accountability

Keeping a human in the loop on decisions to use force further ensures that accountability for unlawful acts is possible. Under international criminal law, a human operator would in most cases escape liability for the harm caused by a weapon that acted independently. Unless he or she intentionally used a fully autonomous weapon to commit a crime, it would be unfair and legally problematic to hold the operator responsible for the actions of a robot that the operator could neither prevent nor punish.

There are additional obstacles to finding programmers and manufacturers of fully autonomous weapons liable under civil law, in which a victim files a lawsuit against an alleged wrongdoer. The United States, for example, establishes immunity for most weapons manufacturers. It also has high standards for proving a product was defective in a way that would make a manufacturer legally responsible. In any case, victims from other countries would likely lack the access and money to sue a foreign entity. The gap in accountability would weaken deterrence of unlawful acts and leave victims unsatisfied that someone was punished for their suffering.

An opportunity to seize

At a U.N. meeting in Geneva in April, 94 countries recommended beginning formal discussions about “lethal autonomous weapons systems.” The talks would consider whether these systems should be restricted under the Convention on Conventional Weapons, a disarmament treaty that has regulated or banned several other types of weapons, including incendiary weapons and blinding lasers. The nations that have joined the treaty will meet in December for a review conference to set their agenda for future work. It is crucial that the members agree to start a formal process on lethal autonomous weapons systems in 2017.

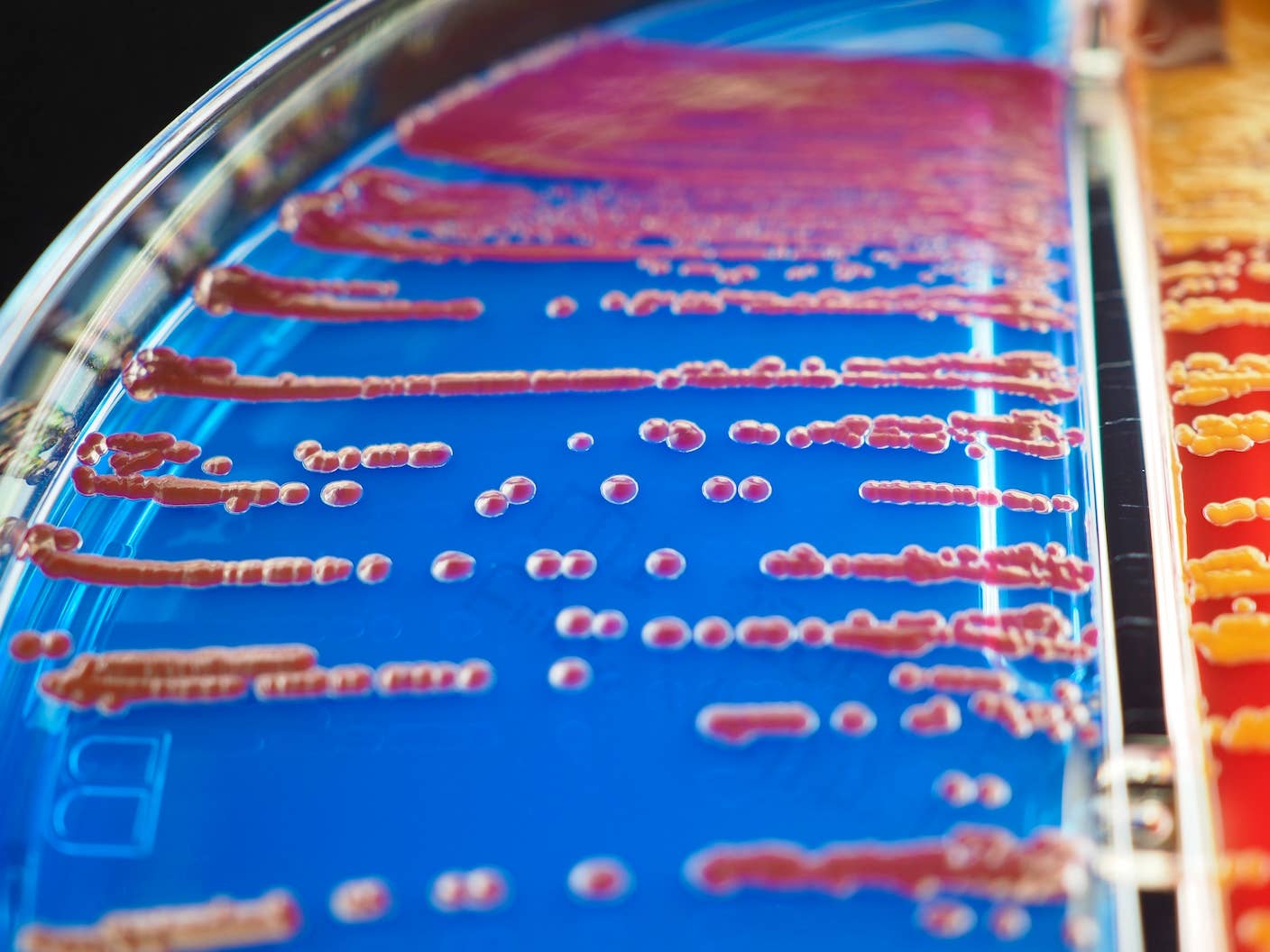

Disarmament law provides precedent for requiring human control over weapons. For example, the international community adopted the widely accepted treaties banning biological weapons, chemical weapons and landmines in large part because of humans’ inability to exercise adequate control over their effects. Countries should now prohibit fully autonomous weapons, which would pose an equal or greater humanitarian risk.

At the December review conference, countries that have joined the Convention on Conventional Weapons should take concrete steps toward that goal. They should initiate negotiations of a new international agreement to address fully autonomous weapons, moving beyond general expressions of concern to specific action. They should set aside enough time in 2017 — at least several weeks — for substantive deliberations.

While the process of creating international law is notoriously slow, countries can move quickly to address the threats of fully autonomous weapons. They should seize the opportunity presented by the review conference because the alternative is unacceptable: Allowing technology to outpace diplomacy would produce dire and unparalleled humanitarian consequences.

Bonnie Docherty, Lecturer on Law, Senior Clinical Instructor at Harvard Law School's International Human Rights Clinic, Harvard University

This article was originally published on The Conversation.

Bonnie Docherty is a Lecturer on Law and Senior Clinical Instructor at the International Human Rights Clinic at Harvard Law School. She is also a Senior Researcher in the Arms Division of Human Rights Watch. She is an expert on disarmament and international humanitarian law, particularly involving civilian protection during armed conflict. In recent years, she has authored several seminal reports in support of civil society’s campaign to ban fully autonomous weapons, also known as “killer robots.” Since 2001, she has played an active role, as both lawyer and field researcher, in the campaign against cluster munitions. Docherty participated in negotiations for the Convention on Cluster Munitions and has promoted strong implementation of the convention since its adoption in 2008. Her in-depth field investigations of cluster munition use in Afghanistan, Iraq, Lebanon, and Georgia helped galvanize international opposition to the weapons. Docherty has documented the broader effects of armed conflict on civilians in several other countries and also done research and advocacy related to incendiary weapons. Docherty received her A.B. from Harvard University and her J.D. from Harvard Law School.
