Atom Computing Says Its New Quantum Computer Has Over 1,000 Qubits


The scale of quantum computers is growing quickly. In 2022, IBM took the top spot with its 433-qubit Osprey chip. Yesterday, Atom Computing announced they've one-upped IBM with a 1,180-qubit neutral atom quantum computer.

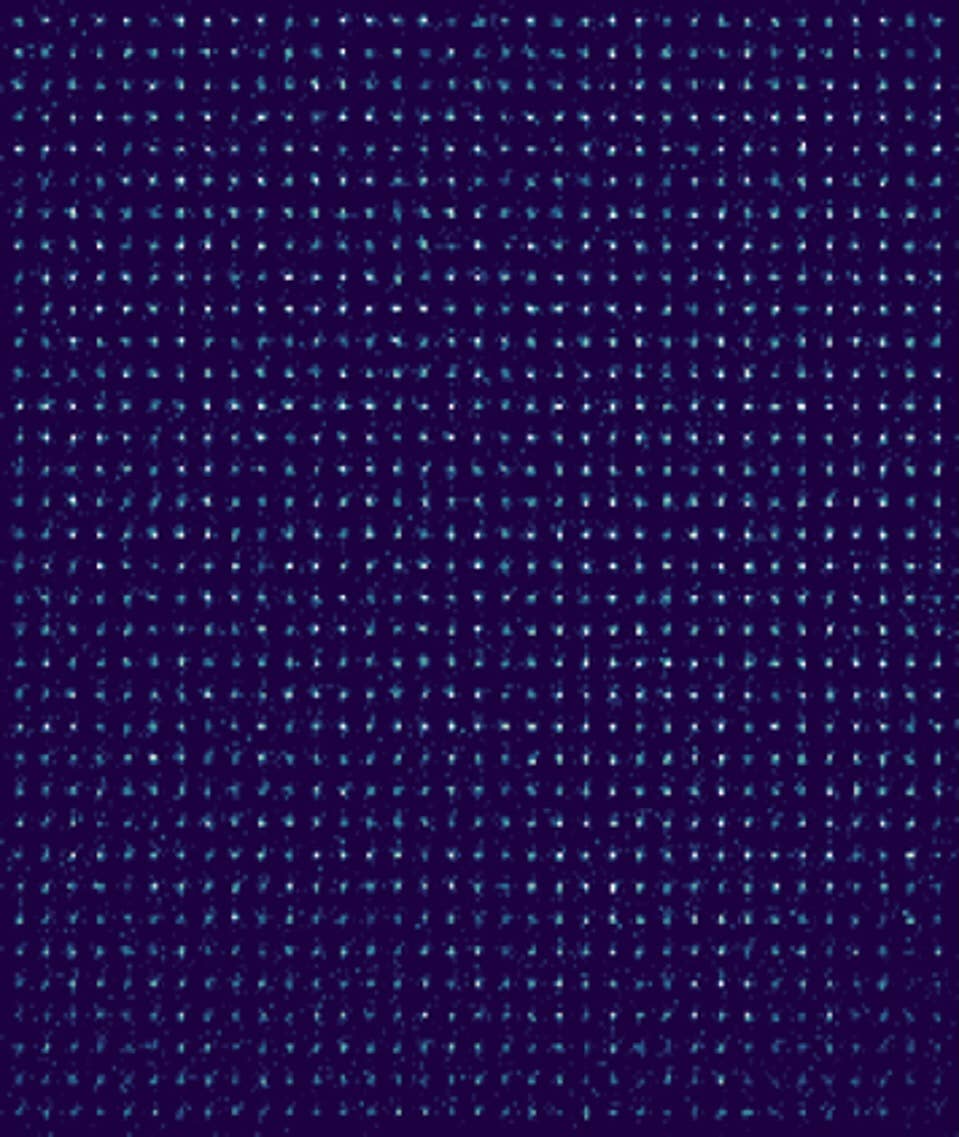

The new machine runs on a tiny grid of atoms held in place and manipulated by lasers in a vacuum chamber. The company's first 100-qubit prototype was a 10-by-10 grid of strontium atoms. The new system is a 35-by-35 grid of ytterbium atoms (shown above). (The machine has space for 1,225 atoms, but Atom has so far run tests with 1,180.)

Quantum computing researchers are working on a range of qubits—the quantum equivalent of bits represented by transistors in traditional computing—including tiny superconducting loops of wire (Google and IBM), trapped ions (IonQ), and photons, among others. But Atom Computing and other companies, like QuEra, believe neutral atoms—that is, atoms with no electric charge—have greater potential to scale.

This is because neutral atoms can maintain their quantum state longer, and they're naturally abundant and identical. Superconducting qubits are more susceptible to noise and manufacturing flaws. Neutral atoms can also be packed more tightly into the same space as they have no charge that might interfere with neighbors and can be controlled wirelessly. And neutral atoms allow for a room-temperature set-up, as opposed to the near-absolute zero temperatures required by other quantum computers.

The company may be onto something. They've now increased the number of qubits in their machine by an order of magnitude in just two years, and believe they can go further. In a video explaining the technology, Atom CEO Rob Hays says they see "a path to scale to millions of qubits in less than a cubic centimeter."

"We think that the amount of challenge we had to face to go from 100 to 1,000 is probably significantly higher than the amount of challenges we're gonna face when going to whatever we want to go to next—10,000, 100,000," Atom cofounder and CTO Ben Bloom told Ars Technica.

But scale isn't everything.

Quantum computers are extremely finicky. Qubits can be knocked out of quantum states by stray magnetic fields or gas particles. The more this happens, the less reliable the calculations. Whereas scaling got a lot of attention a few years ago, the focus has shifted to error-correction in service of scale. Indeed, Atom Computing's new computer is bigger, but not necessarily more powerful. The whole thing can't yet be used to run a single calculation, for example, due to the accumulation of errors as the qubit count rises.
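To make the accumulation of errors concrete, here is a rough back-of-the-envelope sketch. It assumes a deliberately simplified model in which each qubit independently survives a run without error with the same probability. The 0.995 figure and the function name `run_success_probability` are hypothetical illustrations, not numbers or methods reported by Atom Computing.

```python
def run_success_probability(per_qubit_success: float, num_qubits: int) -> float:
    """Chance that no qubit errs during a run, assuming independent,
    identical per-qubit success probabilities (a simplifying assumption)."""
    if not 0.0 <= per_qubit_success <= 1.0:
        raise ValueError("per_qubit_success must be between 0 and 1")
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return per_qubit_success ** num_qubits


if __name__ == "__main__":
    hypothetical_success = 0.995  # assumed value, for illustration only
    for n in (50, 100, 1180):
        p = run_success_probability(hypothetical_success, n)
        print(f"{n:>5} qubits: chance of an error-free run = {p:.4f}")
```

Under this toy model, the chance of an error-free run shrinks exponentially with qubit count, which is one way to see why a 50-qubit job can be feasible on a machine that cannot yet run a single calculation across all of its qubits.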

There has been recent movement on this front, however. Earlier this year, the company demonstrated the ability to check for errors mid-calculation and potentially fix those errors without disturbing the calculation itself. They also need to keep errors to a minimum overall by increasing the fidelity of their qubits. Recent papers, each showing encouraging progress in low-error approaches to neutral atom quantum computing, give fresh life to the endeavor. Reducing errors may be, in part, an engineering problem that can be solved with better equipment and design.

"The thing that has held back neutral atoms, until those papers have been published, have just been all the classical stuff we use to control the neutral atoms," Bloom said. "And what that has essentially shown is that if you can work on the classical stuff—work with engineering firms, work with laser manufacturers (which is something we're doing)—you can actually push down all that noise. And now all of a sudden, you're left with this incredibly, incredibly pure quantum system."

Beyond error-correction work in neutral atom quantum computers, IBM announced this year that it has developed error-correction codes for quantum computing that could reduce the number of qubits needed by an order of magnitude.

Still, even with error-correction, large-scale, fault-tolerant quantum computers will need hundreds of thousands or millions of physical qubits. Other challenges exist too, such as how long it takes to move and entangle increasingly large numbers of atoms. Understanding and solving these challenges is why Atom Computing is chasing scale at the same time as error-correction.

In the meantime, the new machine can be used on smaller problems. Bloom said if a customer is interested in running a 50-qubit algorithm—the company is aiming to offer the computer to partners next year—they'd run it multiple times using the whole computer to arrive at a reliable answer more quickly.
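One way to read Bloom's example is that several copies of a small algorithm could run side by side on the larger array, so the repetitions needed for a reliable answer finish in fewer rounds. The sketch below only does that arithmetic. It assumes copies can be tiled across the array with no overhead qubits, which the article does not confirm, and the function names and the 1,000-repetition target are hypothetical.

```python
import math


def parallel_copies(total_qubits: int, algorithm_qubits: int) -> int:
    """How many copies of an algorithm fit on the machine at once,
    assuming no qubits are lost to spacing or control overhead."""
    if algorithm_qubits <= 0:
        raise ValueError("algorithm_qubits must be positive")
    return total_qubits // algorithm_qubits


def rounds_needed(repetitions: int, copies_per_round: int) -> int:
    """Rounds required to collect the requested number of repetitions."""
    if copies_per_round <= 0:
        raise ValueError("copies_per_round must be positive")
    return math.ceil(repetitions / copies_per_round)


if __name__ == "__main__":
    copies = parallel_copies(1180, 50)  # qubit counts taken from the article
    target = 1000                       # hypothetical number of repetitions
    print(f"copies per round: {copies}")
    print(f"rounds for {target} repetitions: {rounds_needed(target, copies)}")
    print(f"rounds if run one copy at a time: {rounds_needed(target, 1)}")
```

With the article's figures, 23 copies of a 50-qubit algorithm fit in 1,180 qubits, leaving 30 qubits unused in this simplified tiling.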

In a field of giants like Google and IBM, it's impressive a startup has scaled their machines so quickly. But Atom Computing's 1,000-qubit mark isn't likely to stand alone for long. IBM is planning to complete its 1,121-qubit Condor chip later this year. The company is also pursuing a modular approach—not unlike the multi-chip processors common in laptops and phones—where scale is achieved by linking many smaller chips.

We're still in the nascent stages of quantum computing. The machines are useful for research and experimentation but not yet for practical problems. It's encouraging that multiple approaches are making progress in scale and error correction, two of the field's grand challenges. If that momentum continues in the coming years, one of these machines may finally solve the first useful problem that no traditional computer ever could.

Image Credit: Atom Computing

Jason is editorial director at SingularityHub. He researched and wrote about finance and economics before moving on to science and technology. He's curious about pretty much everything, but especially loves learning about and sharing big ideas and advances in artificial intelligence, computing, robotics, biotech, neuroscience, and space.
