How to Virtually ‘Possess’ Another Person’s Body Using Oculus Rift and Kinect

Virtual reality can put you in another world, but what about another body? Yifei Chai, a student at Imperial College London, is using the latest in virtual reality and 3D modeling hardware to virtually “possess” another person.

How does it work? One individual dons a head-mounted, twin-angle camera and attaches electrical stimulators to their body. Meanwhile, another person wears an Oculus Rift virtual reality headset that streams footage from their friend's camera.

A Microsoft Kinect 3D sensor tracks the Rift wearer's body. The system shocks the appropriate muscles to force the possessed person to lift or lower their arms. The result? The individual wearing the Rift looks down and sees another body, a body that moves when they move—giving the illusion of inhabiting another's body.
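
For the curious, the control loop described above can be roughed out in a few lines of Python. This is only an illustrative sketch, not Chai's actual code: the function names, the y-up coordinate convention, and the 10-degree deadband are all assumptions made for the example. It takes tracked shoulder and wrist positions, works out how far the arm is raised, and decides whether the surrogate's arm should be pushed up, let down, or left alone.

```python
import math

# Hypothetical threshold (degrees): smaller differences are ignored
# so the surrogate is not stimulated on every tiny twitch.
DEADBAND_DEG = 10.0


def arm_elevation_deg(shoulder, wrist):
    """Angle of the shoulder-to-wrist vector measured from straight down.

    0 = arm hanging at the side, 90 = horizontal, 180 = straight up.
    Points are (x, y, z) tuples with y pointing up (an assumed convention).
    """
    dx, dy, dz = (w - s for w, s in zip(wrist, shoulder))
    length = math.sqrt(dx * dx + dy * dy + dz * dz)
    if length == 0:
        raise ValueError("shoulder and wrist coincide")
    # Cosine of the angle between the arm vector and the down vector (0, -1, 0).
    cos_angle = max(-1.0, min(1.0, -dy / length))
    return math.degrees(math.acos(cos_angle))


def stimulation_command(controller_deg, surrogate_deg, deadband=DEADBAND_DEG):
    """Pick a coarse command that moves the surrogate's arm toward the controller's."""
    error = controller_deg - surrogate_deg
    if error > deadband:
        return "raise"
    if error < -deadband:
        return "lower"
    return "hold"


if __name__ == "__main__":
    # Controller holds an arm out horizontally; surrogate's arm hangs down.
    controller = arm_elevation_deg((0.0, 0.0, 0.0), (1.0, 0.0, 0.0))
    surrogate = arm_elevation_deg((0.0, 0.0, 0.0), (0.0, -1.0, 0.0))
    print(stimulation_command(controller, surrogate))
```

A real system would have to map each command onto specific muscles and stimulation intensities, which is well beyond this sketch.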

The system is a rough prototype. There’s a noticeable delay between action and reaction, which lessens the illusion’s effectiveness (though it’s evidently still pretty spooky), and there’s a limit to how finely the possessor can control their friend.

Currently, Chai’s system stimulates 34 arm and shoulder muscles. He admits the project has drawn far more attention than he expected, and he hopes to improve it with high-definition versions of the Oculus Rift and Kinect that can detect subtler movements.

Beyond offering a fundamentally novel experience, Chai thinks virtual reality systems like his might be used to encourage empathy by literally putting us in someone else’s shoes. This is akin to donning an age simulation suit, which saddles youthful users with a range of age-related maladies from joint stiffness to impaired vision.

The idea is we’re more patient and understanding with people facing challenges we ourselves have experienced. A care worker, for example, might be less apt to become frustrated with a patient after experiencing their challenges firsthand.

Virtual reality might also prove a useful therapy—a way to safely experience uncomfortable situations to ease anxiety and build habits for real world interaction. Training away an extreme fear of public speaking, for example, might include a program of standing and addressing virtual audiences.

For all these applications, the more immersive and realistic, the better. However, not all of them necessarily require control of another person’s movements—and they might be just as effective (and simpler) using digital avatars instead of real people.

That said, I couldn’t watch the video without getting hypothetical.

Chai’s system only allows for the translation of coarse, delayed movement. But what if it could translate fine, detailed movement in real time? Such a futuristic system would be more than just a cool or therapeutic experience. It would be a way to transport skills anywhere, anytime at very nearly light speed.

Currently, a number of hospitals are using telepresence robots (basically a screen and camera on a robotic mount) to allow medical specialists to video chat live with patients, nurses, and doctors hundreds or thousands of miles away. This is a way to more efficiently spread expertise and talent through the system.

Now imagine having the ability to transport the hands of a top surgeon at the Mayo Clinic to a field hospital in Africa or a refugee camp in Lebanon. Geography would no longer limit those in need to the doctors nearby (often in short supply).

Virtual surgery could allow folks to volunteer their time without needing to travel to a war zone or move to a refugee camp full time.

But for such applications, it doesn't make sense to use human surrogates. You’d need to embed stimulators body-wide to even approach decent control of a human. A robot, on the other hand, is designed from the ground up for external control.

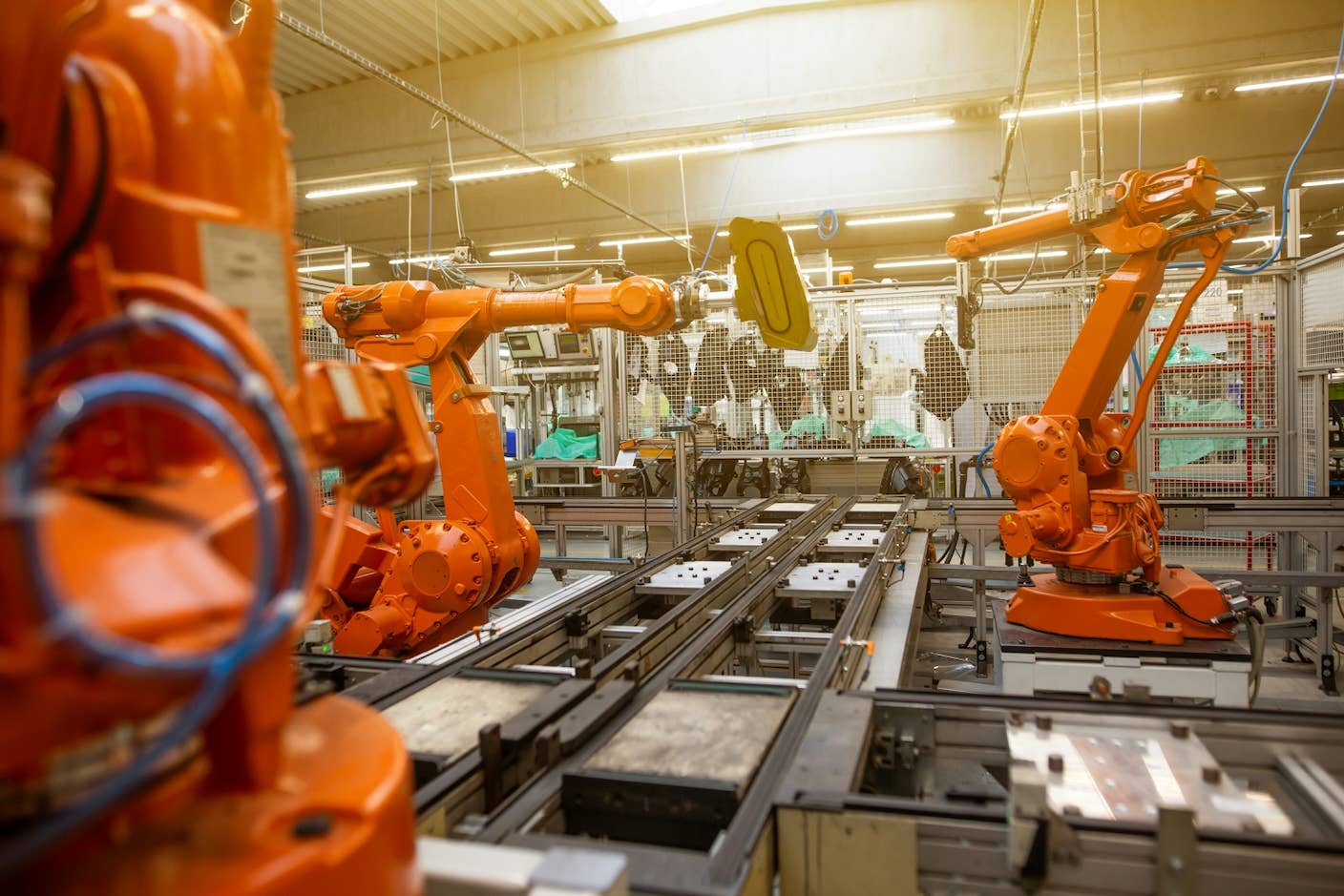

And beyond medical applications, we could remotely control robotic surrogates in factories or on construction sites. Heck, in the event of alien invasion, maybe we'd even hook up to giant mechs to do battle on behalf of all humanity. But I digress.

Robots are still a long way from nimbly navigating the real world. And there are other difficult problems beyond mere movement and control. The Da Vinci surgical robot, for example, allows surgeons to perform surgery at a short distance, but it can't yet translate fine touch sensations. Ideally, we’d translate movement, visuals, and sensation.

Will we control human or robot surrogates using virtual reality? Maybe not. The larger point, however, is the technology will likely find a broad range of applications beyond gaming and entertainment—many of which we've yet to fully imagine.

Image Credit: New Scientist/YouTube; BagoGames/Flickr

Jason is editorial director at SingularityHub. He researched and wrote about finance and economics before moving on to science and technology. He's curious about pretty much everything, but especially loves learning about and sharing big ideas and advances in artificial intelligence, computing, robotics, biotech, neuroscience, and space.
