NPR Covers the Singularity – As The Biggest Threat to Humanity! (audio)


Everyone has their own take on the concept of the Singularity. NPR's is a little apocalyptic. One of National Public Radio's most popular programs, All Things Considered, recently aired a short segment on the birth of human-level artificial intelligence and what it might mean for the world. It was great to see a major media outlet give some airtime to the Technological Singularity, but I was a touch disappointed that they painted the future in such dire tones. With clips from 2001: A Space Odyssey and Terminator, NPR focused on how advanced forms of AI might be the 'last invention' humans ever produce. They spent most of their time with the Singularity Institute for Artificial Intelligence (SIAI), but made room for Eric Horvitz of Microsoft Research and the Association for the Advancement of Artificial Intelligence, as well as Peter Thiel of PayPal (a noted tech enthusiast). You can listen to the eight-minute piece in the audio clip below. NPR's take on the topic may show how fear and suspicion still dominate the public perception of artificial intelligence.

NPR's Martin Kaste spent most of his airtime with Keefe Roedersheimer, a research fellow at SIAI, discussing his goal of understanding how artificial intelligence will progress in the years ahead. The upshot of that conversation: humanity has a lot to fear from AIs that become self-aware and decide that humanity stands in their way. This notion that it will be us versus the machines is reinforced by Kaste's time with Eliezer Yudkowsky, one of the co-founders of SIAI, whom he met during Peter Thiel's recent fundraising dinner for advanced technologies. Yudkowsky is quoted to show that even computers that are indifferent towards humanity could be detrimental to our survival: "While it may not hate you, you're made of atoms that it can use for something else. So it's probably not a good thing to build that particular kind of A.I." Even after Kaste turns to Eric Horvitz for a reality check at the end of the segment, I get the feeling that NPR is painting the Singularity as a possible (and very real?) end for humanity.

A complete transcript of the following segment from All Things Considered can be found on NPR.org.

Perhaps it's just my interpretation of the broadcast, but I think Kaste's take on the Singularity is more alarmist than helpful. I get the feeling he's saying either "humanity might be doomed, and there's only a small ragtag bunch of geniuses trying to save us all," or possibly, "look at these nuts who think that computers are going to kill us." The former is probably too hyperbolic; the latter is very unflattering to SIAI. (He does begin and end by pointing out that SIAI operates out of an apartment in Berkeley.)

I have no argument with the view that the creation of human-level or higher artificial intelligence poses some serious risks. But I'm pretty sure that the most immediate dangers will arise from how humans use AI against one another. Cyber warfare attacks are already here. They can cause millions of dollars in damages, seriously harm industrial, military, and government projects, and are probably being pursued by the most powerful nations around the world. As artificial intelligence improves and is put to these uses, the attacks (and defenses) will become more sophisticated. I'm sure there will be similar threats in other sectors (finance, personal information, etc.). Long before we face a self-aware AI run amok, we'll have dealt with humans run amok who use AIs as weapons.

In that light, I think the NPR coverage is a little naive. The Technological Singularity is an event defined by exponential growth. If and when it arrives, it will be an enormous change in a short amount of time. Yet it will not be completely unexpected. Even institutions that have no clear interest in the Singularity will, through the ordinary workings of their industries, generate tools and knowledge that help humanity deal with the growth of artificial intelligence. Google, Microsoft, IBM, and others all work with narrow AI in various forms. As they continue to develop these possible precursors to true machine sentience, they will also develop ways to use them efficiently, because that is what will be profitable.


That's not to say we don't need people taking the ultra-long view and considering the small (but potentially deadliest) risks. We must plan for the negative as well as the positive outcomes that could arise from accelerating technologies like AI. Yet such plans are an exercise in prudence, not a prophecy of inevitable destruction. And while it is not my place to defend SIAI, I do feel that their stance on the risks of artificial intelligence is a little more nuanced than NPR's piece presented it.

But, you know, it was less than eight minutes on the radio. What do I expect?

As the concept of the Singularity slowly makes its way into the mainstream, I'm sure it will be cast in many different lights. "Rapture of the nerds," "robopocalypse," "grey goo gone bad": most of the public perceptions out there so far aren't especially flattering to those who take the concept seriously. Yet even if a major news source like NPR uses some tired rhetoric in its coverage, getting people to think about the possibilities of accelerating technology and/or exponential growth in intelligence is a good thing. I corresponded with Michael Vassar, President of the Singularity Institute, and he seemed fairly pleased with the coverage: "I'm glad to see interest in our institute by a mainstream media outlet with such an intellectual and thoughtful audience. I hope that this leads to more people becoming aware of our work."

Any publicity is good publicity, right?

Source: NPR

Related Articles

An AI Solution to an 80‑Year‑Old Problem Has Shocked Mathematicians

Photosynthetic Drops Soothe Dry Eyes With Sunlight

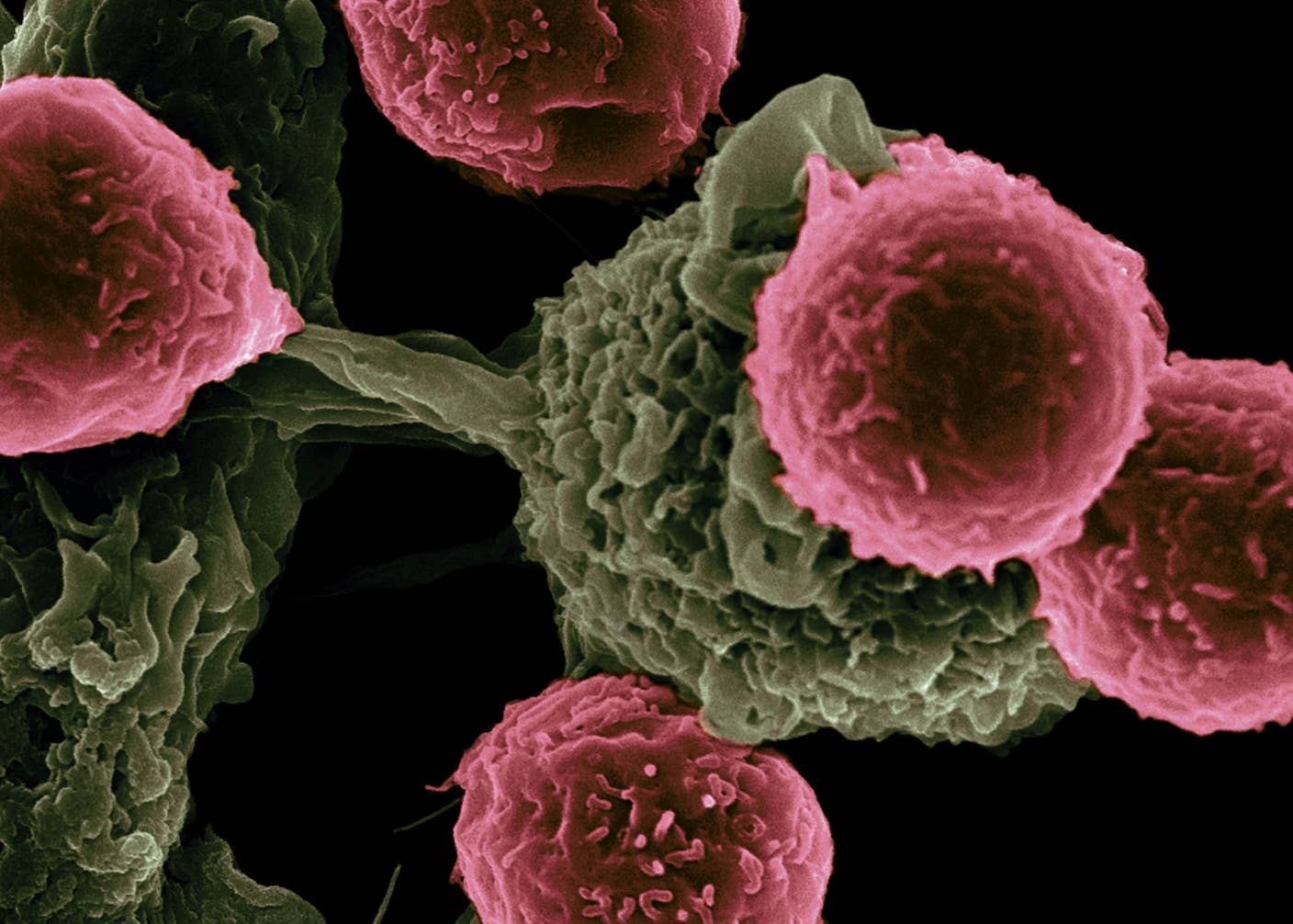

A Revolutionary Cancer Treatment Could Transform Autoimmune Disease

What we’re reading