About every twenty-four months, the world’s computer chip manufacturers manage to double the number of transistors they can cram onto an inexpensive integrated circuit. That steady doubling, known as Moore’s Law, has held true for decades, and while scientists have had to resort to ever more creative methods to keep it going, Intel has shown that such exponential growth will last for at least a few more years. Today Intel, co-founded by Gordon Moore himself, announced that it has commercialized the world’s first 3D transistor, known as TriGate. The 22nm transistor performs better and uses less energy than the current cutting-edge 32nm transistor. The first processor using this 22nm 3D technology will be called Ivy Bridge, and it is slated for high-volume production by the end of 2011. Right on time for Moore’s Law. Watch Intel Senior Fellow Mark Bohr explain TriGate in the video below, followed by a look at how Ivy Bridge performs in laptops, servers, and desktops. Moore’s Law has continued to grant us faster, better, and cheaper computers for more than forty years, and for the time being it looks like nothing can stop it.

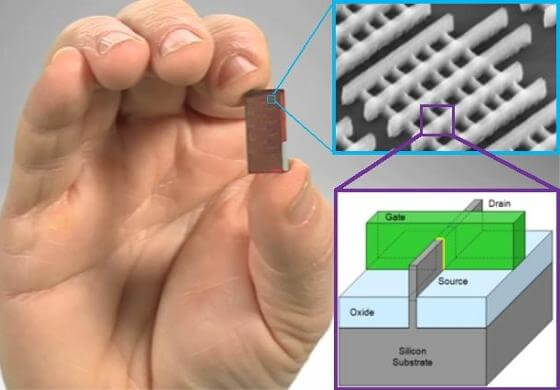

Intel first announced the creation of this 3D transistor back in 2002. It’s taken nearly a decade to move it from the lab to the fabrication plant. What makes this new technology worth that effort? Better performance at lower power. At low voltages, the 22nm TriGate performs up to 37% better than the current 32nm transistors. Or, at the same performance, TriGate needs less than half the power of its 32nm sibling. TriGate performs better because it’s much better at turning off and on. All transistors are essentially switches, and TriGate’s big innovation is taking the controls for that switch and pulling them into the third dimension. Mark Bohr explains more in the video below.

As the nano-sized Bohr demonstrated, Intel’s new 22nm transistor has an extended fin that rises off the 2D plane. By wrapping around this fin on three sides, the gate gains better control over the transistor, helping it perform better. So there you have it: Intel improved transistors with the same technology that made cars cool in the ’50s. Long live the fin!

For those who, like me, hear ‘3D transistor’ and mistakenly think “ooh, the computer chip is going to turn into a computer cube,” the explanation of TriGate can be a bit disappointing. After all, it’s not that electric current (or information, if you like) is flowing in three dimensions; it’s that the TriGate uses a 3D bump to help it better send current in 2D space. Like those cars in the 1950s: the fin may have extended into the sky, but the car itself still drove around on the ground. So too with the TriGate. It’s using three dimensions, but for all (architectural) intents and purposes it’s a planar chip.

But who cares, right? I mean, when the Ivy Bridge processors come out they’ll perform better, use less energy, and help push down the price curve for computer performance. Oh man, I can’t wait to see how amazing laptops, servers, and desktops with Ivy Bridge/TriGate technology will be. Cue up that video:

And now cue up the sound of sad trombones. Those demonstrations for Ivy Bridge were underwhelming. Sure, the graphics and processing speed seemed nice, but they didn’t blow my mind. Shouldn’t we be seeing awe-inspiring leaps of improvement with this new technology?

That expectation is why Moore’s Law is a double-edged sword for hardware innovators like Intel. Computers get smaller and faster every year. In a few years, a desktop like the one you buy today will cost half as much and perform better. Your iPhone 5 will make your iPhone 3 (or 4) look like a piece of junk. We’ve grown used to the exponential curve. In fact, we’re so immune to the excitement of that curve that we ignore its implications. 37% better performance in each generation is wonderful. Imagine if your investments allowed your bank account to increase by 37% every two years. Cutting power in half for the same performance is astounding. Think of your car being twice as fuel efficient. There are really great reasons to be excited by the commercialization of the 22nm TriGate. As Mark Bohr said in Intel’s announcement:

“The performance gains and power savings of Intel’s unique 3-D Tri-Gate transistors are like nothing we’ve seen before. This milestone is going further than simply keeping up with Moore’s Law. The low-voltage and low-power benefits far exceed what we typically see from one process generation to the next. It will give product designers the flexibility to make current devices smarter and wholly new ones possible. We believe this breakthrough will extend Intel’s lead even further over the rest of the semiconductor industry.” – Mark Bohr, Intel Senior Fellow
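To put the bank account analogy in numbers, here is a minimal Python sketch of how a fixed gain per generation compounds. The function name `compound_growth` and the five-generation (roughly one decade) horizon are my own illustrative assumptions, and treating Intel’s “up to 37%” figure as a steady per-generation rate is a simplification, not something Intel claims.

```python
def compound_growth(gain_per_generation: float, generations: int) -> float:
    """Return the total multiplier after compounding a fixed gain each generation.

    gain_per_generation is a fraction (0.37 means +37%, 1.0 means doubling).
    """
    return (1.0 + gain_per_generation) ** generations


# Hypothetical horizon: five two-year generations, i.e. about a decade.
GENERATIONS = 5

# The article's analogy: a bank account growing 37% every two years.
bank_multiplier = compound_growth(0.37, GENERATIONS)

# Moore's Law style doubling of transistor count every two years.
doubling_multiplier = compound_growth(1.0, GENERATIONS)

print(f"37% per generation for {GENERATIONS} generations: x{bank_multiplier:.2f}")
print(f"Doubling per generation for {GENERATIONS} generations: x{doubling_multiplier:.0f}")
```

Under those assumptions, the 37% account ends the decade at roughly 4.8 times its starting balance, while a quantity that doubles each generation ends up 32 times larger.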

From one single generation to the next in computer chips, the doubling seems nice but hardly world-changing. As you compound those generations, however, Moore’s Law is as important as a comet ready to hit the Earth. Not everyone believes we’ll be able to keep doubling transistors on integrated circuits every two years. But we have done exactly that since well before Gordon Moore first introduced the concept to the world in 1965. Intel’s already working on 14nm and smaller transistors in its facilities in Oregon and Arizona. Moore’s Law looks like it has at least a decade more left in it. The big question this brings to my mind is: does this steady exponential growth guarantee that we’re headed towards the Singularity?

As much as Singularitarians rely upon Moore’s Law to fuel our visions of the future, it’s not some inescapable truth of the universe. Producing ever smaller transistors is a job for thousands of engineers around the world, spending billions of dollars in research. Each step towards increasing computer processor performance per dollar requires innovation, and those innovations take time and effort to perfect. TriGate is a great example – millions of dollars and a decade of preparation for its eventual launch. Moore’s Law may continue indefinitely, but it will rely upon the creativity and resilience of many developers at the top of their game. Can they keep it up? Can we keep pushing computers to become faster, better, and more efficient so they double in performance every two years?

Well, I wouldn’t bet against the Intel team. Here’s a little victory video they produced when they succeeded in upholding Moore’s Law back in 2007:

Even if Moore’s Law has a lifespan attached to it, it’s not the only engine for exponential growth. We should remember that computer production and internet adoption are growing in new markets all over the world. We’ve seen the power of distributed computing and crowdsourcing. If Moore’s Law fails, can these trends pick up the slack of doubling our effective processing power? Perhaps, but I like to think of another scenario: What happens if Moore’s Law continues even as our collective computational might is bolstered by cloud computing and a greater interconnectivity of humans through the internet? Exponentials on top of exponentials, my friends.

Today’s a good day for Intel, and a good day for Moore’s Law... but tomorrow could make today look like a snail’s race. I can’t wait to see what happens next.

[image credits: Intel (some modified)]

[source: Intel]