Group Set To Sequence 1000 Genomes By The End Of The Year

When the Human Genome Project got underway in 1990, it was expected to take 15 years to sequence the more than 3 billion chemical base pairs that spell out our genetic code. In true Moore’s Law tradition, the emergence of faster and more efficient sequencing technologies along the way led to the Project’s early completion in 2003. Today, 22 years after scientists first committed to the audacious goal of sequencing the genome, the next generation of sequencers is setting its sights much higher.

About a thousand times higher.

The 1000 Genomes Project, as its name suggests, is a joint public-private effort to sequence 1000 genomes. Begun in 2008, the Project’s main goal is to create an “extensive catalog of human genetic variation that will support future medical research studies.” The 1000 Genomes Consortium is headed by the NIH’s National Human Genome Research Institute, which in turn is collaborating with research groups in the US, UK, China and Germany.

That might not sound like much. Thanks in large part to companies like Silicon Valley startup Complete Genomics, perhaps as many as 30,000 complete genomes around the world have already been sequenced. But what is unique about the 1000 Genomes Project is that its genomes will be made available to the public for free, and stored in a place where the world can access the data easily and interact with it.

The original effort to sequence the human genome, while a triumph, is of limited use for linking genetic sequence to disease. Because it involved DNA from just a small number of individuals (the fifth personal genome, that of Korean researcher Seong-Jin Kim, was completed only in 2008), the data cannot be used to draw correlations between genetic variations and diseases. The 1000 Genomes Consortium hopes that its sample size will be large enough to catalog all genetic variants that occur in at least 1 percent of the population.
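
To see why sample size matters for catching a 1 percent variant, here is a rough back-of-the-envelope sketch. It is not the Consortium's own method. It assumes each person carries two independent copies of the genome and that any copy present in the sample is actually detected, which real sequencing does not guarantee. The function name `detection_probability` is made up for illustration.

```python
def detection_probability(allele_frequency: float, genomes: int) -> float:
    """Chance that a variant shows up at least once among the sampled genomes.

    Simplifying assumptions (not from the article): two independent genome
    copies per person, and perfect detection of every copy in the sample.
    """
    chromosomes = 2 * genomes
    # Probability that every sampled copy lacks the variant, subtracted from 1.
    return 1.0 - (1.0 - allele_frequency) ** chromosomes


# Compare a handful of personal genomes with larger sample sizes.
for n in (5, 100, 1000):
    print(f"{n:>5} genomes: {detection_probability(0.01, n):.4f}")
```

Under these assumptions, five genomes would turn up a given 1 percent variant only about one time in ten, while 1000 genomes would almost certainly include it.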

Among the 3 billion base pairs contained in the human genome, scientists have already identified more than 1.4 million single nucleotide polymorphisms, or SNPs (pronounced “snips”). SNPs are single-base variations that differ between people. By characterizing which people have which SNPs, scientists hope to identify the SNPs that predispose people to diseases such as cancer or heart disease. Smartly, the Consortium is not limiting itself to any particular population, which might bias the genetic variability to disproportionately represent that population. The equivalent of 1,000 genomes will actually be obtained from the incomplete sequences of 2,661 people from 26 different “populations” around the world.

Just as advances in sequencing technologies throughout the ’90s galvanized the Human Genome Project, advances in the last decade have put 1000 genomes within reach. So-called “next-gen” sequencing platforms reduced the cost of DNA sequencing by over two orders of magnitude in just a three-year span. The lowered cost meant that individual labs could get in on the sequencing act and contribute to the kind of large-scale sequencing that had previously been the domain of major genome centers. And not only was more data being generated, but techniques to verify the quality of the sequences also improved significantly.

Sequencing technology will undoubtedly continue to improve, and the next “next-gen” sequencing platforms will allow us to sequence even faster and more cheaply. But the current swell in DNA data has put pressure on another technology to keep pace: data storage and handling.

The amount of data generated from DNA sequencing is prodigious. The Project has already amassed over 200 terabytes of data. That’s equivalent to 30,000 standard DVDs or 16 million file cabinets filled with text. According to the NIH, it is the largest set of data on human genetic variation. Not to be overburdened by a few hundred terabytes, Amazon announced last week that the 1000 Genomes Project data is now stored on its Amazon Web Services cloud and is publicly available. It currently contains sequence data from about 1,700 people. Sequencing the remaining 900 or so samples is expected to be completed by the end of the year.

You can find information on how to access the data here.

As if to answer the call for improved data handling tools, the Obama Administration last week launched its “Big Data Research and Development Initiative,” which spreads $200 million across six federal science agencies to fund R&D of technologies that “access, store, visualize, and analyze” enormous sets of data. The 1000 Genomes Project is part of the White House Initiative.

The National Human Genome Research Institute, rightly so, calls the Human Genome Project “one of the great feats of human exploration in history – an inward voyage of discovery rather than an outward exploration of the planet or the cosmos.” For the first time we were able to map our entire genome from end to end. Our estimate of total genes was whittled down to between 20,000 and 25,000, we have a better understanding of our relatedness to other species, and we’ve discovered gene mutations associated with breast cancer, muscle disease, deafness, and other illnesses. Who knows what the 1000 Genomes Project has yet to reveal about our DNA and ourselves? We’ve painted a digital portrait of our DNA; now we begin to add the finer strokes.

[image credits: National Geographic and DNA Sequencing Service]

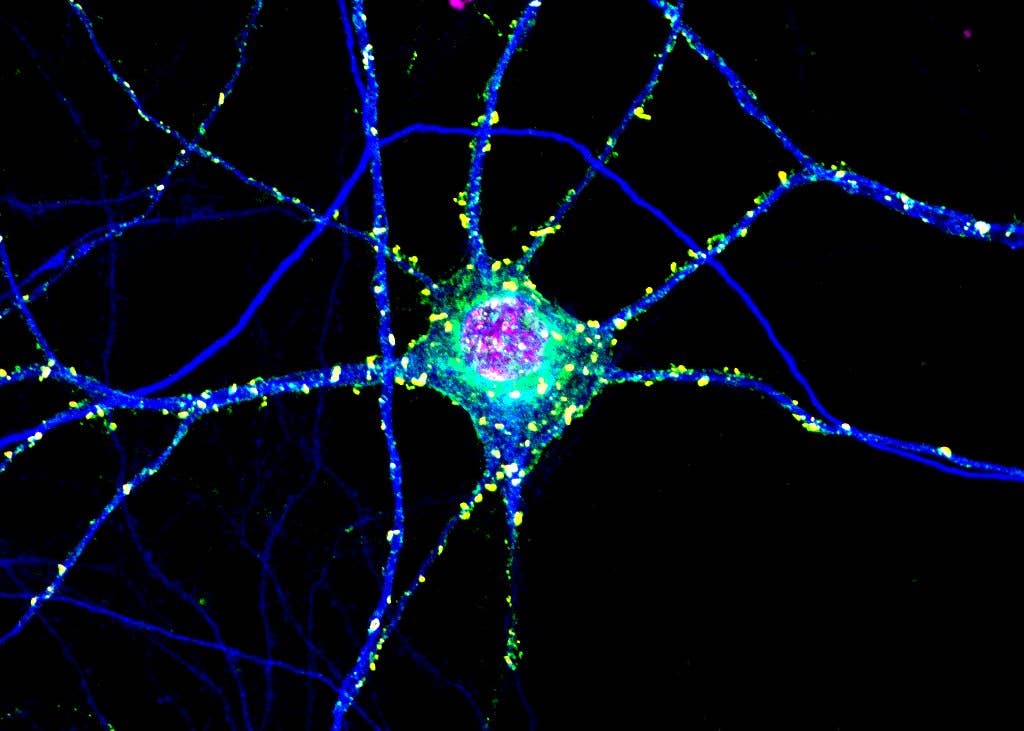

Peter Murray was born in Boston in 1973. He earned a PhD in neuroscience at the University of Maryland, Baltimore studying gene expression in the neocortex. Following his dissertation work he spent three years as a post-doctoral fellow at the same university studying brain mechanisms of pain and motor control. He completed a collection of short stories in 2010 and has been writing for Singularity Hub since March 2011.
