A Look at IBM’s Watson 5 Years After Its Breathtaking Jeopardy Debut

The year was 2012, and IBM’s AI software Watson was in the midst of its heyday.

Watson had beaten two of Jeopardy’s all-time champions a year earlier, in 2011, and the world was stunned. It was the first widespread and successful demonstration of a natural language processing computer of its class. Combined with the popularity of Jeopardy, the win made Watson an immediate mainstream icon.

Later in 2012, IBM announced one of the first major practical partnerships for Watson—a Cleveland Clinic collaboration to bring the system into medical training.

The hope was that Watson, by synthesizing huge amounts of data and generating evidence-based hypotheses, would help clinicians and students diagnose disease more accurately and choose better treatment plans for patients.

Back then, we wrote an article titled "Paging Dr. Watson" that outlined IBM’s plans to train Watson in medicine and looked at some of IBM’s ambitions for the technology’s future. Now, four years later, we thought we’d see what Watson has been up to since then. Are we where we thought we’d be? Let’s take a look.

We’re paging Dr. Watson…again.

It’s been a long road for IBM’s Watson

Under the hood, Watson was (and still is) powered by DeepQA software. Put simply, DeepQA is the complex software architecture that analyzes, reasons about, and answers the content fed into Watson. In 2012, the system ran on an 80 teraFLOP/s computer—a machine capable of 80,000,000,000,000 operations per second—and was located in Yorktown Heights, New York, where its servers filled a small room.

IBM envisioned Watson becoming “a supercharged Siri for business,” and it largely has. Only now, it’s marketed as cognitive computing for enterprise. Or to be exact, “The platform for cognitive business.”

That’s exactly what Watson has become—a platform.

From sole supercomputer to jack-of-all-trades cloud-based platform

As promised, Watson of 2012 got a major upgrade.

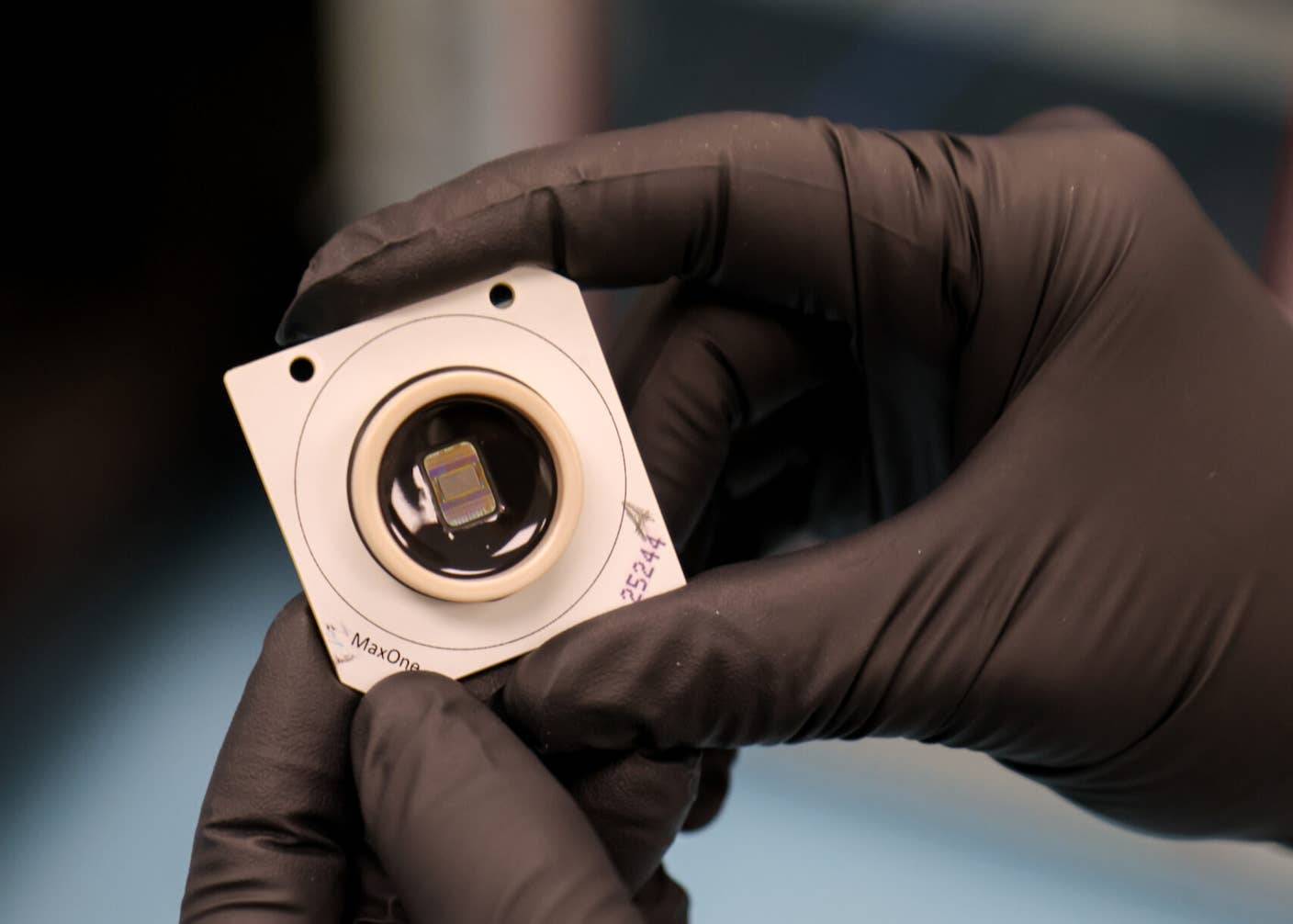

Watson shrank from the size of a large bedroom to that of four pizza boxes and is now accessible via the cloud on tablet and smartphone. The system is 240% more powerful than its predecessor and can process 28 types (or modules) of data, compared to just 5 previously.

In 2013, IBM opened Watson’s API to outside developers and now offers IBM Bluemix, a comprehensive cloud platform for third-party developers to build and run apps on top of Watson’s many computing capabilities.

But one of the biggest moves that made Watson what it is today came in 2014, when IBM invested $1 billion to create the “IBM Watson Group,” a massive division dedicated to all things Watson and housing some 2,000 employees.

This was the tipping point when Watson went from “startup mode” to making cognitive computing mainstream. It’s when Watson started to feel very, well, “IBM.”

Fast-forward to 2016, and Watson has more enterprise services and solutions than I can list here—financial advisor, automated customer service representative, research compiler—you name the service, Watson can probably do it.

As the technology behind the 2012 supercomputer has evolved, so has IBM’s positioning of Watson, and that shift is perhaps best reflected in how Watson is being used in medicine.

Meet Watson Health

Today, Watson efforts within the health space are lumped under a new division called Watson Health. It’s a strategic move, considering many healthcare partnerships have been added to the portfolio following the Cleveland Clinic announcement in 2012.

Since 2012, there’s been no shortage of updates on the prospects of Watson’s partnership with Cleveland Clinic. At the 2014 Cleveland Clinic Medical Innovation Summit, for example, IBM announced oncologists would use Watson to connect genomic and medical data to develop more personalized treatments.

A Business Insider article projected that Watson may eventually allow oncologists to “upload the DNA fingerprint of a patient's tumor, which indicates which genes are mutated…and Watson will sift through thousands of mutations and try to identify which is driving the tumor, and therefore what a drug must target.”

And indeed, the University of Tokyo recently used Watson to correct the diagnosis of a 60-year-old patient's form of leukemia by comparing genetic data to millions of cancer research papers. It's a pretty amazing example, but it may be a while yet before such work is happening broadly across all of medicine.

Even though IBM has doubled down on Watson and had some success, the process of making it practical in the most ambitious sense—like in medicine—is taking a little time.

Last year, Brandon Keim in IEEE Spectrum asked, “What’s in the way?” and outlined a few valid reasons why “Dr. Watson” has yet to fully become what we hoped.

IBM’s Watson has come a long way, but measured against expectations of “instant disruption,” its advances can seem less significant. Keim writes, “Medical AI is about where personal computers were in the 1970s…The application of artificial intelligence to health care will, similarly, take years to mature.”

Complex problems in the US healthcare system, such as data quality, are also hindering Watson. Electronic medical records are often filled with errors, and were initially digitized for the use of hospital administrators, not for the purpose of mining for data on disease treatment.

Finally, teaching Watson is a grueling process, especially in medicine, where Watson’s answers affect human lives.

Giving fast answers to patient quandaries has little in common with mastering Jeopardy. Watson has to learn to think like a good doctor, which means learning how to find the right pieces of data, weigh evidence, and make accurate inferences.

What’s next for Watson? Keeping up with the Joneses

For Watson to continue progressing, it must also keep pace with current advances in the field of AI. The biggest change since 2012 has been the rise of deep learning, an AI technique in which programs train themselves using huge annotated datasets.

Indeed, Watson’s successor in game-playing AI is AlphaGo, Google DeepMind’s deep learning program, which learned the game of Go well enough to beat one of the world’s best players.

Of course, IBM is aware of deep learning and last year told MIT Technology Review that the team is integrating the deep learning approach into Watson. The original system was already a bit of a mashup—combining natural language understanding with statistical analysis of large datasets. Deep learning may round it out further.

“The key thing about Watson is that it’s inherently about taking many different solutions to things and integrating them to reach a decision,” says James Hendler, director of the Rensselaer Polytechnic Institute for Data Exploration and Applications.
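To make Hendler's point a little more concrete, here is a minimal sketch of what "integrating many different solutions" can look like in code. It is not Watson's or DeepQA's actual implementation. The scorer names, weights, and toy numbers below are all assumptions invented for illustration: several independent scorers each rate a candidate answer, and a weighted average turns those ratings into a single decision.

```python
from typing import Callable, Dict, List

# A scorer is any function that rates a candidate answer between 0 and 1.
# All names here are hypothetical and exist only for this illustration.
Scorer = Callable[[str], float]


def combine_scores(
    candidates: List[str],
    scorers: Dict[str, Scorer],
    weights: Dict[str, float],
) -> Dict[str, float]:
    """Return the weighted average of every scorer's rating per candidate."""
    total_weight = sum(weights[name] for name in scorers)
    combined = {}
    for candidate in candidates:
        weighted_sum = sum(
            weights[name] * scorer(candidate) for name, scorer in scorers.items()
        )
        combined[candidate] = weighted_sum / total_weight
    return combined


def best_answer(
    candidates: List[str],
    scorers: Dict[str, Scorer],
    weights: Dict[str, float],
) -> str:
    """Pick the candidate with the highest combined score."""
    combined = combine_scores(candidates, scorers, weights)
    return max(combined, key=combined.get)


# Toy data: two made-up scorers that disagree about two candidate answers.
keyword_scores = {"answer A": 0.7, "answer B": 0.4}
evidence_scores = {"answer A": 0.2, "answer B": 0.9}

scorers = {
    "keyword_match": lambda c: keyword_scores.get(c, 0.0),
    "supporting_evidence": lambda c: evidence_scores.get(c, 0.0),
}
# Assumed weights: supporting evidence counts twice as much as keyword overlap.
weights = {"keyword_match": 1.0, "supporting_evidence": 2.0}

candidates = ["answer A", "answer B"]
print(best_answer(candidates, scorers, weights))
```

In this toy setup the keyword scorer prefers "answer A," but the more heavily weighted evidence scorer prefers "answer B," so the combined decision is "answer B." A real system would learn such weights from data rather than set them by hand.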

With huge budgets dedicated to evolving the next generation of chatbots and virtual assistants and all the biggest technology players involved—including Google and Facebook—it’s only a matter of time until a powerful mainstream natural language system exists for consumers, though what its name will be remains to be seen.

Watson is IBM’s foray into the next round of computers and humans working together in powerful ways. But sometimes we forget to give a nod to the hard work required behind the scenes when we look ahead. We’ll keep an eye on the software as it continues to advance and look forward to seeing it in action more.

Image source: Shutterstock

Alison tells the stories of purpose-driven leaders and is fascinated by various intersections of technology and society. When not keeping a finger on the pulse of all things Singularity University, you'll likely find Alison in the woods sipping coffee and reading philosophy (new book recommendations are welcome).

Related Articles

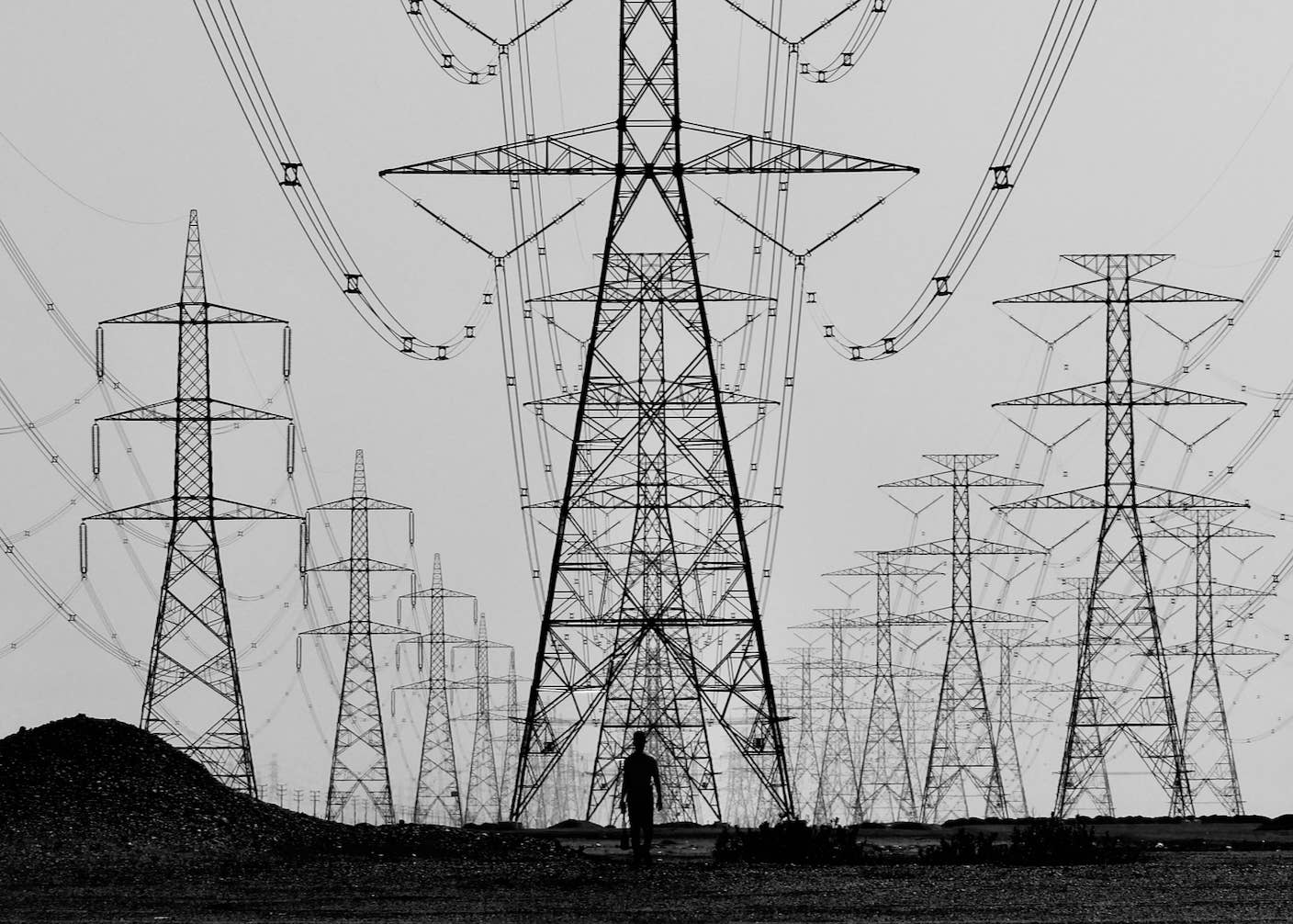

The Mad Scramble to Power AI Is Rewiring the US Grid

Chatbots ‘Optimized to Please’ Make Us Less Likely to Admit When We’re Wrong

These Mini Brains Just Learned to Solve a Classic Engineering Problem

What we’re reading