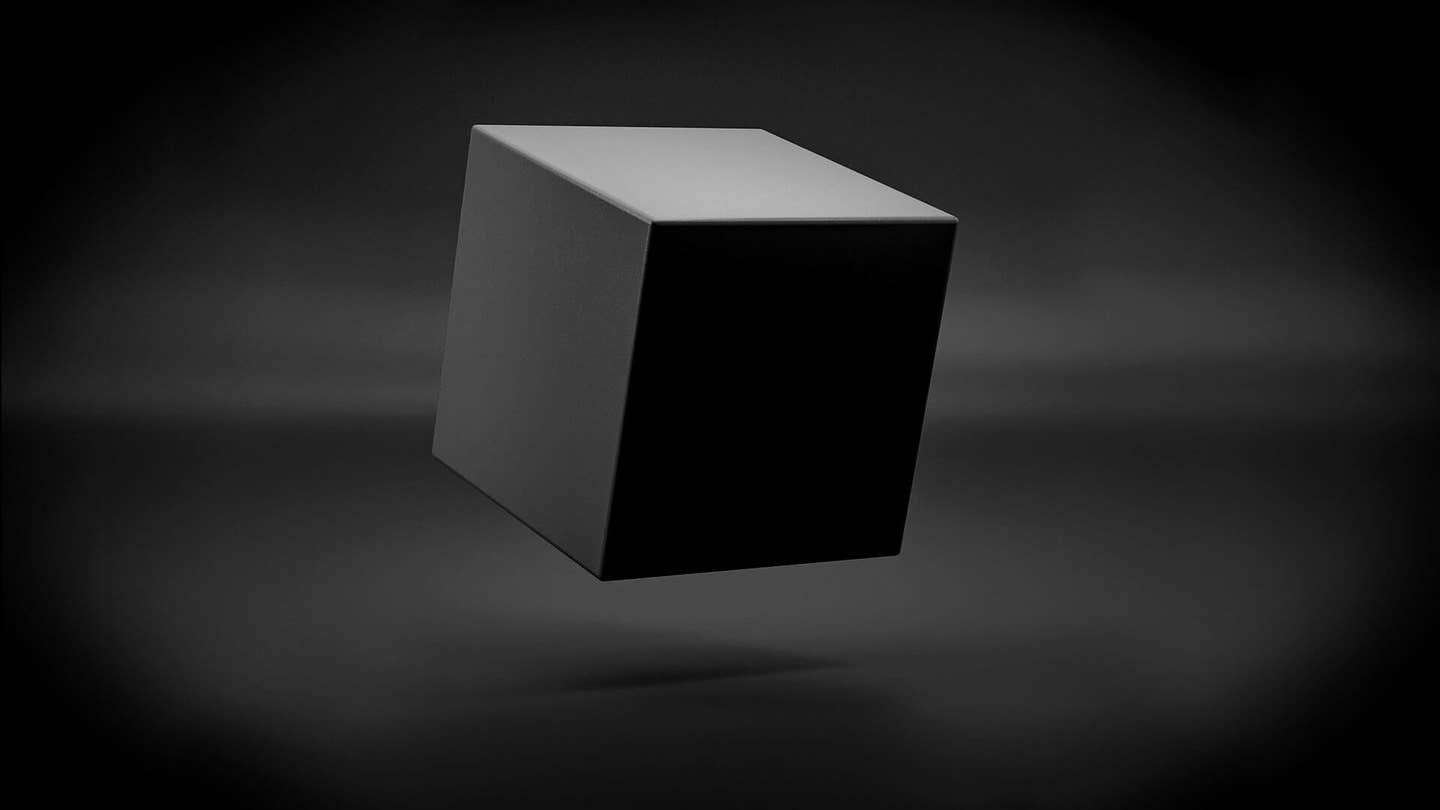

Where Should We Draw the Line Between Rejecting and Embracing Black Box AI?


Deep learning is powering some amazing new capabilities, but we find it hard to scrutinize the workings of these algorithms. Lack of interpretability in AI is a common concern and many are trying to fix it, but is it really always necessary to know what’s going on inside these “black boxes”?

In a recent perspective piece for Science, Elizabeth Holm, a professor of materials science and engineering at Carnegie Mellon University, argued in defense of the black box algorithm. I caught up with her last week to find out more.

Edd Gent: What's your experience with black box algorithms?

Elizabeth Holm: I got a dual PhD in materials science and engineering and scientific computing. I came to academia about six years ago and part of what I wanted to do in making this career change was to refresh and revitalize my computer science side.

I realized that computer science had changed completely. It used to be about algorithms and making codes run fast, but now it’s about data and artificial intelligence. There are the interpretable methods like random forest algorithms, where we can tell how the machine is making its decisions. And then there are the black box methods, like convolutional neural networks.

Once in a while we can find some information about their inner workings, but most of the time we have to accept their answers and kind of probe around the edges to figure out the space in which we can use them and how reliable and accurate they are.

EG: What made you feel like you had to mount a defense of these black box algorithms?

EH: When I started talking with my colleagues, I found that the black box nature of many of these algorithms was a real problem for them. I could understand that because we're scientists, we always want to know why and how.

It got me thinking as a bit of a contrarian, “Are black boxes all bad? Must we reject them?” Surely not, because human thought processes are fairly black box. We often rely on human thought processes that the thinker can't necessarily explain.

It’s looking like we're going to be stuck with these methods for a while, because they're really helpful. They do amazing things. And so there's a very pragmatic realization that these are the best methods we've got to solve some really important problems, and we're not right now seeing alternatives that are interpretable. We're going to have to use them, so we'd better figure out how.

EG: In what situations do you think we should be using black box algorithms?

EH: I came up with three rules. The simplest rule is: when the cost of a bad decision is small and the value of a good decision is high, it’s worth it. The example I gave in the paper is targeted advertising. If you send an ad no one wants, it doesn't cost a lot. If you're the receiver, it doesn't cost a lot to get rid of it.

There are cases where the cost is high, and that's when we choose the black box if it's the best option to do the job. Things get a little trickier here because we have to ask “what are the costs of bad decisions, and do we really have them fully characterized?” We also have to be very careful knowing that our systems may have biases, they may have limitations in where you can apply them, they may be breakable.

But at the same time, there are certainly domains where we're going to test these systems so extensively that we know their performance in virtually every situation. And if their performance is better than the other methods, we need to do it. Self-driving vehicles are a significant example: it’s almost certain they're going to have to use black box methods, and that they're going to end up being better drivers than humans.

The third rule is the more fun one for me as a scientist, and that's the case where the black box really enlightens us as to a new way to look at something. We have trained a black box to recognize the fracture energy of breaking a piece of metal from a picture of the broken surface. It did a really good job, and humans can't do this and we don't know why.

What the computer seems to be seeing is noise. There's a signal in that noise, and finding it is very difficult, but if we do we may find something significant to the fracture process, and that would be an awesome scientific discovery.
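One way to read the three rules Holm describes is as a rough decision checklist. The Python sketch below is illustrative only: the `UseCase` fields, the 0-to-1 scores, the thresholds, and the order in which the rules are checked are all assumptions made for this example, not something taken from her Science piece.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    LOW_STAKES = "rule 1: bad decisions are cheap, good ones are valuable"
    BEST_AVAILABLE = "rule 2: costly errors, but tested and best at the job"
    DISCOVERY = "rule 3: may show a new way to look at the problem"
    PREFER_INTERPRETABLE = "no rule applies: prefer an interpretable method"


@dataclass
class UseCase:
    # All fields are hypothetical; the scores are subjective 0..1 estimates.
    error_cost: float          # how costly a bad decision is
    decision_value: float      # how valuable a good decision is
    extensively_tested: bool   # performance known across the intended domain
    beats_alternatives: bool   # outperforms the other available methods
    may_yield_insight: bool    # could point to something scientifically new


def black_box_verdict(case: UseCase,
                      low_cost: float = 0.2,
                      high_value: float = 0.6) -> Verdict:
    """Apply the three rules in order; thresholds are arbitrary assumptions."""
    # Rule 1: a cheap mistake and a valuable success.
    if case.error_cost <= low_cost and case.decision_value >= high_value:
        return Verdict.LOW_STAKES
    # Rule 2: high stakes, so demand extensive testing and better performance.
    if case.extensively_tested and case.beats_alternatives:
        return Verdict.BEST_AVAILABLE
    # Rule 3: the black box may enlighten us even if we cannot explain it yet.
    if case.may_yield_insight:
        return Verdict.DISCOVERY
    return Verdict.PREFER_INTERPRETABLE


# Made-up scores for the three examples mentioned in the interview.
advertising = UseCase(0.05, 0.7, False, False, False)
self_driving = UseCase(0.95, 0.9, True, True, False)
fracture_energy = UseCase(0.5, 0.5, False, False, True)

for name, case in [("targeted advertising", advertising),
                   ("self-driving vehicles", self_driving),
                   ("fracture energy from images", fracture_energy)]:
    print(f"{name}: {black_box_verdict(case).value}")
```

The second rule is the hardest to encode honestly: the two booleans stand in for the open questions she raises about whether the costs of bad decisions are fully characterized and whether the system is biased, limited, or breakable.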


EG: Do you think there's been too much emphasis on interpretability?

EH: I think the interpretability problem is a fundamental, fascinating computer science grand challenge and there are significant issues where we need to have an interpretable model. But how I would frame it is not that there's too much emphasis on interpretability, but rather that there's too much dismissiveness of uninterpretable models.

I think that some of the current social and political issues surrounding some very bad black box outcomes have convinced people that all machine learning and AI should be interpretable because that will somehow solve those problems.

Asking humans to explain their rationale has not eliminated bias, or stereotyping, or bad decision-making in humans. Relying too much on interpretability perhaps puts the responsibility in the wrong place for getting better results. I can make a better black box without knowing exactly in what way the first one was bad.

EG: Looking further into the future, do you think there will be situations where humans will have to rely on black box algorithms to solve problems we can't get our heads around?

EH: I do think so, and it’s not as much of a stretch as we think it is. For example, humans don’t design the circuit map of computer chips anymore. We haven't for years. It's not a black box algorithm that designs those circuit boards, but we’ve long since given up trying to understand a particular computer chip’s design.

With the billions of circuits in every computer chip, the human mind can’t encompass it, either in scope or just the pure time that it would take to trace every circuit. There are going to be cases where we want a system so complex that only the patience that computers have and their ability to work in very high-dimensional spaces is going to be able to do it.

So we can continue to argue about interpretability, but we need to acknowledge that we're going to need to use black boxes. And this is our opportunity to do our due diligence to understand how to use them responsibly, ethically, and with benefits rather than harm. And that's going to be a social conversation as well as a scientific one.

*Responses have been edited for length and style.

Image Credit: Chingraph / Shutterstock.com
