This Giant AI Chip Is the Size of an iPad and Holds 1.2 Trillion Transistors

People say size doesn’t matter, but when it comes to AI the makers of the largest computer chip ever beg to differ. There are plenty of question marks about the gargantuan processor, but its unconventional design could herald an innovative new era in silicon design.

Computer chips specialized to run deep learning algorithms are a booming area of research as hardware limitations begin to slow progress. Both established players and startups are vying to build the successor to the GPU, the specialized graphics chip that has become the workhorse of the AI industry.

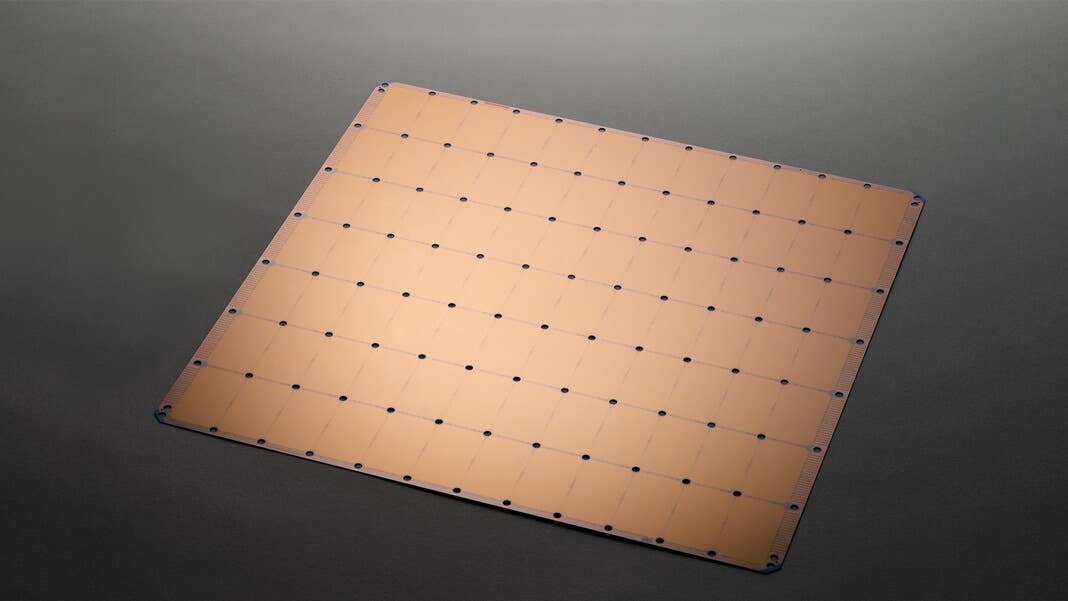

On Monday, Californian startup Cerebras came out of stealth mode to unveil an AI-focused processor that turns conventional wisdom on its head. For decades chip makers have been focused on making their products ever smaller, but the Wafer Scale Engine (WSE) is the size of an iPad and features 1.2 trillion transistors, 400,000 cores, and 18 gigabytes of on-chip memory.

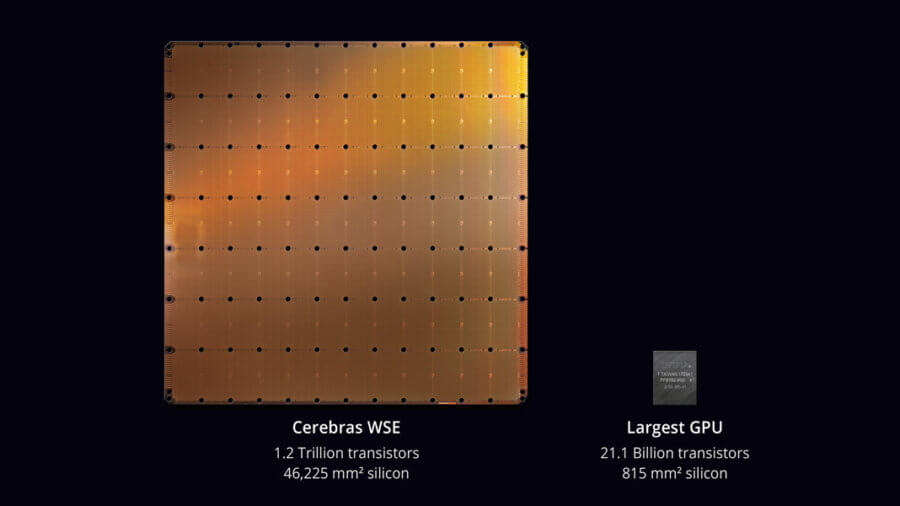

The Cerebras Wafer-Scale Engine (WSE) is the largest chip ever built. It measures 46,225 square millimeters and includes 1.2 trillion transistors. Optimized for artificial intelligence compute, the WSE is shown here for comparison alongside the largest graphics processing unit. Image Credit: Used with permission from Cerebras Systems.

There is a method to the madness, though. Currently, getting enough cores to run really large-scale deep learning applications means connecting banks of GPUs together. But shuffling data between these chips is a major drain on speed and energy efficiency because the wires connecting them are relatively slow.

Building all 400,000 cores into the same chip should get round that bottleneck, but there are reasons it’s not been done before, and Cerebras has had to come up with some clever hacks to get around those obstacles.

Regular computer chips are manufactured using a process called photolithography to etch transistors onto the surface of a wafer of silicon. The wafers are typically several inches across, so multiple chips are built onto each one at once and then split up afterwards. But at about 8.5 inches per side, the WSE uses the entire wafer for a single chip.
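As a rough sanity check on those figures, here is a minimal Python sketch that uses only the numbers quoted above. It assumes the chip is square, that the 18 gigabytes are decimal gigabytes, and that memory is spread evenly across the cores; none of those assumptions are confirmed by Cerebras.

```python
import math

# Figures quoted in the article
AREA_MM2 = 46_225        # chip area in square millimeters
TRANSISTORS = 1.2e12     # 1.2 trillion transistors
CORES = 400_000
MEMORY_BYTES = 18e9      # assumption: "18 gigabytes" means decimal gigabytes

MM_PER_INCH = 25.4

# Assumption: the chip is square, so the side is the square root of the area
side_mm = math.sqrt(AREA_MM2)
side_in = side_mm / MM_PER_INCH

# Average densities, assuming everything is spread evenly over the chip
transistors_per_mm2 = TRANSISTORS / AREA_MM2
memory_per_core_kb = MEMORY_BYTES / CORES / 1e3

print(f"Side length: {side_mm:.0f} mm (about {side_in:.1f} inches)")
print(f"Transistors per square millimeter: {transistors_per_mm2:,.0f}")
print(f"On-chip memory per core: {memory_per_core_kb:.0f} KB")
```

Under those assumptions the area and the "8.5 inches" figure agree with each other, and each core ends up with only tens of kilobytes of memory sitting right next to it.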

The problem is defects. In standard chip-making, manufacturing imperfections will at most force a few chips out of the several hundred on a wafer to be ditched. For Cerebras, a single flaw could mean scrapping the entire wafer. To get around this, the company built in redundant circuits so that even if there are a few defects, the chip can route around them.
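To see why defects matter so much more at wafer scale, here is a back-of-the-envelope sketch using the textbook Poisson yield model, in which the chance of a defect-free chip falls exponentially with its area. The defect density, the conventional die size, the number of spare cores, and the simplification that one defect disables exactly one core are all illustrative assumptions, not figures from Cerebras.

```python
import math

def poisson_cdf(k: int, mean: float) -> float:
    """Probability of at most k defects when defects follow a Poisson law."""
    term = math.exp(-mean)   # P(X = 0)
    total = term
    for i in range(1, k + 1):
        term *= mean / i     # P(X = i) from P(X = i - 1)
        total += term
    return total

# Hypothetical inputs, chosen only for illustration
DEFECTS_PER_CM2 = 0.1        # assumed defect density
SMALL_DIE_CM2 = 8.0          # assumed area of a large conventional chip
WAFER_CHIP_CM2 = 462.25      # 46,225 square millimeters, from the article
SPARE_CORES = 100            # assumed number of redundant cores

mean_small = DEFECTS_PER_CM2 * SMALL_DIE_CM2
mean_wafer = DEFECTS_PER_CM2 * WAFER_CHIP_CM2

# Without redundancy, a chip is only usable if it has zero defects
yield_small = poisson_cdf(0, mean_small)
yield_wafer_no_spares = poisson_cdf(0, mean_wafer)

# With redundancy, the chip survives as long as defects do not exceed spares
yield_wafer_with_spares = poisson_cdf(SPARE_CORES, mean_wafer)

print(f"Conventional die, no redundancy: {yield_small:.1%}")
print(f"Wafer-scale chip, no redundancy: {yield_wafer_no_spares:.1e}")
print(f"Wafer-scale chip, with spares:   {yield_wafer_with_spares:.1%}")
```

With these made-up inputs, a defect-free wafer-scale chip is vanishingly unlikely, while a modest pool of spare cores is enough to make nearly every wafer usable. That is the general logic behind building in redundancy, whatever the real numbers turn out to be.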

The other big issue with a giant chip is the enormous amount of heat the processors can kick off, so the company has had to design a proprietary water-cooling system. That, along with the fact that no one makes connections and packaging for giant chips, means the WSE won’t be sold as a stand-alone component, but as part of a pre-packaged server incorporating the cooling technology.

There are no details on costs or performance so far, but some customers have already been testing prototypes and, according to Cerebras, results have been promising. CEO and co-founder Andrew Feldman told Fortune that early tests show they are reducing training time from months to minutes.

We’ll have to wait until the first systems ship to customers in September to see if those claims stand up. But Feldman told ZDNet that the design of the company’s chip should help spur greater innovation in the way engineers design neural networks. Many cornerstones of this process, such as tackling data in batches rather than as individual data points, are guided more by the hardware limitations of GPUs than by machine learning theory, and he said the WSE will do away with many of those obstacles.

Whether that turns out to be the case or not, the WSE might be the first indication of an innovative new era in silicon design. When Google announced its AI-focused Tensor Processing Unit in 2016, it was a wake-up call for chipmakers that we need some out-of-the-box thinking to square the slowing of Moore’s Law with skyrocketing demand for computing power.

It’s not just tech giants’ AI server farms driving innovation. At the other end of the spectrum, the desire to embed intelligence in everyday objects and mobile devices is pushing demand for AI chips that can run on tiny amounts of power and squeeze into the smallest form factors.

These trends have spawned renewed interest in everything from brain-inspired neuromorphic chips to optical processors, but the WSE also shows that there might be mileage in simply taking a sideways look at some of the other design decisions chipmakers have made in the past rather than just pumping ever more transistors onto a chip.

This gigantic chip might be the first exhibit in a weird and wonderful new menagerie of exotic, AI-inspired silicon.
