The brain is an exceptionally powerful computing machine. Scientists have long tried to recreate its inner workings in mechanical minds.

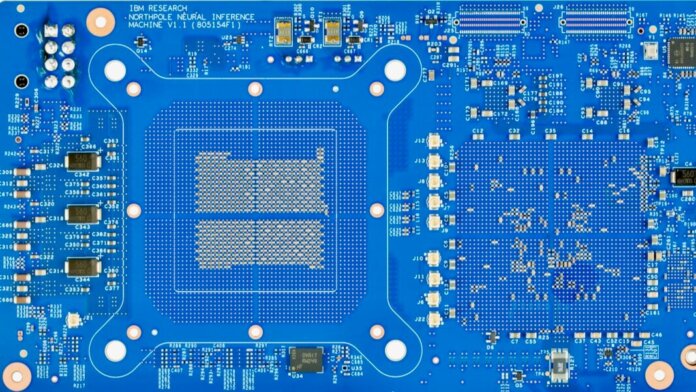

A team from IBM may have cracked the code with NorthPole, a fully digital chip that mimics the brain’s structure and efficiency. When pitted against state-of-the-art graphics processing units (GPUs)—the chips most commonly used to run AI programs—IBM’s brain-like chip triumphed in several standard tests, while using up to 96 percent less energy.

IBM is no stranger to brain-inspired chips. Starting with TrueNorth, the company has spent roughly a decade tapping into the brain’s architecture to better run AI algorithms.

Project to project, the goal has been the same: How can we build faster, more energy-efficient chips that allow smaller devices, like our phones or the computers in self-driving cars, to run AI on the “edge”? Edge computing can monitor and respond to problems in real time without needing to send requests to remote server farms in the cloud. Like switching from dial-up modems to fiber-optic internet, these chips could also speed up large AI models with minimal energy costs.

The problem? The brain is analog. Traditional computer chips, in contrast, use digital processing—0s and 1s. If you’ve ever tried to convert an old VHS tape into a digital file, you’ll know it’s not a straightforward process. So far, most chips that mimic the brain use analog computing. Unfortunately, these systems are noisy and errors can easily slip through.

With NorthPole, IBM went completely digital. Tightly packing 22 billion transistors onto 256 cores, the chip takes its cues from the brain by placing computing and memory modules next to each other. Faced with a task, each core takes on a part of a problem. However, like nerve fibers in the brain, long-range connections link modules, so they can exchange information too.

This sharing is an “innovation,” said Drs. Subramanian Iyer and Vwani Roychowdhury at the University of California, Los Angeles (UCLA), who were not involved in the study.

The chip is especially relevant in light of increasingly costly, power-hungry AI models. Because NorthPole is fully digital, it also dovetails with existing manufacturing processes—the packaging of transistors and wired connections—potentially making it easier to produce at scale.

The chip represents “neural inference at the frontier of energy, space and time,” the authors wrote in their paper, published in Science.

Mind Versus Machine

From DALL-E to ChatGPT, generative AI has taken the world by storm with its shockingly human-like text-based responses and images.

But to study author Dr. Dharmendra S. Modha, generative AI is on an unsustainable path. The software is trained on billions of examples—often scraped from the web—to generate responses. Both creating the algorithms and running them requires massive amounts of computing power, resulting in high costs, processing delays, and a large carbon footprint.

These popular AI models are loosely inspired by the brain’s inner workings. But they don’t mesh well with our current computers. The brain processes and stores memories in the same location. Computers, in contrast, divide memory and processing into separate blocks. This setup shuttles data back and forth for each computation, and traffic can stack up, causing bottlenecks, delays, and wasted energy.
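To make the bottleneck concrete, here is a toy cost model in Python. The energy figures are made-up units chosen purely for illustration, not measurements from the paper. The only point is that when fetching a number costs far more than computing with it, moving data dominates the bill.

```python
# Toy cost model of a workload made of many multiply-accumulate operations.
# All energy numbers below are hypothetical units, chosen for illustration only.
COMPUTE_COST = 1.0       # assumed cost of one multiply-accumulate
FAR_MEMORY_COST = 100.0  # assumed cost of fetching an operand from separate memory
NEAR_MEMORY_COST = 1.0   # assumed cost of fetching from memory beside the compute unit


def total_energy(num_ops: int, fetch_cost: float) -> float:
    """Energy for num_ops operations, each needing one operand fetch."""
    return num_ops * (COMPUTE_COST + fetch_cost)


ops = 1_000_000
separate = total_energy(ops, FAR_MEMORY_COST)
colocated = total_energy(ops, NEAR_MEMORY_COST)

# Fraction of the energy spent on moving data when memory is kept separate.
movement_share = FAR_MEMORY_COST / (COMPUTE_COST + FAR_MEMORY_COST)

print(f"separate memory:   {separate:.0f} units")
print(f"co-located memory: {colocated:.0f} units")
print(f"share spent moving data (separate case): {movement_share:.1%}")
```

Under these assumed costs, nearly all of the energy in the separate-memory case goes to shuttling data rather than computing, which is the problem that placing memory next to compute is meant to ease.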

It’s a “data movement crisis,” wrote the team. We need “dramatically more computationally-efficient methods.”

One idea is to build analog computing chips similar to how the brain functions. Rather than processing data using a system of discrete 0s and 1s—like on-or-off light switches—these chips function more like light dimmers. Because each computing “node” can capture multiple states, this type of computing is faster and more energy efficient.

Unfortunately, analog chips also suffer from errors and noise. As with nudging a dimmer switch, even a slight error in a stored value can alter the output. Although flexible and energy efficient, the chips are difficult to work with when processing large AI models.
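A small simulation shows the difference. This is only a sketch: the noise level, the 0.5 threshold, and both function names are assumptions made for illustration, and it does not model any real analog chip. Each stored value picks up a little random noise. The analog sum carries that noise straight into the result, while the digital version snaps every signal back to 0 or 1 before adding.

```python
import random


def analog_sum(values, noise_std, rng):
    """Add values where each one carries a little Gaussian noise."""
    return sum(v + rng.gauss(0.0, noise_std) for v in values)


def digital_sum(bits, noise_std, rng, threshold=0.5):
    """Snap each noisy signal back to 0 or 1 before adding it."""
    return sum(1 if b + rng.gauss(0.0, noise_std) > threshold else 0 for b in bits)


rng = random.Random(0)  # fixed seed so the run is repeatable
bits = [1, 0, 1, 1, 0, 1, 0, 0] * 100
exact = sum(bits)

analog = analog_sum(bits, noise_std=0.05, rng=rng)
digital = digital_sum(bits, noise_std=0.05, rng=rng)

print(f"exact:   {exact}")
print(f"analog:  {analog:.3f}")
print(f"digital: {digital}")
```

With noise this small relative to the threshold, the digital sum matches the exact answer, while the analog sum drifts by an amount that grows with the number of values added.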

A Match Made in Heaven

What if we combined the flexibility of neurons with the reliability of digital processors?

That’s the driving concept for NorthPole. The result is a stamp-sized chip that can beat the best GPUs in several standard tests.

The team’s first step was to distribute data processing across multiple cores, while keeping memory and computing modules inside each core physically close.

Some earlier brain-inspired designs relied on analog computing with special materials to combine computation and memory in one location. NorthPole instead places standard memory and processing components next to each other.

The rest of NorthPole’s design borrows from the brain’s larger organization.

The chip has a distributed array of cores like the cortex, the outermost layer of the brain responsible for sensing, reasoning, and decision-making. Each part of the cortex processes different types of information, but it also shares computations and broadcasts results throughout the region.

Inspired by these communication channels, the team built two networks on the chip to democratize memory. Like neurons in the cortex, each core can access computations within itself, but also has access to a global memory. This setup removes hierarchy in data processing, allowing all cores to tackle a problem simultaneously while also sharing their results—thereby eliminating a common bottleneck in computation.
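The general idea of splitting a problem across cores that each keep their own data close at hand can be sketched in a few lines of Python. This is not NorthPole's actual design: the `Core` class and `split_rows` helper are hypothetical, and the loop runs the cores one after another, whereas real hardware would run them at the same time.

```python
from dataclasses import dataclass


@dataclass
class Core:
    """A hypothetical core that keeps its slice of the weights in local memory."""
    local_weights: list  # rows of the weight matrix assigned to this core

    def compute(self, inputs):
        # Each dot product uses only weights stored on this core.
        return [sum(w * x for w, x in zip(row, inputs)) for row in self.local_weights]


def split_rows(matrix, num_cores):
    """Deal the rows out to cores in contiguous, near-equal chunks."""
    chunk = -(-len(matrix) // num_cores)  # ceiling division
    return [Core(matrix[i:i + chunk]) for i in range(0, len(matrix), chunk)]


weights = [[1, 2], [3, 4], [5, 6], [7, 8]]
inputs = [10, 1]

cores = split_rows(weights, num_cores=2)

# Every core works on its own part of the problem; the partial
# results are then gathered into the final output.
partials = [core.compute(inputs) for core in cores]
output = [y for part in partials for y in part]
print(output)
```

Because each core only ever touches the weights it already holds, the only data that has to travel between cores is the shared input and the small partial results.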

The team also developed software that cleverly delegates a problem in both space and time to each core—making sure no computing resources go to waste or collide with each other.

The software “exploits the full capabilities of the [chip’s] architecture,” they explained in the paper, while helping integrate “existing applications and workflows” into the chip.

Compared to TrueNorth, IBM’s previous brain-inspired chip, NorthPole can support AI models that are 640 times larger, involving 3,000 times more computations. All that with just four times the number of transistors.

A Digital Brain Processor

The team next pitted NorthPole against several GPU chips in a series of performance tests.

NorthPole was 25 times more energy efficient when challenged with the same problem. The chip also processed data at lightning-fast speeds compared to GPUs on two difficult AI benchmark tests.
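The 25-fold efficiency figure lines up with the "up to 96 percent less energy" number quoted at the top of this article, assuming both describe the energy needed for the same amount of work. A quick check in Python:

```python
efficiency_gain = 25  # "25 times more efficient", as reported in the article

# Doing the same work at 25x the efficiency takes 1/25 of the energy.
energy_fraction = 1 / efficiency_gain
energy_saved = 1 - energy_fraction

print(f"energy used:  {energy_fraction:.0%} of the baseline")
print(f"energy saved: {energy_saved:.0%}")
```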

Based on initial tests, NorthPole is already usable for real-time facial recognition or deciphering language. In theory, its fast response time could also guide self-driving cars in split-second decisions.

Computer chips are at a crossroads. Some experts believe that Moore’s law—which posits that the number of transistors on a chip doubles every two years—is at death’s door. Although still in their infancy, alternative computing structures, such as brain-like hardware and quantum computing, are gaining steam.

But NorthPole shows semiconductor technology still has much to give. Currently, there are 37 million transistors per square millimeter on the chip. But based on projections, the setup could easily expand to two billion, allowing larger algorithms to run on a single chip.

“Architecture trumps Moore’s law,” wrote the team.

They believe innovation in chip design, like NorthPole, could provide near-term solutions in the development of increasingly powerful but resource-hungry AI.

Image Credit: IBM