The next wave of upcoming musicians doesn’t have soul. Or heart. Or even blood. That’s because these cutting-edge instrumentalists are robots. Expressive Machines Musical Instruments (EMMI) is an organization geared towards automated acoustic performance. Formed by University of Virginia PhD students Troy Rogers, Scott Barton, and Steven Kemper in 2007, EMMI has built several robotic drums and a guitar-like apparatus, and has written numerous pieces of music for them to play. They’re even expanding into wind instruments. Some of EMMI’s performances are robot-only, while others use human-generated feedback to direct their sounds. We’ve got plenty of videos showcasing these mechanical talents for you below, so have fun watching them all. Far from simply replicating human performance, EMMI is actively exploring how robots expand the kinds of music we can produce. The music of the future may be shaped not only by new instruments, but by a new species of performers as well.

Kemper, Barton, and Rogers are all music PhD students at the University of Virginia, and as such don’t have a ton of funding for their EMMI project. To raise money to create new instruments, they paired up with experimental musicians EARduo and went on Kickstarter. The following video is their pitch for a Monochord-Aerophone Robotic Instrument Ensemble (MARIE), which includes a robotic re-imagining of the clarinet. The Kickstarter project for MARIE has already closed (it was a success!), but the video gives a great overview of EMMI. (Oh, and you can still donate on the EMMI site.)

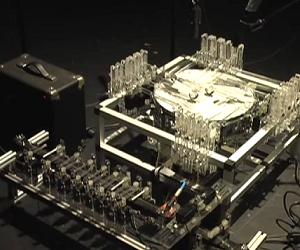

There are three main instruments in the EMMI stable: PAM or Poly-tangent Automatic (multi-)Monochord, a stringed device that can shred like an electric guitar; MADI or Multi-mallet Automatic Drumming Instrument, which is basically a snare drum attached to a million different robotic arms that can strike it; and CADI or Configurable Automatic Drumming Instrument, a group of various percussion instruments with robotic strikers. Here are clips for each:

One of Steven Kemper’s other projects is RAKS, Remote electroAcoustic Kinesthetic Sensing. Using a series of wearable kinetic sensors, a human can move their body to alter the general tone or sounds of a robotic ensemble. In the following video, dancer and musician Aurie Hsu performs a belly dance routine while outfitted with RAKS. See if you can notice how her motions change the performance (hint: it’s a lot easier to see when she’s wearing hand cymbals).

While most of these videos are a few months old (or more), EMMI continues to travel and perform. The robots and their human overlords were recently seen at the Sonic Circuits experimental music festival and are regulars in various smaller venues in the Virginia area.

There are many artists exploring the robot-music interface, and each has their own niche. High-end professional humanoids like the trumpet- and violin-playing Toyota robots are seeking to replicate not only human-like performance, but also human-like appearance. Other musicians are exploring how remote-controlled robots can extend one person’s expression across a host of instruments simultaneously, and still others look to see how automated systems can echo and expand upon their performances. EMMI seems to be about pushing the boundaries of what music we can make when we extend ourselves through robotic instruments. When I see PAM shredding, or MADI pounding a beat it would take humans on several instruments to replicate, I envision a new generation of robotic-acoustic devices whose very nature includes mechanical performers. Just as portable synthesizers changed the realm of what was possible in the ’70s, these robot instruments could open up whole new fields of music in the 21st century.

Assuming, of course, that musicians don’t get distracted by other technologies. We have virtual instruments, virtual singers, and even virtual composers. Not being a musician, I have no idea if robotic acoustics have enough allure to keep us from simply doing everything on a computer.

Even if we eventually drop the robots in favor of virtual virtuosos, projects like EMMI are valuable reminders of how pervasive automation has become in our culture. Robots are everywhere, and they are moving from purely utilitarian roles to more creative ones. For now EMMI and its ilk still rely on humans for direction, but in the not-too-distant future they could easily expand to write and perform music on their own. Give it enough time and humans will be learning from artificial musicians, instead of controlling them. Who knows, maybe they’ll even show us they have soul.

[source: Expressive Machines]