How AI Is Deepening Our Understanding of the Brain


Artificial neural networks are famously inspired by their biological counterparts. Yet compared to human brains, these algorithms are highly simplified, even “cartoonish.”

Can they teach us anything about how the brain works?

For a panel at the Society for Neuroscience annual meeting this month, the answer is yes. Deep learning wasn’t meant to model the brain. In fact, it contains elements that are biologically improbable, if not utterly impossible. But that’s not the point, argues the panel. By studying how deep learning algorithms perform, we can distill high-level theories about the brain’s processes, which can then serve as inspirations to be further tested in the lab.

“It’s not wrong to use simplified models,” said panel speaker Dr. Sara Solla, an expert in computational neuroscience at Northwestern University’s Feinberg School of Medicine. Discovering what to include—or exclude—is an enormously powerful way to find out what’s critical and what’s evolutionary junk for our neural networks.

Dr. Alona Fyshe at the University of Alberta agrees. “AI [algorithms] have already been useful for understanding the brain…even though they are not faithful models of physiology.” The key point, she said, is that they can provide representations—that is, an overall mathematical view of how neurons assemble into circuits to drive cognition, memory, and behavior.

But what, if anything, are deep learning models missing? To panelist Dr. Cian O’Donnell at Ulster University, the answer is a lot. Although we often talk about the brain as a biological computer, it runs on both electrical and chemical information. Incorporating molecular data into artificial neural networks could nudge AI closer to a biological brain, he argued. Similarly, several computational strategies the brain uses aren’t yet used by deep learning.

One thing is clear: when it comes to using AI to inspire neuroscience, “the future is already here,” said Fyshe.

The ‘Little Brain’s’ Role in Language

As an example, Fyshe turned to a recent study about the neuroscience of language.

We often think of the cortex as the central processing unit for deciphering language. But studies are now pointing to a new, surprising hub: the cerebellum. Dubbed the “little brain,” the cerebellum is usually known for its role in motion and balance. When it comes to language processing, however, neuroscientists are largely in the dark about what it does.

Enter GPT-3, a deep learning model with crazy language-writing abilities. Briefly, GPT-3 works by predicting the next word in a sequence. Since its release, the AI and its successors have written extraordinarily human-like poetry, essays, songs, and computer code, generating works that stump judges tasked with telling machine from human.
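GPT-3 itself is a very large transformer network, so the sketch below is not how it works internally. It is a deliberately tiny stand-in, assuming a simple bigram model (word-pair counts), and it shares only the core training idea described above: predict the next word from what came before. The toy corpus and function names are hypothetical.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts: dict[str, Counter], word: str) -> str | None:
    """Return the word most often seen after `word`, or None if `word` never preceded anything."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts: dict[str, Counter], start: str, max_words: int) -> list[str]:
    """Greedily extend `start` one predicted word at a time."""
    words = [start.lower()]
    for _ in range(max_words):
        nxt = predict_next(counts, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return words


corpus = [  # hypothetical toy training text
    "the brain predicts the next word",
    "the model predicts the next token",
    "the brain learns from context",
]
counts = train_bigram(corpus)
print(predict_next(counts, "predicts"))
print(" ".join(generate(counts, "model", 4)))
```

A transformer replaces this one-word lookup with a learned function of the whole preceding passage. That is what lets models like GPT-3 draw on long-range context rather than just the previous word.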

In this highlighted study, led by Dr. Alexander Huth at the University of Texas, volunteers listened to hours of podcasts while getting their brains scanned with fMRI. The team then used these data to train AI models, each based on one of five language features, to predict how the volunteers’ brains fired up. For example, one feature captured how our mouths move when speaking. Another looked at whether a word is a noun or verb; yet another captured the context of the language. In this way, the study covered the main levels of language processing, from low-level acoustics to high-level comprehension.

Amazingly, only the contextual model, the one based on GPT-3, was able to accurately predict activity in the cerebellum when tested on a new dataset. Conclusion? The cerebellum prefers high-level processing, particularly of information related to social or people categories.

“This is pretty strong evidence that the neural network model was required in order for us to understand what the cerebellum is doing,” said Fyshe. “[This] would not have been possible without deep neural networks.”
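The study's actual pipeline is not reproduced here. Below is a minimal sketch of the general approach, assuming a standard voxelwise encoding model fit with ridge regression, a common choice for this kind of analysis. The code fits one model per feature space, mapping stimulus features to voxel responses. It then scores each model by how well its predictions correlate with held-out data.

Some important caveats about the sketch:
- The feature-space names are hypothetical labels on random matrices.
- The responses are simulated so that only the "contextual" features drive them.
- Nothing here is real fMRI data or the study's real features.

```python
import numpy as np


def fit_ridge(X, Y, alpha):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)


def voxel_correlations(Y_true, Y_pred):
    """Pearson correlation between true and predicted responses, one value per voxel (column)."""
    yt = Y_true - Y_true.mean(axis=0)
    yp = Y_pred - Y_pred.mean(axis=0)
    return (yt * yp).sum(axis=0) / np.sqrt((yt**2).sum(axis=0) * (yp**2).sum(axis=0))


rng = np.random.default_rng(0)
n_train, n_test, n_voxels = 400, 100, 50

# Hypothetical feature spaces: random stand-ins, one matrix (time points x features) each.
feature_dims = {"articulatory": 10, "part_of_speech": 12, "contextual": 30}
features = {
    name: rng.standard_normal((n_train + n_test, d)) for name, d in feature_dims.items()
}

# Simulated "brain": voxels respond only to the contextual features, plus noise.
true_W = rng.standard_normal((feature_dims["contextual"], n_voxels))
Y = features["contextual"] @ true_W + 2.0 * rng.standard_normal((n_train + n_test, n_voxels))

scores = {}
for name, X in features.items():
    W = fit_ridge(X[:n_train], Y[:n_train], alpha=10.0)        # fit on training data only
    r = voxel_correlations(Y[n_train:], X[n_train:] @ W)        # evaluate on unseen data
    scores[name] = float(r.mean())

for name, score in scores.items():
    print(f"{name:>15}: mean held-out r = {score:.2f}")
```

Because the simulated voxels depend only on the contextual features, that model should score far higher on held-out data than the others, which hover near zero. The held-out split is the key design choice: a model gets credit only for predicting responses it never saw during fitting.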

Deep Bio-Learning

Despite being inspired by the brain, deep learning is only loosely based on the wiggly bio-hardware inside our heads. AI is also not subject to biological constraints, allowing processing speeds that massively exceed those of human brains.

So how can we bring an alien intelligence closer to its originators?

To O’Donnell, we need to dive back into the non-binary aspects of neural networks. “The brain has multiple… levels of organization,” going from genes and molecules to cells that connect into circuits, which magically lead to cognition and behavior, he said.

It’s not hard to see biological aspects that aren’t in current deep learning models. Take astrocytes, a cell type in the brain that’s increasingly acknowledged for its role in learning. Or “mini-computers” inside a neuron’s twisting branches—dendrites—suggesting a single neuron is far more powerful than previously thought. Or molecules that are gatekeepers of learning, which can package themselves up into fatty bubbles to float from one neuron to the next to change the receiver’s activity.
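One way computational neuroscientists model dendritic "mini-computers" is to treat each branch as a nonlinear subunit. This makes a single neuron behave like a small two-layer network.

The sketch below shows the classic consequence. A neuron with two nonlinear branches can compute XOR, which no single "point neuron" (weighted sum plus one threshold) can compute. The weights are hand-picked, and the rectifying branch nonlinearity is an assumption. The grid search is only an illustration; the impossibility for a point neuron follows from XOR not being linearly separable.

```python
import itertools

import numpy as np

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
XOR = [0, 1, 1, 0]


def point_neuron(x, w, b):
    """Classic point neuron: weighted sum of all inputs, then a single threshold."""
    return int(np.dot(w, x) + b > 0)


def dendritic_neuron(x):
    """Hypothetical neuron with two nonlinear dendritic branches.

    Each branch rectifies its own local mix of inputs; the soma then
    thresholds the sum of the branch outputs.
    """
    x1, x2 = x
    branch_a = max(0.0, x1 - x2)  # excited by input 1, inhibited by input 2
    branch_b = max(0.0, x2 - x1)  # the mirror-image branch
    return int(branch_a + branch_b > 0.5)


def point_neuron_solves_xor_on_grid(grid):
    """Brute-force search over weights and bias on a grid (illustrative, not a proof)."""
    for w1, w2, b in itertools.product(grid, repeat=3):
        if [point_neuron(x, (w1, w2), b) for x in INPUTS] == XOR:
            return True
    return False


print("dendritic neuron outputs:", [dendritic_neuron(x) for x in INPUTS])
print("point neuron found on grid:", point_neuron_solves_xor_on_grid(np.linspace(-2, 2, 21)))
```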

The key question is, which of these biological details matter?

As O’Donnell explains, three aspects could move deep learning toward greater biological plausibility: adding in biological details that underlie computation, learning, and physical constraints.

One example? Biochemical workers inside a neuron, such as those that actively reshape the structure of a synapse: the physical bump that connects adjacent neurons and that computes data and stores memories at the same time. Within a single neuron, molecules can react to a data input, floating around the dendrites to trigger biochemical computations on a much slower timescale than electrical signals. It’s a sort of “thinking fast and slow,” but at the level of a single neuron.
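The "fast and slow" idea can be sketched with two leaky integrators driven by the same brief input. One is a fast variable standing in for electrical activity; the other is a slow variable standing in for a biochemical signal. The time constants (10 ms and 2 s) are illustrative assumptions, not measured values. Real intracellular signaling also involves diffusion and chemical reactions that this toy omits.

```python
import numpy as np


def simulate(tau_fast=10.0, tau_slow=2000.0, dt=1.0, t_max=5000.0):
    """Euler-integrate two leaky variables driven by the same brief input pulse.

    Both follow dx/dt = (input - x) / tau; only their time constants (in ms) differ.
    v stands in for fast electrical activity, c for a slow biochemical signal.
    """
    n_steps = int(t_max / dt)
    t = np.arange(n_steps) * dt
    stim = ((t >= 100) & (t < 200)).astype(float)  # a 100 ms input pulse
    v = np.zeros(n_steps)
    c = np.zeros(n_steps)
    for i in range(1, n_steps):
        v[i] = v[i - 1] + dt * (stim[i - 1] - v[i - 1]) / tau_fast
        c[i] = c[i - 1] + dt * (stim[i - 1] - c[i - 1]) / tau_slow
    return t, v, c


t, v, c = simulate()
i = np.searchsorted(t, 1000.0)  # index of t = 1000 ms, i.e. 800 ms after the pulse ends
print("fraction of peak left in fast signal at 1 s:", v[i] / v.max())
print("fraction of peak left in slow signal at 1 s:", c[i] / c.max())
```

Long after the fast variable has returned to baseline, the slow one still carries a trace of the input. This is a crude form of short-term memory inside a single model neuron.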

Similarly, the brain learns in a dramatically different manner from deep learning algorithms. Deep neural networks typically excel at one job, whereas the brain flexibly handles many, all the time. Deep learning has also traditionally relied on supervised learning, which requires many training examples labeled with the correct answer. The brain, by contrast, mainly learns without such labels, often guided by rewards.
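The difference between supervised and reward-based learning can be made concrete with a toy linear task. Two learners try to recover the same hypothetical weight vector `w_true`:
- The first uses the delta rule. On every trial it is told the full, signed error.
- The second uses weight perturbation, a simple reward-based rule. It only ever sees one number: how much better or worse a random tweak of its weights did.

The task and both rules are illustrative assumptions, not claims about how the brain actually learns. The sketch does not cover unsupervised learning.

```python
import numpy as np

rng = np.random.default_rng(1)
DIM = 5
w_true = rng.standard_normal(DIM)  # hypothetical mapping the learners must discover


def sample():
    """One trial: a random input and the correct output for it."""
    x = rng.standard_normal(DIM)
    return x, w_true @ x


def supervised(steps=2000, lr=0.05):
    """Delta rule: the learner receives the correct answer, hence the full signed error."""
    w = np.zeros(DIM)
    for _ in range(steps):
        x, target = sample()
        error = target - w @ x
        w += lr * error * x
    return w


def reward_based(steps=5000, lr=0.005, sigma=0.01):
    """Weight perturbation: the learner only receives a scalar reward per attempt.

    It compares the reward earned by a randomly jittered copy of its weights with
    the reward earned by its current weights, then steps along the jitter direction
    in proportion to the change in reward (away from it if reward dropped).
    """
    w = np.zeros(DIM)
    for _ in range(steps):
        x, target = sample()
        noise = sigma * rng.standard_normal(DIM)
        reward_current = -(target - w @ x) ** 2
        reward_jittered = -(target - (w + noise) @ x) ** 2
        w += lr * (reward_jittered - reward_current) * noise / sigma**2
    return w


w_sup = supervised()
w_rew = reward_based()
print("supervised error:  ", np.linalg.norm(w_sup - w_true))
print("reward-based error:", np.linalg.norm(w_rew - w_true))
```

Both learners end up near `w_true`. A scalar reward, however, carries far less information per trial than a full error signal. The reward-based learner here therefore uses a smaller learning rate and more trials to stay stable.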

Physical constraints in the brain could also contribute to its efficiency. Neurons have sparse activity, meaning that they’re normally silent (in sleepy mode) and only fire up when needed, thus conserving energy. Their signaling is also quite noisy, and the brain relies on a built-in redundancy trick to cope with our messy world.
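Both constraints can be illustrated in a few lines.
- **Sparsity:** the sketch uses k-winners-take-all, a common modeling shortcut rather than a biological mechanism. It keeps only the most strongly driven units active and silences the rest.
- **Redundancy:** averaging several independent noisy units that encode the same signal shrinks the error. The shrinkage is roughly one over the square root of the number of units.

Independent Gaussian noise is an assumption, and energy costs are not modeled.

```python
import numpy as np

rng = np.random.default_rng(2)


def k_winners_take_all(activity, k):
    """Keep the k most strongly driven units and silence all others."""
    out = np.zeros_like(activity)
    winners = np.argsort(activity)[-k:]
    out[winners] = activity[winners]
    return out


def redundant_readout_error(signal, n_copies, noise_sd, trials=10_000):
    """RMS error when averaging n_copies independent noisy units that encode the same signal."""
    responses = signal + noise_sd * rng.standard_normal((trials, n_copies))
    estimate = responses.mean(axis=1)
    return float(np.sqrt(np.mean((estimate - signal) ** 2)))


drive = rng.standard_normal(1000)  # hypothetical input drive to 1,000 units
sparse = k_winners_take_all(drive, k=20)
print("fraction of units active:", np.count_nonzero(sparse) / sparse.size)

for n in (1, 4, 16, 64):
    err = redundant_readout_error(1.0, n, noise_sd=1.0)
    print(f"{n:>2} redundant units -> RMS error {err:.3f}")
```

With 1, 4, 16, and 64 redundant units, the printed errors should fall roughly to 1, 1/2, 1/4, and 1/8 of the single-unit noise level.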

Finally, the brain minimizes wasted space: its input and output cables are packed like a tangled bowl of spaghetti, yet their 3D position in the brain can influence their computation. Neurons near each other can share a bath of chemical modulators, even when they aren’t directly connected. This local but diffuse regulation is largely missing from deep learning models.
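The idea of a shared chemical bath can be sketched as follows:
- Each neuron gets a position in 3D space.
- A modulator released at one site spreads outward.
- Every nearby neuron's gain scales with the local concentration, with no synapses involved.

The Gaussian falloff, length scale, positions, and gain rule are all hypothetical choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(3)
n_neurons = 200
positions = rng.uniform(0.0, 100.0, size=(n_neurons, 3))  # hypothetical 3D positions (micrometers)
release_site = np.array([50.0, 50.0, 50.0])               # hypothetical modulator source
length_scale = 15.0                                        # assumed spread of the modulator

# Concentration falls off with distance from the release site (Gaussian profile assumed).
dist = np.linalg.norm(positions - release_site, axis=1)
concentration = np.exp(-dist**2 / (2 * length_scale**2))

# No connections are involved: each neuron's gain simply scales with local concentration.
gain = 1.0 + concentration


def respond(inputs):
    """Rectified response of every neuron, with gain set by the shared chemical bath."""
    return np.maximum(0.0, gain * inputs)


responses = respond(np.ones(n_neurons))  # identical drive to every neuron
near, far = dist < 30.0, dist > 60.0
print("mean response near the release site:", responses[near].mean())
print("mean response far from the release site:", responses[far].mean())
```

Neurons receiving identical input respond differently purely because of where they sit. A standard deep learning layer has no equivalent, since its units interact only through explicit weights.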

These may seem like nitty-gritty details, but they could steer the brain’s computational strategies down paths different from those of deep learning.

Bottom line? “I do think the link between deep learning and brains is worth exploring, but there are lots of known and unknown biological mechanisms…that aren’t typically incorporated into deep neural networks,” said O’Donnell.

Machine-Mind Meld

Hesitations aside, it’s clear that AI and neuroscience are converging. Aspects of neuroscience conventionally not considered in deep learning—say, motivation or attention—are now increasingly popular among the deep learning crowd.

For Solla, the critical point is to keep deep learning models close, but not too close, to an actual brain. “If you have a model that’s as detailed as the system itself…you already have something to poke,” she said. “A faithful model might not be useful.”

All three panelists agreed that the next crux is figuring out the sweet spot between abstraction and neurobiological accuracy so that deep learning can form synergies with neuroscience.

One open question is which biological details to include. Although it’s possible to systematically test ideas from neuroscience, for Solla there’s no silver bullet. “It’ll be problem-dependent,” she said.

O’Donnell has a different perspective. “With deep learning networks you can train them to do well on tasks” that standard brain-inspired models couldn’t previously solve. By comparing and uniting the two, we could potentially get the best of both worlds: an algorithm that performs well and is also biologically accurate, he said.

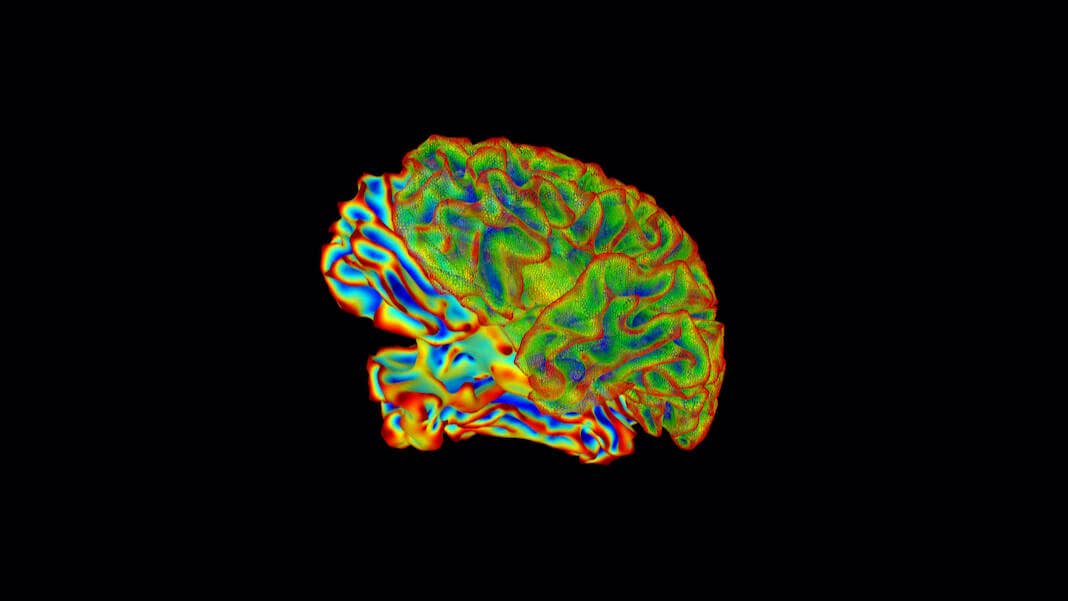

Image Credit: National Institute of Mental Health, National Institutes of Health

Dr. Shelly Xuelai Fan is a neuroscientist-turned-science-writer. She's fascinated with research about the brain, AI, longevity, biotech, and especially their intersection. As a digital nomad, she enjoys exploring new cultures, local foods, and the great outdoors.
