AI-Powered Brain Implant Smashes Speed Record for Turning Thoughts Into Text

We speak at a rate of roughly 160 words every minute. That speed is incredibly difficult to achieve for speech brain implants.

Decades in the making, speech implants use tiny electrode arrays inserted into the brain to measure neural activity, with the goal of transforming thoughts into text or sound. They’re invaluable for people who lose their ability to speak due to paralysis, disease, or other injuries. But they’re also incredibly slow, slashing word count per minute nearly ten-fold. Like a slow-loading web page or audio file, the delay can get frustrating for everyday conversations.

A team led by Drs. Krishna Shenoy and Jaimie Henderson at Stanford University is closing that speed gap.

In a study published on the preprint server bioRxiv, the team used brain implants to help a 67-year-old woman regain her ability to communicate with the outside world at a record-breaking speed. Known as “T12,” the woman gradually lost her speech to amyotrophic lateral sclerosis (ALS), or Lou Gehrig’s disease, which progressively robs the brain of its ability to control muscles in the body. T12 could still vocalize sounds when trying to speak, but the words came out unintelligible.

With her implant, T12’s attempts at speech are now decoded in real time as text on a screen and spoken aloud with a computerized voice, including phrases like “it’s just tough,” or “I enjoy them coming.” The words came fast and furious at 62 per minute, over three times the speed of previous records.

It’s not just a need for speed. The study also tapped into the largest vocabulary library used for speech decoding using an implant—at roughly 125,000 words—in a first demonstration on that scale.

To be clear, although it was a “big breakthrough” and reached “impressive new performance benchmarks” according to experts, the study hasn’t yet been peer-reviewed and the results are limited to the one participant.

That said, the underlying technology isn’t limited to ALS. The boost in speech recognition stems from a marriage between RNNs—recurrent neural networks, a machine learning algorithm previously effective at decoding neural signals—and language models. When further tested, the setup could pave the way to enable people with severe paralysis, stroke, or locked-in syndrome to casually chat with their loved ones using just their thoughts.

We’re beginning to “approach the speed of natural conversation,” the authors said.

Loss for Words

The team is no stranger to giving people back their powers of speech.

As part of BrainGate, a pioneering global collaboration on brain implants, the team envisioned, and then realized, the ability to restore communication using neural signals from the brain.

In 2021, they engineered a brain-computer interface (BCI) that helped a person with spinal cord injury and paralysis type with his mind. With a 96-microelectrode array inserted into the motor areas of the patient’s brain, the team was able to decode brain signals for different letters as he imagined the motions for writing each character, achieving a sort of “mindtexting” with over 94 percent accuracy.

The problem? The speed was roughly 90 characters per minute at most. While a large improvement over previous setups, it was still painfully slow for daily use.
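
To put that figure next to the words-per-minute numbers quoted elsewhere, here is a rough back-of-the-envelope conversion. It assumes five characters per word, a common typing-speed convention rather than a number from the study, and the function name is made up for illustration.

```python
# Assumption: 5 characters per word (typing-speed convention, not from the study).
CHARS_PER_WORD = 5


def chars_to_words_per_minute(chars_per_minute: float) -> float:
    """Convert a characters-per-minute rate to an approximate words-per-minute rate."""
    return chars_per_minute / CHARS_PER_WORD


handwriting_wpm = chars_to_words_per_minute(90)  # handwriting BCI, at most
natural_speech_wpm = 160                         # natural speech rate cited above

print(f"Handwriting BCI: about {handwriting_wpm:.0f} words per minute")
print(f"Natural speech is about {natural_speech_wpm / handwriting_wpm:.1f}x faster")
```

Under that assumption, 90 characters per minute works out to about 18 words per minute, roughly a ninth of natural speaking speed.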

So why not tap directly into the speech centers of the brain?

Regardless of language, decoding speech is a nightmare. Small and often subconscious movements of the tongue and surrounding muscles can trigger vastly different clusters of sounds—also known as phonemes. Trying to link the brain activity of every single twitch of a facial muscle or flicker of the tongue to a sound is a herculean task.

Hacking Speech

The new study, a part of the BrainGate2 Neural Interface System trial, used a clever workaround.

The team first placed four strategically located electrode microarrays into the outer layer of T12’s brain. Two were inserted into areas that control the facial muscles around the mouth. The other two tapped straight into the brain’s “language center,” which is called Broca’s area.

In theory, the placement was a genius two-in-one: it captured both what the person wanted to say, and the actual execution of speech through muscle movements.

But it was also a risky proposition: we don’t yet know whether speech is limited to just a small brain area that controls muscles around the mouth and face, or if language is encoded at a more global scale inside the brain.

Enter RNNs. A type of deep learning, the algorithm has previously translated neural signals from the motor areas of the brain into text. In a first test, the team found that it easily separated different types of facial movements for speech—say, furrowing the brows, puckering the lips, or flicking the tongue—based on neural signals alone with over 92 percent accuracy.

The RNN was then taught to suggest phonemes in real time, such as “huh,” “ah,” and “tze.” Phonemes help distinguish one word from another; in essence, they’re the basic element of speech.

The training took work: every day, T12 attempted to speak between 260 and 480 sentences at her own pace to teach the algorithm the particular neural activity underlying her speech patterns. Overall, the RNN was trained on nearly 11,000 sentences.

With a decoder for her neural activity in hand, the team linked the RNN with two language models. One had an especially large vocabulary at 125,000 words. The other was a smaller library with 50 words that’s used for simple sentences in everyday life.
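
For a rough sense of how a phoneme decoder and a language model can work together, here is a toy sketch. It is not the team's method: the phoneme probabilities, the three-word lexicon, and the word frequencies are all invented for illustration, and a real system would search over whole sentences rather than score single words.

```python
import math

# Hypothetical decoder output: one probability table per phoneme slot.
phoneme_probs = [
    {"K": 0.6, "G": 0.4},
    {"AE": 0.9, "AH": 0.1},
    {"T": 0.45, "P": 0.55},
]

# Hypothetical lexicon (word -> phonemes) and word frequencies (the "language model").
lexicon = {
    "cat": ["K", "AE", "T"],
    "cap": ["K", "AE", "P"],
    "gap": ["G", "AE", "P"],
}
word_prior = {"cat": 0.7, "cap": 0.2, "gap": 0.1}


def score(word: str, lm_weight: float = 1.0) -> float:
    """Log-probability of the word's phonemes plus a weighted log word frequency."""
    phones = lexicon[word]
    if len(phones) != len(phoneme_probs):
        return float("-inf")
    neural = sum(
        math.log(step.get(phone, 1e-9))  # tiny floor for phonemes the decoder never proposed
        for step, phone in zip(phoneme_probs, phones)
    )
    return neural + lm_weight * math.log(word_prior[word])


best_without_lm = max(lexicon, key=lambda w: score(w, lm_weight=0.0))
best_with_lm = max(lexicon, key=score)

print("Neural signal alone:", best_without_lm)
print("With language model:", best_with_lm)
```

In this made-up case the neural evidence slightly favors “cap,” but the language model knows “cat” is more common and tips the decision, which is the kind of correction a language model can contribute.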

After five days of attempted speaking, both language models could decode T12’s words. The system had errors: around 10 percent for the small library and nearly 24 percent for the larger one. Yet when asked to repeat sentence prompts on a screen, the system readily translated her neural activity into sentences three times faster than previous models.
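
Error figures like these are commonly reported as a word error rate: the number of word substitutions, insertions, and deletions needed to turn the decoded sentence into the intended one, divided by the length of the intended sentence. The sketch below assumes that definition; the article itself only says "errors," and the decoded sentence in the example is hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance between the two sentences, divided by reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference sentence must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,                      # reference word missing from the output
                curr[j - 1] + 1,                  # extra word in the output
                prev[j - 1] + substitution_cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


intended = "i enjoy them coming"
decoded = "i enjoy then coming"  # hypothetical decoder output with one wrong word
print(f"Word error rate: {word_error_rate(intended, decoded):.0%}")
```

One wrong word in a four-word sentence gives a 25 percent error rate, close to what the article reports for the large vocabulary.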

The implant worked regardless of whether she attempted to speak or just mouthed the sentences silently (she preferred the latter, as it required less energy).

Analyzing T12’s neural signals, the team found that certain regions of the brain retained neural signaling patterns to encode for vowels and other phonemes. In other words, even after years of speech paralysis, the brain still maintains a “detailed articulatory code”—that is, a dictionary of phonemes embedded inside neural signals—that can be decoded using brain implants.

Speak Your Mind

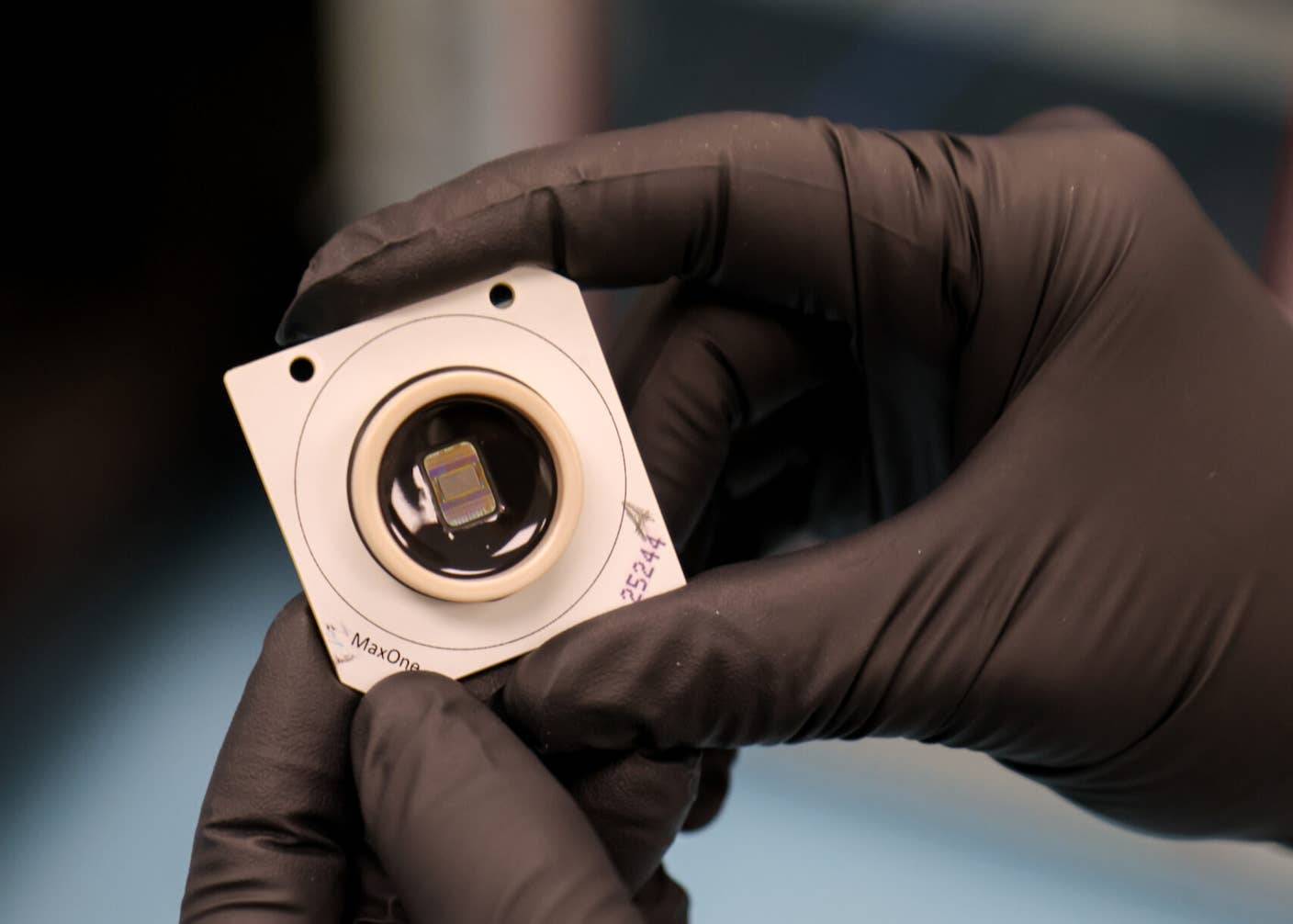

The study builds upon many others that use a brain implant to restore speech, often decades after severe injuries or slowly spreading paralysis from neurodegenerative disorders. The hardware is well known: the Blackrock microelectrode array, consisting of 64 channels to listen in on the brain’s electrical signals.

What’s different is how it operates; that is, how the software transforms noisy neural chatter into cohesive meanings or intentions. Previous models mostly relied on decoding data directly obtained from neural recordings from the brain.

Here, the team tapped into a new resource: language models, or AI algorithms similar to the autocomplete function now widely available for Gmail or texting. The technological tag-team is especially promising with the rise of GPT-3 and other emerging large language models. Excellent at generating speech patterns from simple prompts, the tech—when combined with the patient’s own neural signals—could potentially “autocomplete” their thoughts without the need for hours of training.

The prospect, while alluring, comes with a side of caution. GPT-3 and similar AI models can generate convincing speech on their own based on previous training data. For a person with paralysis who’s unable to speak, we would need guardrails as the AI generates what the person is trying to say.

The authors agree that, for now, their work is a proof of concept. While promising, it’s “not yet a complete, clinically viable system” for decoding speech. For one, they said, the decoder needs to be trained in less time and made more flexible so it can adapt to ever-changing brain activity. For another, the error rate of roughly 24 percent is far too high for everyday use, although increasing the number of implant channels could boost accuracy.

But for now, it moves us closer to the ultimate goal of “restoring rapid communications to people with paralysis who can no longer speak,” the authors said.

Image Credit: Miguel Á. Padriñán from Pixabay

Dr. Shelly Xuelai Fan is a neuroscientist-turned-science-writer. She's fascinated with research about the brain, AI, longevity, biotech, and especially their intersection. As a digital nomad, she enjoys exploring new cultures, local foods, and the great outdoors.
