ChatGPT, DALL-E, Stable Diffusion, and other generative AIs have taken the world by storm. They create fabulous poetry and images. They’re seeping into every nook of our world, from marketing to legal briefs to drug discovery. They seem like the poster child for a man-machine mind meld success story.

But under the hood, things are looking less peachy. These systems are massive energy hogs. They require data centers that spit out thousands of tons of carbon emissions, further stressing an already volatile climate, and they suck up billions of dollars. As the neural networks become more sophisticated and more widely used, their energy consumption is likely to skyrocket even further.

Plenty of ink has been spilled on generative AI’s carbon footprint. Its energy demand could be its downfall, hindering development as the technology grows. Generative AI is “expected to stall soon if it continues to rely on standard computing hardware,” said Dr. Hechen Wang at Intel Labs.

It’s high time we build sustainable AI.

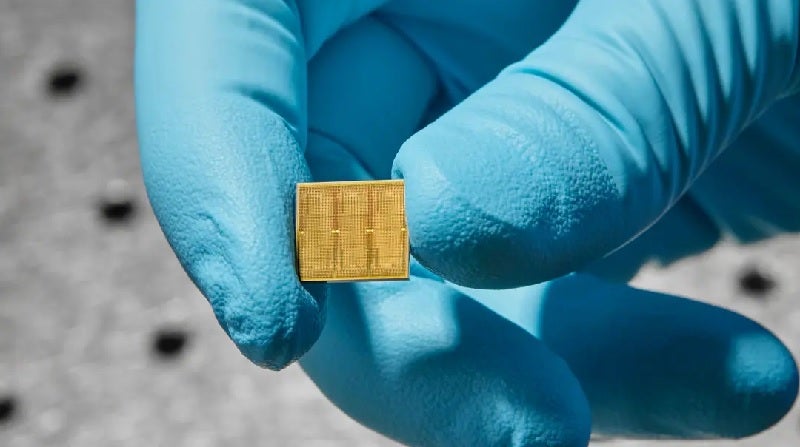

This week, a study from IBM took a practical step in that direction. The team created a 14-nanometer analog chip packed with 35 million memory units. Unlike current chips, it performs computation directly within those units, eliminating the need to shuttle data back and forth and, in turn, saving energy.

Shuttling data can push energy consumption to anywhere from 3 to 10,000 times what the actual computation requires, said Wang.

The chip was highly efficient when challenged with two speech recognition tasks. One, Google Speech Commands, is small but practical; here, speed is key. The other, Librispeech, is a mammoth dataset used to train speech-to-text transcription systems, and it taxes the chip’s ability to process massive amounts of data.

When pitted against conventional computers, the chip was just as accurate but finished the job faster and with far less energy, using less than a tenth of the energy normally required for some tasks.

“These are, to our knowledge, the first demonstrations of commercially relevant accuracy levels on a commercially relevant model…with efficiency and massive parallelism” for an analog chip, the team said.

Brainy Bytes

This is hardly the first analog chip. However, it pushes the idea of neuromorphic computing into the realm of practicality—a chip that could one day power your phone, smart home, and other devices with an efficiency near that of the brain.

Um, what? Let’s back up.

Current computers are built on the Von Neumann architecture. Think of it as a house with multiple rooms. One, the central processing unit (CPU), analyzes data. Another, the memory, stores it.

For each calculation, the computer needs to shuttle data back and forth between those two rooms, which costs time and energy and drags down efficiency.
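A toy count makes the shuttling problem concrete. The sketch below is an illustration, not a measurement from the study: it tallies how many values cross between the two “rooms” to compute one matrix-vector product y = Wx, the workhorse of neural networks. It assumes every weight is fetched once per product; the function names and the 1,024-by-1,024 layer size are hypothetical.

```python
def von_neumann_transfers(rows: int, cols: int) -> int:
    """Toy count of values moved between memory and processor for y = W @ x.

    Assumption (illustrative only): each weight and each input is fetched
    once, and each output is written back once.
    """
    weights = rows * cols
    return weights + cols + rows


def in_memory_transfers(rows: int, cols: int) -> int:
    """Same product when the weights never leave the memory that stores them.

    Only the inputs go in and the outputs come out.
    """
    return cols + rows


if __name__ == "__main__":
    rows, cols = 1024, 1024  # hypothetical layer size
    vn = von_neumann_transfers(rows, cols)
    im = in_memory_transfers(rows, cols)
    print(f"von Neumann: {vn:,} transfers")
    print(f"in-memory:   {im:,} transfers")
    print(f"ratio:       {vn / im:.0f}x")
```

The weights dominate the first count, which is why keeping them in place pays off. Real energy costs also depend on wire distances, memory types, and caching, all of which this toy ignores.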

The brain, in contrast, combines computation and memory into a studio apartment. Its mushroom-like junctions, called synapses, both form neural networks and store memories at the same location. Synapses are highly flexible, adjusting how strongly they connect with other neurons based on stored memories and new learning. The strength of each connection is called its “weight.” Our brains quickly adapt to an ever-changing environment by adjusting these synaptic weights.

IBM has been at the forefront of designing analog chips that mimic brain computation. A breakthrough came in 2016, when the company introduced a chip based on a fascinating material usually found in rewritable CDs. When zapped with electricity, the material changes its physical state, shape-shifting between a disordered, glass-like form and an ordered, crystal-like structure, akin to a digital 0 and 1.

Here’s the key: the material can also exist in a hybrid state. In other words, similar to a biological synapse, the artificial one can encode a myriad of different weights, not just binary ones, allowing it to accumulate multiple calculations without having to move a single bit of data.
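A rough sketch of why intermediate states matter: a binary cell can hold only one of two values, while a cell with many distinguishable levels can approximate a weight by itself. The evenly spaced levels, the [-1, 1] weight range, and the `quantize` helper are illustrative assumptions; real phase-change devices are noisy and drift over time, which this toy ignores.

```python
def quantize(weight: float, levels: int, w_min: float = -1.0, w_max: float = 1.0) -> float:
    """Snap a weight to the nearest of `levels` evenly spaced values in [w_min, w_max].

    Hypothetical model of a memory cell: levels=2 behaves like a binary 0/1 cell,
    larger values mimic a device with many intermediate states.
    """
    if levels < 2:
        raise ValueError("need at least two levels")
    # Clip to the representable range, like a device limited to its min/max conductance.
    w = min(max(weight, w_min), w_max)
    step = (w_max - w_min) / (levels - 1)
    index = round((w - w_min) / step)
    return w_min + index * step


if __name__ == "__main__":
    w = 0.3
    for levels in (2, 4, 16, 64):
        q = quantize(w, levels)
        print(f"{levels:>3} levels -> {q:+.4f} (error {abs(q - w):.4f})")
```

More levels shrink the rounding error, which is the advantage a hybrid state offers over a strict 0-or-1 cell.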

Jekyll and Hyde

The new study built on previous work by also using phase-change materials. The basic components are “memory tiles,” each jam-packed with thousands of phase-change memory devices arranged in a grid. The tiles readily communicate with each other.

Each tile is controlled by a programmable local controller, allowing the team to tweak the component, akin to a neuron, with precision. The chip also stores hundreds of commands in sequence, creating a black box of sorts that lets the team dig back in and analyze its performance.
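The tile idea can be sketched in a few lines: a weight matrix too big for one tile is cut into blocks, each block computes its share of the product locally, and the partial results are passed along and summed. The tile dimensions and the `tiled_matvec` name are hypothetical; the actual chip’s routing and controllers are far more involved.

```python
from typing import List

Matrix = List[List[float]]


def tiled_matvec(weights: Matrix, x: List[float], tile_rows: int, tile_cols: int) -> List[float]:
    """Compute y = W @ x by splitting W into tile_rows-by-tile_cols blocks (hypothetical tiles)."""
    n_rows, n_cols = len(weights), len(x)
    y = [0.0] * n_rows
    for r0 in range(0, n_rows, tile_rows):
        for c0 in range(0, n_cols, tile_cols):
            # Each (r0, c0) block stands in for one memory tile:
            # it only touches its own slice of the weights and of the input.
            for r in range(r0, min(r0 + tile_rows, n_rows)):
                partial = 0.0
                for c in range(c0, min(c0 + tile_cols, n_cols)):
                    partial += weights[r][c] * x[c]
                # Partial sums from tiles covering the same rows are accumulated,
                # standing in for tiles passing results to one another.
                y[r] += partial
    return y


if __name__ == "__main__":
    W = [[1.0, 2.0, 3.0],
         [4.0, 5.0, 6.0]]
    print(tiled_matvec(W, [1.0, 1.0, 1.0], tile_rows=1, tile_cols=2))
```

Splitting changes only where each multiplication happens, not the answer, so the tiled result matches an ordinary matrix-vector product.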

Overall, the chip contained 35 million phase-change memory devices. The connections amounted to 45 million synapses: a far cry from the human brain, but very impressive for a 14-nanometer chip.

These mind-numbing numbers present a problem for initializing the AI chip: there are simply too many parameters to search through. The team tackled the problem with what amounts to an AI kindergarten, pre-programming synaptic weights before computations begin. (It’s a bit like seasoning a new cast-iron pan before cooking with it.)

They “tailored their network-training techniques with the benefits and limitations of the hardware in mind,” and then set the weights to get the best possible results, explained Wang, who was not involved in the study.
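The study’s exact training recipe is not described here, so the sketch below shows a stand-in instead: noise injection, one widely used form of hardware-aware training. During training, the weight is perturbed with random noise, so the model settles on values that still work when an imprecise analog device stores them. The one-weight linear model, noise level, learning rate, and data are all made up for illustration; this is not IBM’s method.

```python
import random


def train_with_weight_noise(data, noise_std=0.05, lr=0.05, epochs=200, seed=0):
    """Fit y ~ w * x by gradient descent, adding Gaussian noise to w in each forward pass.

    Illustrative stand-in for hardware-aware training: the noisy weight mimics an
    imprecise analog device, while the clean weight `w` is what would finally be
    programmed onto the hardware.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    w = 0.0
    for _ in range(epochs):
        for x, y in data:
            w_noisy = w + rng.gauss(0.0, noise_std)  # forward pass sees a perturbed weight
            error = w_noisy * x - y
            grad = 2 * error * x                     # derivative of squared error w.r.t. the weight
            w -= lr * grad                           # update the clean weight
    return w


if __name__ == "__main__":
    data = [(x / 10, 3.0 * x / 10) for x in range(1, 11)]  # made-up points on y = 3x
    print(f"learned weight: {train_with_weight_noise(data):.3f}")
```

Because the noise averages out across many updates, the clean weight still lands close to the true value while the model has only ever “seen” imprecise copies of it.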

It worked out. In one initial test, the chip churned through 12.4 trillion operations per second for each watt of power. That energy efficiency is “tens or even hundreds of times higher than for the most powerful CPUs and GPUs,” said Wang.
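Because a watt is one joule per second, operations per second per watt is simply operations per joule. Inverting the reported figure therefore gives the energy spent on each operation; the helper below, whose name is made up for this illustration, does only that unit conversion.

```python
def energy_per_op_femtojoules(tops_per_watt: float) -> float:
    """Convert TOPS/W (tera-operations per second per watt) to femtojoules per operation.

    1 W = 1 J/s, so TOPS/W is the same as tera-operations per joule.
    """
    ops_per_joule = tops_per_watt * 1e12
    joules_per_op = 1.0 / ops_per_joule
    return joules_per_op * 1e15  # 1 J = 1e15 fJ


if __name__ == "__main__":
    print(f"{energy_per_op_femtojoules(12.4):.1f} fJ per operation")
```

For 12.4 trillion operations per second per watt, that works out to roughly 80 femtojoules per operation.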

The chip nailed a core computation underlying deep neural networks, the multiply-accumulate operation, with just a few classical hardware components in the memory tiles. In contrast, traditional computers need hundreds or thousands of transistors (the basic switching elements that perform calculations) to do the same job.
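Why so few components? In an idealized analog crossbar, each weight is stored as a device conductance G_ij. Applying input voltages V_i along the rows makes each device pass a current G_ij × V_i (Ohm’s law), and the currents meeting on a column wire add up by themselves (Kirchhoff’s current law). The column current I_j, the sum over i of G_ij × V_i, is already the multiply-accumulate result. The simulation below assumes perfect, noise-free devices and made-up values; it is not a model of IBM’s circuitry.

```python
from typing import List


def crossbar_column_currents(conductances: List[List[float]], voltages: List[float]) -> List[float]:
    """Idealized analog crossbar: I_j = sum_i G[i][j] * V[i].

    conductances[i][j] is the device at row i, column j (siemens);
    voltages[i] is the input applied to row i (volts).
    Returns the current collected on each column (amperes).
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for i, v in enumerate(voltages):
        for j in range(n_cols):
            # Ohm's law: each device passes a current proportional to its conductance.
            # Kirchhoff's current law: currents meeting on a column wire simply add.
            currents[j] += conductances[i][j] * v
    return currents


if __name__ == "__main__":
    G = [[1e-6, 2e-6],
         [3e-6, 4e-6]]  # hypothetical conductances, in siemens
    V = [0.2, 0.1]      # hypothetical input voltages, in volts
    print(crossbar_column_currents(G, V))
```

In a real chip the column currents are noisy and must be converted back to digital numbers; the sketch leaves all of that out.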

Talk of the Town

The team next challenged the chip to two speech recognition tasks. Each one stressed a different facet of the chip.

The first test measured speed on a relatively small database (“small” is relative in the deep learning universe). Using the Google Speech Commands database, the task required the AI chip to spot 12 keywords in a set of roughly 65,000 clips of thousands of people speaking 30 short words. On an accepted benchmark, MLPerf, the chip performed seven times faster than in previous work.

The chip also shone when challenged with a large database, Librispeech. The corpus contains over 1,000 hours of read English speech commonly used to train AI for parsing speech and automatic speech-to-text transcription.

Overall, the team used five chips to encode more than 45 million weights spread across 140 million phase-change devices. When pitted against conventional hardware, the system was roughly 14 times more energy-efficient, processing nearly 550 samples every second per watt of power, with an error rate a bit over 9 percent.
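Transcription accuracy is conventionally scored as word error rate (WER): the minimum number of word substitutions, deletions, and insertions needed to turn the system’s transcript into the reference transcript, divided by the number of reference words. The sketch below computes it with the standard edit-distance recurrence; the function name and example sentences are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # dist[i][j] = edit distance between the first i reference words and first j hypothesis words.
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            substitution = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,                 # deletion
                dist[i][j - 1] + 1,                 # insertion
                dist[i - 1][j - 1] + substitution,  # match or substitution
            )
    return dist[len(ref)][len(hyp)] / len(ref)


if __name__ == "__main__":
    ref = "the cat sat on the mat"
    hyp = "the cat sat on a mat"
    print(f"WER: {word_error_rate(ref, hyp):.1%}")
```

By this measure, an error rate a bit over 9 percent means roughly one word in eleven needs fixing.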

Although impressive, analog chips are still in their infancy. They show “enormous promise for combating the sustainability problems associated with AI,” said Wang, but the path forward requires clearing a few more hurdles.

One factor is finessing the design of the memory technology itself and its surrounding components, that is, how the chip is laid out. IBM’s new chip does not yet contain all the elements needed. The next critical step is integrating everything onto a single chip while maintaining its efficiency.

On the software side, we’ll also need algorithms specifically tailored to analog chips, and software that readily translates code into instructions these machines can understand. As these chips become increasingly commercially viable, developing dedicated applications will keep the dream of an analog chip future alive.

“It took decades to shape the computational ecosystems in which CPUs and GPUs operate so successfully,” said Wang. “And it will probably take years to establish the same sort of environment for analog AI.”

Image Credit: Ryan Lavine for IBM