Unlike Samantha in the Movie Her, Artificial Intelligence Will Have a Body

The recent movie Her chronicles the romantic relationship between a man, Theodore Twombly, and an intelligent operating system named Samantha in the year 2025. Samantha is not just smart, she’s empathetic. She’s not static, she’s adaptive. Samantha, in short, is strong artificial intelligence in every sense.

But there’s a problem, says Ray Kurzweil, the well-known futurist, some of whose concepts inspired the film. Theodore’s only interaction with Samantha is by way of a small earpiece. To Theodore, Samantha is no more than a disembodied voice.

Why's that a problem? By the time advanced AI like Samantha is possible, it’ll also be possible to give her a realistic digital body. That is, Theodore should be able to talk to Samantha and watch her expressions and body language. Samantha will have form and fashion, and her interaction with Theodore will be even richer than it is in the film.

I suspect the choice to deprive Samantha of a body may have been a cinematic decision. The film very elegantly explores AI without leaning too heavily on CGI. And as Kurzweil points out, the fact that Theodore has a body and Samantha doesn't is a prime source of dramatic tension throughout the plot. But Kurzweil's larger point is well taken.

In the real world, Samantha probably will have a body.

We’re closer to making photorealistic digital characters than we are to making AIs with Samantha’s impressive powers. Last year, for example, we wrote about a digital Audrey Hepburn resurrected at the height of her charm for a chocolate commercial. CGI is climbing out of the uncanny valley. Digital Audrey was terribly cute, not terribly creepy.

However, although Audrey's looks were impressive, her actions and expressions were carefully choreographed behind the scenes. That’s not enough for avatars representing people or AIs. Future characters need to be both photorealistic and adaptive.

If the intelligence (human or otherwise) behind an avatar finds something humorous, but only a little, a subtle smirk may be in order. If they’re surprised, the eyebrows might jump and nostrils flare, while the head jolts back a fraction. For smooth interaction, all this needs to happen with as little latency as possible, in nearly real time. (Think of how disruptive a slight phone delay can be.)

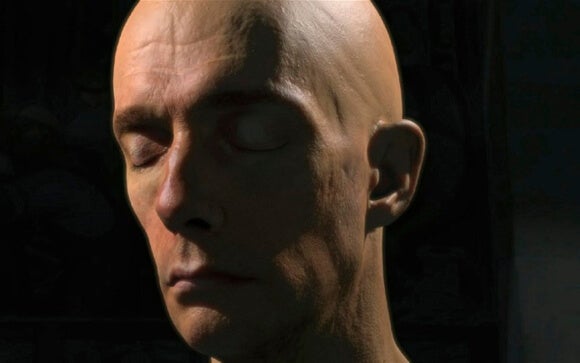

Another project, however, amply demonstrates how photorealistic, real-time digital characters may be coming soon. Digital Ira, created at the University of Southern California (USC), is a nearly photorealistic digital character that draws upon an encyclopedia of expressions to more closely resemble a human being—communicating emotion and physical quirks in high fidelity onscreen.

Digital Ira's USC project page says, "We tried to create a real-time, photoreal digital human character which could be seen from any viewpoint, any lighting, and could perform realistically from video performance capture even in a tight closeup."

The Digital Ira demo is impressive. But back to reality for a moment.

Digital Ira is just a head, not an entire body. The character can’t yet represent a human in real time, and he's expensive to produce. Ira was made by bringing an actor into the studio and painstakingly recording him performing improvised lines in high definition from multiple perspectives using USC's Light Stage X system. Post-production was even more complicated and time-consuming.

As IEEE Spectrum notes, it’s still more economical to hire George Clooney than to reproduce him in binary. But the cost of creating realistic digital characters depends heavily on computing power, so as computing gets cheaper, the time and money required should fall in coming years.

Entertainment is an obvious market for photorealistic characters. Digital Ira is a glimpse of next-generation, choreographed film or video game characters. Big productions with a budget. But a little further on? We might all get our very own Digital Ira.

As powerful processors and clever programming bring down the cost and difficulty of digital embodiment, a new industry might spring up. For a fee, we'll spend a day in the studio and come away with a customized, photorealistic avatar. Maybe we won't even need to go into the studio, but will instead make avatars from the comfort of home (possibly using 3D scanners).

We might use these avatars to interact with other people (for fun or business) in some virtual world, like an advanced Second Life. But our AIs will get a body too. AIs and humans will meet and interact virtually—or even in the real world.

The future equivalent of Theodore Twombly might well combine his earpiece with a pair of (stylish) augmented reality glasses that overlay Samantha (or a human friend who isn't present) on the real world. She could walk next to him, in front of him—anywhere she pleases. He couldn’t touch her, but he could look at and interact with her image.

And his friends could too.

There’s a scene where Samantha and Theodore go on a picnic with another couple. Similarly equipped with earpieces, the couple can converse with and hear Samantha. If they're wearing augmented reality glasses, they could see her as well.

And then there are the more immersive possibilities provided by virtual reality.

With a pair of VR goggles like the Oculus Rift and a (yet to be invented) haptic suit for physical feedback, Theodore and Samantha could project their avatars into the world of their choosing. Even if it weren't a fully faithful reproduction of the material world, their interaction would be more fulfilling than a voice in an earpiece.

We're making progress on artificial intelligence. But Kurzweil estimates an AI like Samantha won't show up until 2029, rather than the film's 2025. Meanwhile, we're fast developing realistic digital characters, augmented reality, and virtual reality right now.

By the time strong AI like Samantha hits the scene? You can bet she'll have a body, and interacting with her will go beyond a simple earpiece.

Image Credit: NVIDIA, Galaxy Chocolate/YouTube

Jason is editorial director at SingularityHub. He researched and wrote about finance and economics before moving on to science and technology. He's curious about pretty much everything, but especially loves learning about and sharing big ideas and advances in artificial intelligence, computing, robotics, biotech, neuroscience, and space.
